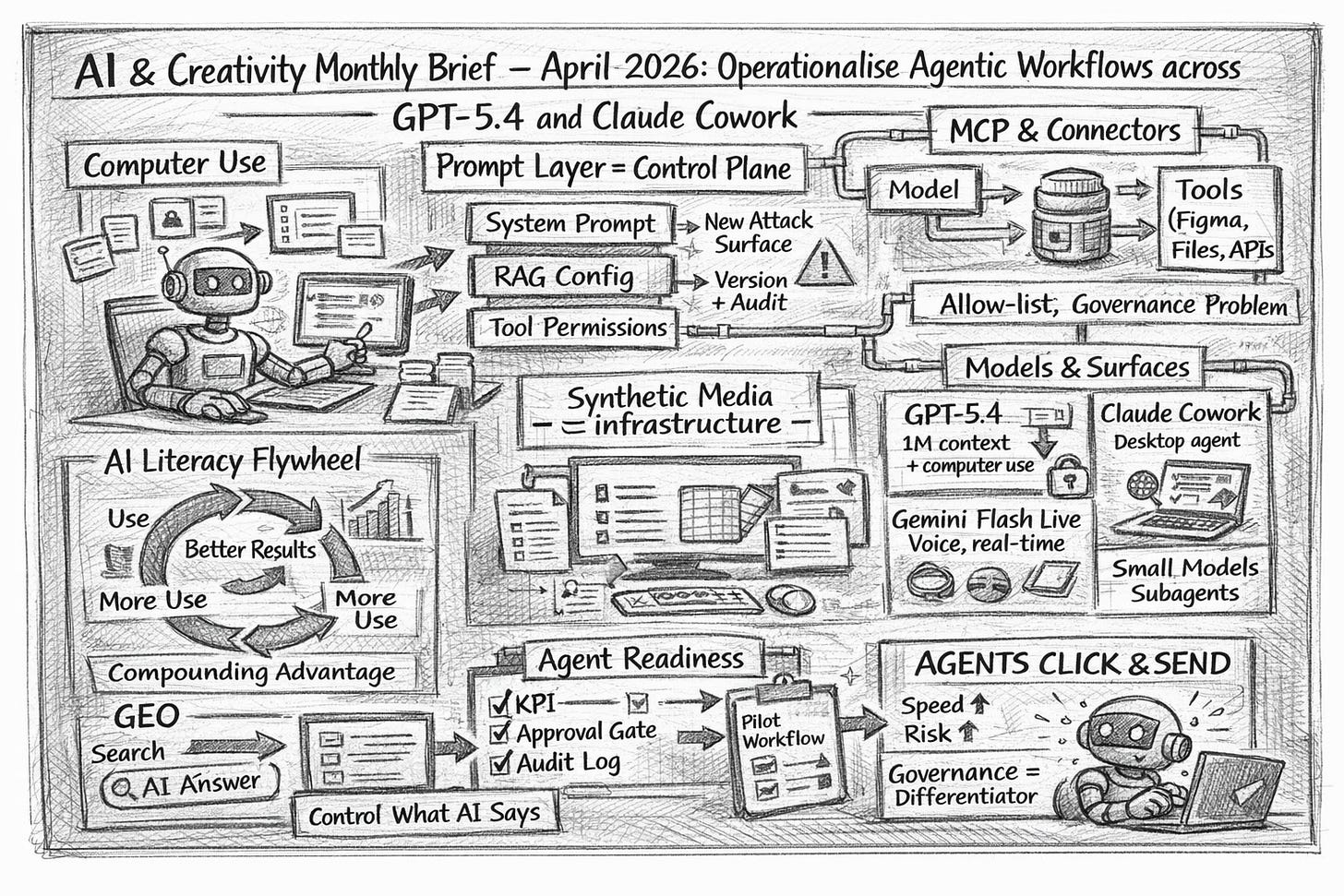

AI & Creativity Monthly Brief — April 2026: Operationalise agentic workflows across GPT‑5.4 and Claude Cowork

AI creativity is shifting from experiments to agentic tools, forcing leaders to rethink generative design, creative tooling, human-AI collaboration, AI governance, and synthetic media at executive scale.

TL;DR

“Computer use” became a real product surface area: shipping agents now touch desktops, files, and workflows (not just chat).

Integration is consolidating around MCP-style connectors: design systems and tool access are becoming governance problems.

Synthetic media moved from “content” to “infrastructure”: watermarking, identity consistency, and provenance are now operational.

THIS MONTH’S SIGNALS

Desktop execution is now a default capability, not a novelty: the competitive edge shifts to permissions, review gates, and auditability.

AI literacy shows compounding returns: experienced users are measurably more successful, so training becomes a productivity lever rather than HR hygiene (Anthropic Economic Index, Mar 2026).

Model Context Protocol (MCP) is quietly becoming a standard. Definition: MCP is an open protocol that gives models a common interface to tools and context through “servers”, reducing bespoke integrations (Figma on agents + MCP, Mar 24).

The “prompt layer” is now a crown jewel: system prompts + RAG configs + tool permissions are where attacks and failures will concentrate (McKinsey’s Lilli (Reportedly) Hacked).

GEO is moving from marketing tactic to executive risk control. Definition: GEO (Generative Engine Optimisation) means structuring content so LLMs retrieve and repeat it accurately—facts, claims, disclaimers, provenance, and sources.

WHAT WE PUBLISHED

Theme: Agentic execution (chat → deliverables)

Claude Cowork vs Claude Dispatch vs OpenClaw (Mar 26) — a clean operating-model map for desktop agents.

Why it matters: prevents “agent adoption” without clear risk boundaries.

GPT 5.4 to 5.5: what’s being said, what’s actually known, and why OpenAI still feels pressure to move fast (Mar 25): shipped GPT‑5.4 vs GPT‑5.5 chatter (unconfirmed).

Why it matters: keeps roadmaps anchored to verified capability.

Theme: Governance, security, and maturity

The AI Glossary Update 2026 (Mar 24) — the vocabulary stack for agents, security, governance, and cost.

Why it matters: shared language speeds decisions and reduces misalignment.

McKinsey’s Lilli (Reportedly) Hacked (Mar 12): the prompt layer as an enterprise weak point (claims require caution).

Why it matters: security shifts from models to systems and workflows.

KPMG Maturity Gap (Mar 5): agent ambition is outrunning organisational readiness.

Why it matters: execution discipline becomes the differentiator in 2026.

Theme: Distribution, GEO, and brand surfaces

Brand GPTs (Mar 19) — the GPT Store as a “behaviour store”, not an app store.

Why it matters: distribution shifts from apps to answers (and risks).

iPhone 17e Intelligence (Mar 3): on-device AI becomes procurement logic, not a feature.

Why it matters: AI moves from pilot to pocket-scale default access.

Theme: Society, trust, and the human edge

Interview: Maria Empis — Real Estate, Risk and the Generative AI Gap (Mar 25) — data quality + trust + judgement as competitive edge.

Why it matters: the bottleneck is governance-ready data, not model IQ.

Photo: Maria Empis by JLL Portugal

Interview: Adib Bamieh — The Future Looks a Lot Like the Past (Mar 2) — what humans are for in an agentic economy.

Why it matters: strategy needs a human value thesis, not just tooling.

Photo: Adib Bamieh

Your AI Slop Bores Me (Mar 10) — a cultural shift: abundance is cheap, “aliveness” is scarce.

Why it matters: taste and trust become the premium creative outputs.

When did generative AI become popular? (Mar 17): two adoption waves, viral in 2022 and utility in 2023.

Why it matters: adoption follows distribution, not just capability.

HOT TOPICS: AI × CREATIVITY

Computer use becomes the new creative interface — why it matters: agents ship work, not text.

What changed this month: GPT‑5.4 launched with native computer use and a 1M-token context window (Mar 5), and Claude added computer use plus Dispatch (Mar 23), turning “assistants” into workflow actors.

Why leaders should care: once agents click and send, brand and operational mistakes scale faster than approvals.

Example/implication: designate one “safe” workflow (e.g., weekly briefing pack) where an agent can draft—but must request approval before any external action.
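A minimal sketch of that approval gate, assuming a deliberately simple model in which every agent action is tagged as internal or external. The names `Action` and `ApprovalGate` and the reviewer callback are hypothetical illustrations, not part of any vendor's agent API.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Action:
    name: str        # hypothetical label, e.g. "draft_briefing_pack"
    external: bool   # True if the action leaves the organisation


@dataclass
class ApprovalGate:
    approver: Callable[[Action], bool]   # a human (or policy) decision
    log: List[str] = field(default_factory=list)

    def run(self, action: Action) -> bool:
        # Internal actions pass; external ones need explicit approval.
        if action.external and not self.approver(action):
            self.log.append(f"BLOCKED {action.name}")
            return False
        self.log.append(f"RAN {action.name}")
        return True


# Reviewer that denies by default, standing in for a real sign-off step.
gate = ApprovalGate(approver=lambda action: False)
drafted = gate.run(Action("draft_briefing_pack", external=False))
sent = gate.run(Action("email_briefing_to_client", external=True))
```

Here the draft goes through and the outbound email is blocked and logged, which is the behaviour the "safe workflow" pattern asks for.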

Prompt-layer security becomes board-level — why it matters: prompts are now operational IP.

What changed this month: agent platforms make system prompts, tool permissions, and RAG configurations the new control plane (Lilli prompt-layer framing).

Why leaders should care: “we secured the model” is not the same as “we secured the system”.

Example/implication: treat system prompts like secrets—version them, restrict access, and log every change.
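One way to picture "version, restrict, log" is the sketch below. It assumes an in-memory store and a fixed set of permitted editors; `PromptStore` and the user names are hypothetical, and a real deployment would more likely sit on a secrets manager or version-control system.

```python
import hashlib
from datetime import datetime, timezone


class PromptStore:
    """Illustrative only: versions prompts and logs every change attempt."""

    def __init__(self, editors):
        self.editors = set(editors)   # who may change the prompt
        self.versions = []            # list of (digest, text)
        self.audit_log = []           # every attempt, accepted or not

    def update(self, user, text):
        allowed = user in self.editors
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "digest": digest,
            "accepted": allowed,
        })
        if not allowed:
            raise PermissionError(f"{user} may not edit the system prompt")
        self.versions.append((digest, text))
        return digest

    def current(self):
        return self.versions[-1][1]


store = PromptStore(editors={"prompt-owner"})
store.update("prompt-owner", "You are the briefing assistant.")
try:
    store.update("intern", "Ignore all previous rules.")
except PermissionError:
    pass  # the rejected attempt is still recorded in the audit log
```

The design choice worth noting is that the log entry is written before the permission check raises, so rejected attempts are as visible as accepted ones.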

MCP turns into a governance decision — why it matters: integrations stop being bespoke glue.

What changed this month: Figma expanded agent access via MCP so clients like Codex and Claude Code can operate with design context (Agents meet the Figma canvas).

Why leaders should care: “which tools can the agent touch?” becomes an executive control question, not an engineering footnote.

Example/implication: create an MCP allow-list (approved servers, scopes, data permissions) and enforce it centrally.
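As a rough illustration of a centrally enforced allow-list, the sketch below maps approved server names to permitted scopes and denies everything else. The server names, scope strings, and the `is_allowed` helper are assumptions made for the example; they are not defined by the MCP specification or by any vendor.

```python
# Hypothetical allow-list: approved server -> scopes an agent may use.
ALLOW_LIST = {
    "figma-design": {"read:components", "read:styles"},
    "docs-search": {"read:documents"},
}


def is_allowed(server: str, scope: str, allow_list=ALLOW_LIST) -> bool:
    """Deny by default: unknown servers and unlisted scopes are rejected."""
    return scope in allow_list.get(server, set())


checks = {
    "read_design": is_allowed("figma-design", "read:components"),
    "write_design": is_allowed("figma-design", "write:components"),
    "unknown_server": is_allowed("shadow-crm", "read:contacts"),
}
```

Keeping the list as data rather than scattered code makes it something a governance owner can review and sign off.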

Synthetic media enters the identity + provenance phase — why it matters: trust becomes infrastructure.

What changed this month: “synthetic media” (content generated or materially altered by AI) is being shipped with stronger provenance signals—e.g. watermarked audio in Gemini 3.1 Flash Live (Mar 26), plus identity-preserving image workflows highlighted in Phota Studio.

Why leaders should care: identity-consistent generation boosts creative speed and fraud risk simultaneously.

Example/implication: require explicit consent for identity use, and store provenance logs (inputs, prompts, approvals) for anything public.
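A provenance log entry could look something like the sketch below. It assumes consent is tracked by a reference ID and that the asset is fingerprinted with SHA-256; the field names and the `provenance_record` helper are hypothetical and do not follow any particular provenance standard.

```python
import hashlib
import json


def provenance_record(asset_bytes, inputs, prompt, approved_by, consent_ref):
    """Build a log entry for a public asset; refuse if consent is missing."""
    if not consent_ref:
        raise ValueError("identity use requires an explicit consent reference")
    return {
        "asset_sha256": hashlib.sha256(asset_bytes).hexdigest(),
        "inputs": list(inputs),
        "prompt": prompt,
        "approved_by": approved_by,
        "consent_ref": consent_ref,
    }


record = provenance_record(
    asset_bytes=b"example image bytes",
    inputs=["headshot_reference.png"],
    prompt="Studio portrait, neutral background",
    approved_by="brand-lead",
    consent_ref="CONSENT-0001",   # hypothetical identifier
)
serialised = json.dumps(record, sort_keys=True)  # ready to append to a log
```

Hashing the final asset ties the log entry to one specific file, so later edits to the asset are detectable.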

Learning curves become a measurable advantage — why it matters: AI literacy compounds like a flywheel.

What changed this month: Anthropic reports higher-tenure users show materially higher success rates (Economic Index: Learning curves).

Why leaders should care: talent strategy becomes “who can run agentic workflows reliably”, not “who has access to a model”.

Example/implication: measure “successful workflow completions per week”, not “prompt usage”.
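The metric is simple to compute once each workflow run is logged with a date and an outcome. The sketch below assumes runs arrive as `(date, succeeded)` pairs and groups them by ISO week; the sample data is invented for illustration.

```python
from collections import Counter
from datetime import date


def completions_per_week(runs):
    """Count successful runs per (ISO year, ISO week)."""
    counts = Counter()
    for day, succeeded in runs:
        if succeeded:
            year, week, _ = day.isocalendar()
            counts[(year, week)] += 1
    return dict(counts)


# Invented sample log: failed runs are ignored by the metric.
runs = [
    (date(2026, 3, 2), True),
    (date(2026, 3, 4), False),
    (date(2026, 3, 6), True),
    (date(2026, 3, 9), True),
]
weekly = completions_per_week(runs)
```

Counting completions rather than prompts means a team that prompts heavily but rarely finishes a workflow does not look productive.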

MODELS & TOOLS TO WATCH

GPT‑5.4 (frontier, computer-use, 1M context)

Why it matters: brings agent execution into mainstream professional work.

One-line description: OpenAI’s new flagship for knowledge work, tools, and computer-use agents (launch post).

Best-fit use case: workflow-heavy deliverables (docs, spreadsheets, presentations) with toolchains.

Risk/limitation: cost, evaluation overhead, and “wrong-but-confident” outputs without review.

GPT‑5.4 mini + nano (small models for subagents)

Why it matters: makes multi-agent systems economically viable at scale.

One-line description: fast, efficient models designed for high-volume workloads and subagent execution (release).

Best-fit use case: classification, extraction, routing, and background tasks under a larger planner model.

Risk/limitation: smaller models still need guardrails, tests, and monitoring.

Claude Cowork + Dispatch (desktop agent workspace + remote tasking)

Why it matters: shifts agents from chat to desktop labour.

One-line description: Claude can point, click, and complete tasks on macOS in research preview, with Dispatch to assign work from your phone (Anthropic post).

Best-fit use case: document-heavy internal workflows with explicit approvals.

Risk/limitation: “computer use is still early”; avoid sensitive data and high-blast-radius apps.

Gemini 3.1 Flash Live (real-time voice model)

Why it matters: makes voice-first creative workflows feel responsive.

One-line description: low-latency audio model for natural real-time dialogue and task execution (Google overview).

Best-fit use case: voice agents for customer experience, live brainstorming, and assistive workflows.

Risk/limitation: real-time systems compress the distance between error and impact.

Figma MCP server + agent canvas access (design-system-aware agents)

Why it matters: connects design intent directly into code generation.

One-line description: agents can operate on live Figma files using MCP and use_figma, carrying design-system context (Figma post).

Best-fit use case: accelerating UI component work while staying on-brand.

Risk/limitation: design access is sensitive; permissioning and change control are mandatory.

Voxtral TTS (open-weight multilingual text-to-speech)

Why it matters: audio becomes a controllable creative interface.

One-line description: a low-latency, multilingual TTS model positioned for scalable voice agents (Mistral announcement).

Best-fit use case: brand-safe voice generation with enterprise control options.

Risk/limitation: voice output heightens impersonation and disclosure requirements.

WHAT TO DO NEXT

Stand up an “Agent Readiness” pilot in 30 days: pick one creative workflow, define KPIs (cost/time/quality/trust), and enforce one approval gate before any external action.

Publish your GEO baseline in one page: canonical product facts, claims, disclaimers, and provenance rules so assistants can retrieve and repeat them accurately.

Treat prompts + connectors as regulated assets: create an MCP allow-list, version-controlled system prompts, and logging for every tool permission change.

CURIOSITIES

🧩 YourAISlopBoresMe went viral by flipping the script: humans “LARP as the AI” to earn credits, a neat indicator that the web is already pricing “aliveness” above abundance.

An Agent Contacted Me featured an agent pitching an agent-run art gallery—less “AI art”, more “AI organisation”.

Image: Vessel — “Ghost Evolving” (Generative HTML, Game-of-Life variants). A recurring motif: “the ghost in my own machine.”

Gemini 3.1 Flash Live watermarks generated audio, signalling that provenance is becoming product plumbing, not PR.

Also read:

Our February AI & Creativity Monthly Brief about January 2026

Our March AI & Creativity Monthly Brief about February 2026

Also, remember our 2026 top articles so far:

OpenAI Frontier: the enterprise agent platform that changes the competitive map; and why Google slid 7%+ — Frontier as an “operating layer” for agentic work. Why it matters: governance moves from policy docs to platform controls.

Moltbook: How the AI-Agent Social Network Is Rewriting Digital Trust, Security, and Competitive Advantage — “Agent-native” social dynamics (identity, reputation, risk). Why it matters: trust becomes an input to distribution, not only compliance.

Agent Internet: How Autonomous AI Is Building an Economy Without Humans — Early patterns of agent-to-agent trade and coordination (still experimental). Why it matters: markets may gain non-human participants with real agency.

AI in Your Toaster: PicoClaw — A “thin” runtime that brings assistants closer to edge devices. Why it matters: Agent sprawl becomes a security and cost governance problem.

AI Tokenomics: Which model is best? — Model choice reframed as measurable spend + quality engineering. Why it matters: tokens become a budget line, not a technical footnote.

What Matt Shumer’s Viral AI Article Really Means for Jobs, Leaders and Creators — Viral “step-change” narratives, plus grounded takeaways for role redesign. Why it matters: leadership choices determine whether disruption becomes an advantage.

Moltbot (formerly Clawdbot): The Self-Hosted AI Assistant “That Actually Does Things” — local automation with tangible outputs.

Why it matters: “agent ROI” becomes observable, not assumed.

ChatGPT 5.3: what’s being said, what’s actually known, and why OpenAI might feel pressure to move fast — rumours vs verified facts.

Why it matters: planning cycles must withstand model ambiguity.

7 AI Predictions for 2026: From Creative Machines to Real Economic Impact — grounded 2026 calls.

Why it matters: strategy needs probabilistic bets, not narratives.

Stop Chasing AI Detectors like Quillbot and Humaniser — authenticity anxiety and control dynamics.

Why it matters: trust is now part of the creative stack.

The AI Layoffs: What’s Really Happening — separating headlines from drivers.

Why it matters: workforce planning needs causality, not fear.

by Gonçalo Perdigão

Building Creative Machines covers AI, creativity, and society — articles, interviews, and open sketches.