GPT 5.4 to 5.5: what’s being said, what’s actually known, and why OpenAI still feels pressure to move fast

GPT-5.4 didn’t just upgrade ChatGPT. It rewired the product around tools, long context, and real deliverables.

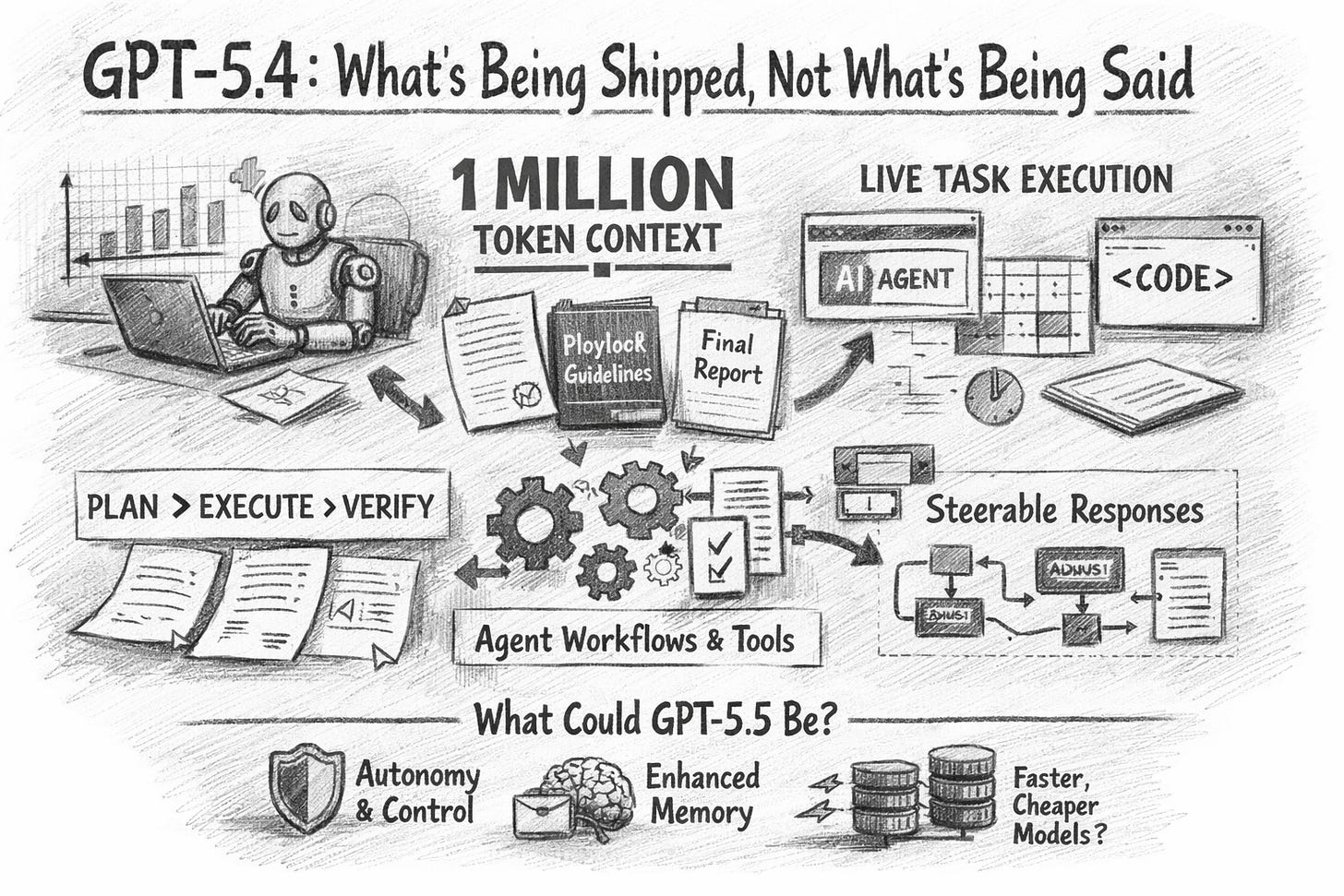

GPT-5.4: what’s being shipped, not what’s being said

When I wrote the 5.3 piece, the central argument was simple: ignore vibes, separate signals, and assume the next point-release would land where the competitive pressure is hottest—tools, reliability, long context, and “agent-ish” behaviour.

GPT-5.4 is OpenAI making that bet explicit.

Not with a single “wow” feature. With a product shape that quietly changes what “using ChatGPT” even means: less chat, more work execution.

OpenAI’s own description is unusually direct: GPT-5.4 bundles reasoning, coding, and agentic workflows into one frontier model, improves its performance across tools and software environments, and is built to produce professional artefacts (documents, spreadsheets, presentations) with less back-and-forth.

That last part matters. The model story is now inseparable from the workflow story.

1) The two headline capabilities that actually move markets

A. Native computer use (not “tool calling” as theatre)

OpenAI frames GPT-5.4 as its first general-purpose model with native computer-use capabilities, designed for agents that operate across applications.

Translation: it’s not just “I can call an API”. It’s “I can execute steps in a software environment in a way that looks like work”.

If you run operations, finance, sales ops, legal ops, analytics, or any function that lives inside a browser + spreadsheets + internal tools, this is the pivot.

B. 1M token context (the real reason ‘point releases’ matter)

In the 5.3 article, “bigger context” was a staple of rumour. GPT-5.4 makes it official: up to 1M tokens.

This isn’t a “read bigger PDFs” flex. It’s what enables long-horizon planning + execution + verification without the model collapsing into amnesia midstream. In practice, it’s the difference between:

summarising a contract pack

and running a contract workflow (extract clauses → compare to playbook → flag deviations → draft redlines → produce a memo)
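To make the contract workflow concrete, here is a minimal Python sketch of that chain of steps. Everything in it is an illustrative assumption: the function names, the toy playbook, and the string matching stand in for work a model would do, and no OpenAI API is called.

```python
from dataclasses import dataclass

# Hypothetical playbook: clause name -> wording the organisation accepts.
PLAYBOOK = {
    "payment_terms": "30 days",
    "liability_cap": "12 months of fees",
}


@dataclass
class Deviation:
    clause: str
    expected: str
    found: str


def extract_clauses(contract: dict) -> dict:
    """Stand-in for model-driven extraction: keep only clauses the playbook covers."""
    return {name: text for name, text in contract.items() if name in PLAYBOOK}


def flag_deviations(clauses: dict) -> list:
    """Compare each extracted clause to the playbook and collect mismatches."""
    return [
        Deviation(name, PLAYBOOK[name], text)
        for name, text in clauses.items()
        if text != PLAYBOOK[name]
    ]


def draft_redlines(deviations: list) -> list:
    """Turn each deviation into a proposed change."""
    return [f"{d.clause}: replace '{d.found}' with '{d.expected}'" for d in deviations]


def write_memo(redlines: list) -> str:
    """Produce the final artefact: a short memo listing the proposed changes."""
    if not redlines:
        return "No deviations from playbook."
    return "Proposed redlines:\n" + "\n".join(f"- {r}" for r in redlines)


def run_contract_workflow(contract: dict) -> str:
    """Chain the steps: extract, compare, flag, redline, memo."""
    clauses = extract_clauses(contract)
    deviations = flag_deviations(clauses)
    return write_memo(draft_redlines(deviations))
```

The point of the sketch is the shape, not the logic: each step hands a structured result to the next, which is only workable if the earlier steps are still in context when the later ones run.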

2) The quiet feature: steerability while the model is “thinking”

OpenAI also slipped in a product behaviour change that sounds small until you use it for real work: GPT-5.4 Thinking can give an upfront plan, then you can adjust direction mid-response, then it finalises.

This is not cosmetic.

It turns the model into something closer to:

a junior analyst who shows you the approach before burning hours, and

a workflow engine you can steer before it commits to the wrong path.

This is exactly the kind of friction reduction that makes adoption explode inside organisations: less “prompt craft”, more “review and steer”.

What my 5.3 piece got right (and what it didn’t)

Let’s treat this like an investment memo: what was confirmed, what was noise, and what changed between 5.3 and 5.4.

1) Confirmed: the “most realistic 5.3 scenario” was basically the roadmap

In the 5.3 article, the grounded expectation was a refinement release pushing further on:

reliability / fewer hallucinations

long-context performance

sturdier tool calling / agent management

coding quality and UI generation

GPT-5.4 is exactly that direction—just more aggressive than the rumour framing suggested.

OpenAI says it specifically focused on improving spreadsheet, presentation, and document creation, as well as agent workflows and tool use.

So the call that mattered wasn’t “Garlic” or “leaked benchmarks”.

It was the strategic logic: the battleground is productivity, and productivity is tools + context + reliability.

2) Partly confirmed: “agents” weren’t marketing. OpenAI productised them

In January, “agent behaviour” was a vague online obsession. GPT-5.4 makes it concrete:

agentic workflows are part of the core positioning

computer use is shipped as a native capability

context is extended to support long-horizon execution

That’s not an incremental feature. That’s a product thesis.

3) Not confirmed (or simply irrelevant now): the rumour scaffolding

The 5.3 article was blunt about the quality of the evidence: social posts aren’t validation, secondary outlets can amplify the same unverified claims, and the sensible stance is to watch official docs.

That part aged well.

What didn’t matter in the end:

codenames and screenshot archaeology

“it’s definitely coming next week” certainty

speculative feature lists presented as inevitabilities

GPT-5.4 didn’t arrive as “the thing the rumour mill promised”.

It arrived as the thing the business pressure demanded.

4) Where 5.3 fits, now that 5.4 exists

OpenAI now clearly positions GPT-5.3 Instant as the fast everyday model, with GPT-5.4 Thinking/Pro as the deeper reasoning options.

So 5.3 didn’t become the dramatic “new flagship moment” the internet wanted.

It became the routing layer’s dependable workhorse.

And that’s an important signal for what comes next: the model family is becoming a portfolio, not a single crown.

The practical shift: GPT-5.4 is an operating model, not a chatbot

Here’s the useful way to think about GPT-5.4 if you’re responsible for outcomes:

Old question: “Is the model smarter?”

New question: “Can it complete a workflow with fewer human turns?”

GPT-5.4’s value shows up when you stop asking for answers and start asking for deliverables.

Three workflow patterns that suddenly work (and how to use them)

1) “Plan → Execute → Verify” as a default prompt structure

Use this when the cost of a mistake is high.

Prompt shape:

Goal (one sentence)

Constraints (budget, timeline, policies, risk limits)

Artefact required (deck outline, spreadsheet structure, memo format)

Verification step (what should be checked, and against what)

Why it works now: the model can hold the plan, keep track of what it’s done, and produce cleaner outputs with less fluff.
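The prompt shape above can be assembled by a small helper, sketched below. The function name, the field labels, and the closing instruction are my own assumptions rather than an official template; the output is plain text you would paste or send as a prompt.

```python
def build_prompt(goal: str, constraints: list, artefact: str, verification: str) -> str:
    """Assemble a Plan -> Execute -> Verify prompt from its four parts."""
    lines = [f"Goal: {goal}", "Constraints:"]
    lines += [f"- {c}" for c in constraints]
    lines.append(f"Artefact required: {artefact}")
    lines.append(f"Verification step: {verification}")
    # Ask for the plan first so it can be reviewed before the work is done.
    lines.append("Share your plan first, then execute it, then report the verification result.")
    return "\n".join(lines)


prompt = build_prompt(
    goal="Recommend a pricing change for the starter tier.",
    constraints=["Budget under 50k", "Decision needed in two weeks"],
    artefact="A one-page memo",
    verification="Check every figure against the attached revenue sheet.",
)
print(prompt)
```

Keeping the four parts as separate arguments makes it harder to skip the verification step, which is the part most often left out.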

2) “Tool-first” tasks: treat ChatGPT as a coordinator

If you have web research, internal docs, a spreadsheet, and a slide deck, don’t do sequential prompting.

Ask for:

the tool sequence

the intermediate artefacts

a final compiled deliverable

The “agentic” win is not autonomy. It’s coordination.
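Coordination, in this sense, is an ordered list of tool steps where each step can see what earlier steps produced. A minimal sketch under that assumption follows; the `run_sequence` helper and the lambda "tools" are hypothetical stand-ins for real search, spreadsheet, and slide tools.

```python
from typing import Callable, Dict, List, Tuple

# A tool takes the artefacts produced so far and returns a new artefact.
Tool = Callable[[Dict[str, str]], str]


def run_sequence(steps: List[Tuple[str, Tool]]) -> Dict[str, str]:
    """Run tools in order; each tool sees every artefact produced so far."""
    artefacts: Dict[str, str] = {}
    for name, tool in steps:
        artefacts[name] = tool(artefacts)
    return artefacts


# Stand-in tools: real ones would call search, a spreadsheet, a slide builder.
steps = [
    ("research_notes", lambda a: "three competitor price points"),
    ("pricing_sheet", lambda a: f"table built from {a['research_notes']}"),
    ("final_deck", lambda a: f"deck summarising {a['pricing_sheet']}"),
]

result = run_sequence(steps)
print(result["final_deck"])
```

The returned dictionary keeps every intermediate artefact, so a reviewer can inspect the research notes and the sheet as well as the final deck.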

3) “Long-context compression”: one ingestion, many outputs

With a 1M token ceiling, the game becomes:

ingest once

produce multiple views for different stakeholders

Example:

Board summary (1 page)

Risk register (table)

KPI dashboard spec (bullets)

Draft email to customers (tone-controlled)

This is where the ROI shows up: not in a single answer, but in removing the repeated context-setting that kills productivity.
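As a rough sketch of "ingest once, many outputs", the snippet below builds one conversation in which the source material appears a single time and each stakeholder view is a short follow-up request. The `role`/`content` message shape is a common chat convention that I am assuming here, not a confirmed detail of any particular API, and the view names are illustrative.

```python
# Hypothetical stakeholder views: name -> instruction.
VIEWS = {
    "board_summary": "Write a one-page board summary.",
    "risk_register": "Produce a risk register as a table.",
    "kpi_dashboard_spec": "Specify a KPI dashboard as bullets.",
    "customer_email": "Draft a customer email in a calm, plain tone.",
}


def build_conversation(context: str, views: dict) -> list:
    """Put the source material first, then one short request per view."""
    messages = [{"role": "user", "content": f"Source material:\n{context}"}]
    for name, instruction in views.items():
        # Follow-ups carry only the instruction; the context is not repeated.
        messages.append({"role": "user", "content": f"[{name}] {instruction}"})
    return messages


conversation = build_conversation("Q3 operating review, full text...", VIEWS)
print(len(conversation))
```

In a live session the model's replies would sit between the follow-up requests; they are left out here to keep the focus on what the human has to type.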

So what could GPT-5.5 be?

Now the fun (and the dangerous) part.

There is no official announcement of GPT-5.5 in the sources that matter. So this section is not “what is”. It’s “what would make sense”, plus “what signals to watch”, plus “what rumours are even worth your attention”.

1) The most plausible 5.5 isn’t “smarter” — it’s more operational

If 5.4 is the “professional work” model, 5.5 would likely push three levers:

A. Better autonomy with tighter controls

Computer use is powerful, but organisations need:

permissioning

audit trails

sandboxing

human approval gates

A 5.5 that doesn’t ship stronger governance would be commercially incomplete.
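Three of those four controls (permissioning, audit trails, human approval gates) can be sketched in a few lines. This is a thought experiment, not a description of any shipped feature: the `GatedAgent` class and its action names are hypothetical, and sandboxing is out of scope for the sketch.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class GatedAgent:
    allowed_actions: set   # permissioning: what the agent may do at all
    needs_approval: set    # approval gate: actions that need a named human
    audit_log: list = field(default_factory=list)

    def request(self, action: str, approved_by: Optional[str] = None) -> bool:
        """Decide whether an action may run, and record the decision either way."""
        if action not in self.allowed_actions:
            outcome = "denied"
        elif action in self.needs_approval and approved_by is None:
            outcome = "pending_approval"
        else:
            outcome = "executed"
        # Audit trail: every request is logged, including refused ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action,
            "approved_by": approved_by,
            "outcome": outcome,
        })
        return outcome == "executed"
```

A caller would construct it with something like `GatedAgent(allowed_actions={"read_report", "send_payment"}, needs_approval={"send_payment"})` and pass `approved_by="cfo"` only once a human has signed off.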

B. Memory that is legible and controllable

“Memory” has been a persistent rumour topic because it’s the unlock for continuity. But enterprise adoption demands:

explicit scopes (“remember for this project only”)

retention windows

compliance-friendly export/erase

explainable “why I remembered this”

If 5.5 arrives, watch for memory framed as a control surface, not magic.
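To show what "memory as a control surface" could look like, here is a small in-memory sketch covering the four demands above: scopes, retention windows, export/erase, and a recorded reason. The `ScopedMemory` class and its method names are hypothetical and say nothing about how OpenAI implements memory.

```python
from dataclasses import asdict, dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class MemoryItem:
    scope: str            # e.g. a single project
    content: str
    reason: str           # the "why I remembered this" explanation
    expires_at: datetime  # end of the retention window


class ScopedMemory:
    def __init__(self) -> None:
        self._items: list = []

    def remember(self, scope: str, content: str, reason: str,
                 keep_for: timedelta, now: Optional[datetime] = None) -> None:
        now = now or datetime.now(timezone.utc)
        self._items.append(MemoryItem(scope, content, reason, now + keep_for))

    def recall(self, scope: str, now: Optional[datetime] = None) -> list:
        """Return unexpired items for one scope only."""
        now = now or datetime.now(timezone.utc)
        return [i for i in self._items if i.scope == scope and i.expires_at > now]

    def export(self, scope: str) -> list:
        """Compliance-style export: everything held for a scope, as plain dicts."""
        return [asdict(i) for i in self._items if i.scope == scope]

    def erase(self, scope: str) -> int:
        """Delete everything held for a scope; return how many items were removed."""
        before = len(self._items)
        self._items = [i for i in self._items if i.scope != scope]
        return before - len(self._items)
```

Nothing crosses scopes unless a caller asks for it by name, which is the property an enterprise buyer would want to be able to verify.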

C. Cost/latency breakthroughs via a multi-model stack

5.4 already points to a portfolio approach (fast Instant, deeper Thinking/Pro). 5.5 could formalise:

background sub-agents

specialised mini/nano routing

parallel execution with a single final synthesis

The win would be speed + cost without losing reliability.
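A speculative sketch of that stack: route each task to a tier, run the sub-agents in parallel, then merge the results into one output. The routing rule (word count), the tier labels, and the helper names are invented for illustration; the only real API used is Python's standard `concurrent.futures.ThreadPoolExecutor`.

```python
from concurrent.futures import ThreadPoolExecutor


def route(task: str) -> str:
    """Toy routing rule: short tasks go to a fast tier, longer ones to a deep tier."""
    return "fast" if len(task.split()) <= 8 else "deep"


def run_subagent(task: str) -> str:
    """Stand-in for a model call: tag the task with the tier that would handle it."""
    return f"[{route(task)}] {task}"


def run_parallel(tasks: list) -> str:
    """Run sub-agents concurrently, then synthesise one final result."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        # pool.map returns results in the same order as the input tasks.
        results = list(pool.map(run_subagent, tasks))
    return "\n".join(results)
```

A real router would weigh cost, latency, and risk rather than word count, but the structure (route, fan out, synthesise once) is the part worth watching for.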

2) What rumours would actually be worth listening to?

Most rumours are content. A few are signals.

The rumours that tend to precede real releases are boring:

model names appearing in official docs, SDKs, or help centre language

changes in product UI that imply new routing behaviour

new evaluation frameworks, system cards, or safety notes that look “pre-release”

Anything else is usually just someone selling certainty.

3) My working hypothesis

If GPT-5.4 is “agents that do knowledge work”, then GPT-5.5, if it comes, will be “agents that do knowledge work safely inside organisations”.

Not a spectacle model. A deployment model.

A request: send me the useful rumours

If you’ve seen credible signals of a “5.5” (screenshots of official docs, SDK references, product UI changes, or enterprise rollout notes), send them.

Not hot takes. Not “my friend said”. The boring evidence.

Because the pattern is clear now: the story isn’t model IQ.

It’s workflows becoming software.
