What Matt Shumer’s Viral AI Article Really Means for Jobs, Leaders and Creators

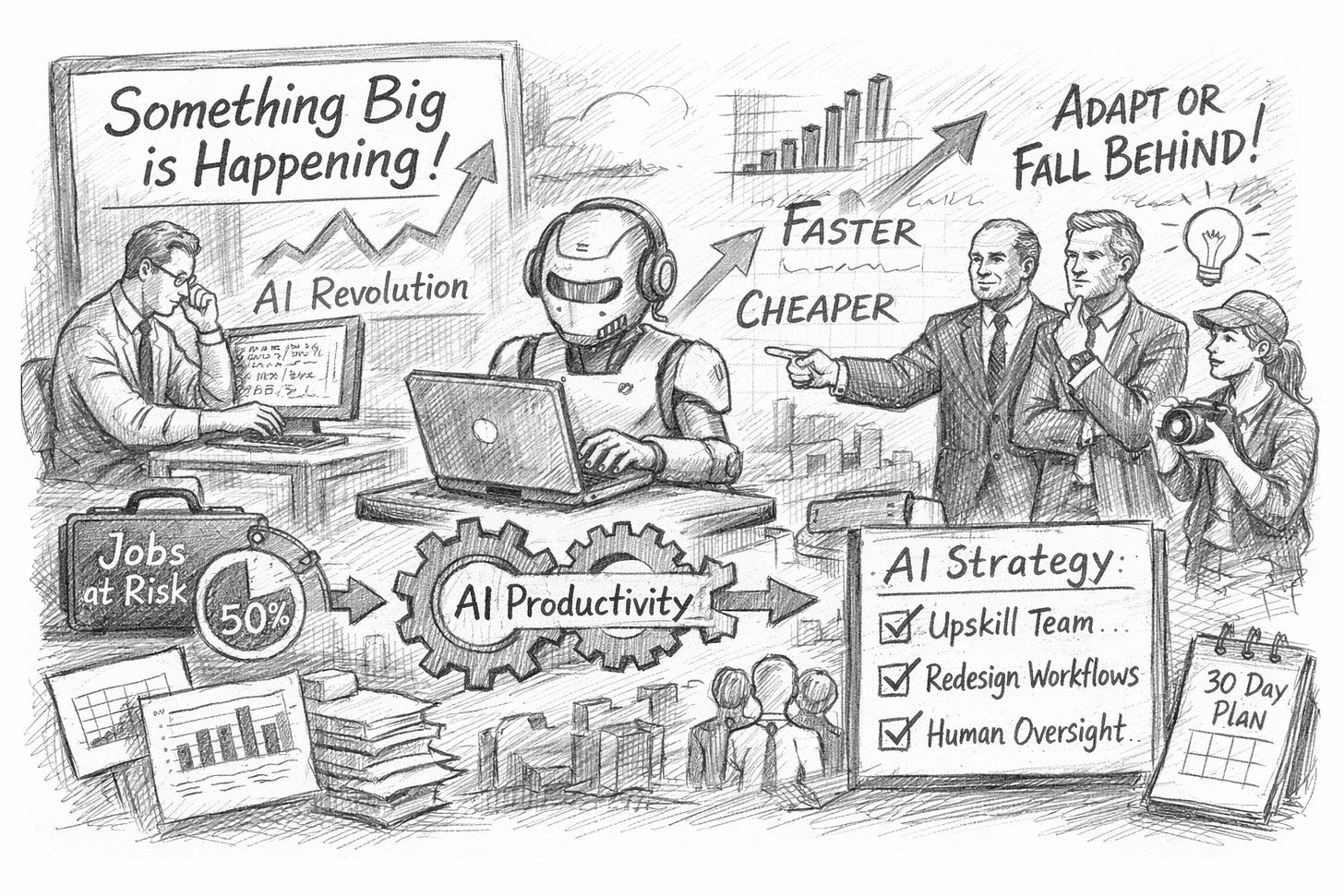

Matt Shumer’s viral AI essay argues that a step-change has already arrived, one that threatens screen-based jobs and rewards fast adopters worldwide.

What happened (the short version)

Matt Shumer (HyperWrite/OthersideAI) published an essay called “Something Big Is Happening”. It spread quickly on X and LinkedIn and was widely republished and discussed in major outlets.

His claim: new AI models released around 5 February 2026 felt like a sharp jump forward, big enough to change office work sooner than most people expect. (source: Matt Shumer)

What Shumer actually argued

He’s not saying “AI is good at writing emails”. He’s saying:

This is a turning point. If you haven’t tried recent tools, you’re underestimating what they can now do.

“Screen jobs” are first in line. If your work is mainly on a computer (docs, slides, code, analysis), AI can do big chunks of it. (source: www.ndtv.com)

The shock will be organisational, not technical. Firms that redesign work around AI will move faster and operate more cheaply than firms that don’t. (source: Inc.com)

He used AI to help write the essay. He says that fact supports his point: the tools are already “co-worker” level for many tasks. (source: Business Insider)

Why did it hit a nerve?

Because it follows a familiar pattern: a technology improves quietly, then suddenly feels obvious, and anyone who ignored it looks slow.

Shumer also used a COVID-style analogy (“people will be blindsided”), which made it more shareable and more divisive.

The strongest, most useful takeaway

Whether or not you buy the “bigger than COVID” framing, the practical message is solid:

AI is now a productivity multiplier. If your competitors use it properly, they can ship more work, faster, with fewer people.

That’s not a future trend. It’s a management decision.

Where critics think Shumer overreached

Several serious voices pushed back on the certainty and timelines:

Reliability is still the problem. Models can be brilliant and wrong in the same breath, so “replace humans” is often “replace parts of the workflow with heavy oversight”. (source: garymarcus.substack.com)

Not all knowledge work looks like software work. Some roles have messy context, accountability, regulation, and human trust built in. (source: Fortune)

Net: disruption is real, but job outcomes will vary by domain, and leadership choices will matter.

What to do next

For CEOs & C-level leaders: 7 no-nonsense moves

Pick 3 workflows, not 30 tools. Target areas where speed matters (sales proposals, customer support triage, finance reporting, code delivery).

Measure cycle time. If AI doesn’t cut turnaround time, it’s theatre.

Create an “AI operating model”. Who approves outputs? What’s logged? What can be automated? What must stay human?

Build a small “AI strike team”. 1–2 operators + a security/legal partner. Give them permission to break the old process.

Protect data properly. Assume staff will paste sensitive text into tools unless you set rules and provide safer options.

Train managers first. Most AI value dies in middle management because people don’t change how work flows.

Plan for role redesign, not layoffs on a slide. The winners will redeploy talent to higher-leverage work while competitors freeze.
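To make the “measure cycle time” move concrete, here is a minimal Python sketch that compares median turnaround before and after an AI rollout. It is an illustration under stated assumptions, not a method from Shumer’s essay: the function names, the 10% improvement threshold and the sample hours are all hypothetical.

```python
from statistics import median


def median_cycle_time(hours: list[float]) -> float:
    """Median turnaround, in hours, across completed work items."""
    if not hours:
        raise ValueError("need at least one completed work item")
    return median(hours)


def cycle_time_change(before: list[float], after: list[float]) -> float:
    """Percentage change in median cycle time; negative means work got faster."""
    baseline = median_cycle_time(before)
    if baseline == 0:
        raise ValueError("baseline median cycle time must be non-zero")
    current = median_cycle_time(after)
    return (current - baseline) / baseline * 100.0


def is_real_gain(change_pct: float, min_improvement_pct: float = 10.0) -> bool:
    """Hypothetical rule of thumb: only count it as a gain if the median
    cycle time drops by at least min_improvement_pct percent."""
    return change_pct <= -min_improvement_pct


# Hypothetical, illustrative numbers only: hours from request to delivered sales proposal.
before_ai = [30.0, 26.0, 41.0, 35.0, 28.0]
after_ai = [20.0, 18.0, 33.0, 22.0, 19.0]

change = cycle_time_change(before_ai, after_ai)
print(f"Median cycle time change: {change:.1f}%")  # prints "Median cycle time change: -33.3%"
print("Real gain" if is_real_gain(change) else "Theatre")
```

The median is used rather than the mean so that one unusually slow item doesn’t dominate the comparison; a team could just as easily plug in whichever turnaround metric it already tracks.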

For creators (writers, designers, marketers, video, solo founders)

Use AI to compress the boring parts: research briefs, outlines, first drafts, variations, repurposing, metadata, and angles.

Keep humans on judgment: taste, voice, story, brand risk, and what not to publish.

Build a repeatable pipeline: prompt library + templates + checklists. Your advantage is process, not novelty.

For everyone: the 30-day checklist

Week 1: audit your tasks and list what’s “screen-only” vs what needs real-world context.

Week 2: standardise 5 prompts that save you time every week.

Week 3: automate one handoff (brief → draft, notes → summary, raw data → narrative).

Week 4: set quality gates (fact checks, sources, human sign-off) so speed doesn’t become reputational damage.

Matt Shumer’s article went viral because it voiced a fear many people already have: office work is being rewritten.

You don’t need to agree with every prediction to act intelligently: adopt AI, redesign workflows, and keep humans in charge of judgment and accountability.