The junior cliff: two new signals that A.I. is hollowing out entry-level hiring

Executives have suspected it for months; the data is now catching up. Two independent releases this week point in the same direction: firms adopting generative A.I. are cutting back on junior hires while leaving senior headcount largely intact.

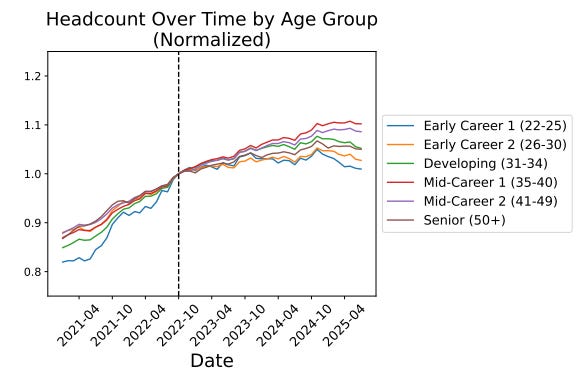

The first is a large-scale payroll study from Stanford’s Digital Economy Lab. Using monthly records on millions of U.S. workers since late 2022, the authors show that early-career workers aged twenty-two to twenty-five in the most A.I.-exposed occupations experienced a 13 per cent relative decline in employment, even after controlling for firm-level shocks. Employment among older workers in the same occupations grew six to nine per cent over the same period. The adjustment is happening through jobs, not pay, and is concentrated where A.I. automates rather than augments work. (paper: Stanford Digital Economy Lab)

Figure: From Stanford Digital Economy Lab

The second is a firm-level working paper, flagged by the authors this week, that links LinkedIn résumé and job-posting data across approximately 285,000 U.S. firms from 2015 to 2025. The headline: companies that have undertaken at least one A.I. project hired fewer juniors than otherwise-similar firms, with no meaningful reduction in senior hiring. In other words, adoption appears to compress the bottom of the pyramid while preserving experience at the top. Although this study is new and still in working-paper status, the direction of travel aligns with the payroll evidence. (paper: X (formerly Twitter))

Why it matters

This is a structural, not cyclical, shift in how knowledge work is organised. Generative A.I. substitutes for the low-variance, well-specified tasks that historically justified entry-level roles: first drafts, unit tests, QA passes, reconciliations, and summarisation. Seniors absorb orchestration and exception handling, while the machine takes on the rote work on which juniors used to train. The upshot is a pipeline risk: fewer rungs on the ladder now mean fewer experienced operators later. That risk is already macro-visible; the World Economic Forum’s 2025 survey finds that forty per cent of employers expect workforce reductions where A.I. can automate tasks, even as other roles are created. (World Economic Forum)

For boards and investors, the strategic question is not whether A.I. raises productivity—it does—but where the productivity dividend lands. The early evidence suggests firms are cashing in by trimming junior positions rather than by scaling teams to capture demand. That can look rational quarter to quarter and still be value-destructive over a five-year horizon if capability development stalls.

What sophisticated operators will do next

1) Build intentional “apprenticeship loops.” The Stanford paper’s nuance is critical: declines concentrate where A.I. automates; occupations where A.I. augments show entry-level growth. Treat this as a design variable. Redesign workflows so juniors own clearly scoped judgment work with A.I. as a copilot, not a black box. Make augmentation a KPI. (Stanford Digital Economy Lab)

2) Shift from headcount substitutes to capability multipliers. If a senior plus A.I. replaces three juniors today, that creates fragility. Counter by pairing each senior with rotating early-career analysts who document, evaluate and stress-test model outputs against ground truth. This keeps error-detection socialised and builds tacit knowledge that tools can’t encode.

3) Change how you measure talent formation. Track “time-to-autonomy” for new hires, not just vacancy fill times. Request a quarterly report on junior staff's exposure to live production decisions. If that metric worsens as A.I. adoption rises, you are quietly liquidating your future.

4) Rewire hiring signals. If entry-level requisitions fall, your campus pipeline will atrophy. Partner with external fellowships and apprenticeships to keep options open; insist on evaluated A.I. portfolios over tenure proxies. The Bureau of Labor Statistics (BLS) has already begun integrating A.I. into its projection frameworks—firms should mirror that forward-looking stance in workforce planning. (Bureau of Labor Statistics)

5) Incentivise augmentation in procurement. Tie internal chargebacks or capital approvals to proof that teams have shifted task mix toward human-in-the-loop augmentation (e.g., code reviews, reconciliations, escalation criteria), not pure automation.
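
The “time-to-autonomy” measure from item 3 can be made concrete with a small calculation. The Python sketch below is illustrative only: the `Hire` record, the `autonomous_on` sign-off date, and the cohort figures are all hypothetical, and each firm would need its own definition of when a new hire counts as autonomous.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Hire:
    """Hypothetical record for one early-career hire."""
    name: str
    start: date
    # Assumed definition: the date a manager first signed the hire off
    # to own a task end to end. None means not yet signed off.
    autonomous_on: Optional[date] = None


def days_to_autonomy(hire: Hire) -> Optional[int]:
    """Days from start date to sign-off, or None if not yet autonomous."""
    if hire.autonomous_on is None:
        return None
    return (hire.autonomous_on - hire.start).days


def median_time_to_autonomy(hires: List[Hire]) -> Optional[float]:
    """Median days to autonomy across hires who have been signed off."""
    days = [days_to_autonomy(h) for h in hires]
    completed = [d for d in days if d is not None]
    return median(completed) if completed else None


# Made-up cohorts, purely to show the comparison across intake years.
start_2023 = date(2023, 1, 9)
cohort_2023 = [
    Hire("A", start_2023, start_2023 + timedelta(days=120)),
    Hire("B", start_2023, start_2023 + timedelta(days=150)),
    Hire("C", start_2023),  # not yet signed off, so excluded from the median
]

start_2024 = date(2024, 1, 8)
cohort_2024 = [
    Hire("D", start_2024, start_2024 + timedelta(days=180)),
    Hire("E", start_2024, start_2024 + timedelta(days=210)),
]

for label, cohort in [("2023", cohort_2023), ("2024", cohort_2024)]:
    print(label, median_time_to_autonomy(cohort))
```

Because hires who have not yet been signed off drop out of the median, recent cohorts can look better than they are. A report built on this kind of calculation would probably want to show the share of each cohort still awaiting sign-off alongside the median.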

Policy that actually bites

If A.I. is compressing the bottom rung, policy should target training externalities. Tax credits tied to verifiable junior exposure to real tasks would be more effective than generic R&D credits. Public-private apprenticeships should be jointly governed by industry and institutions, with competency rubrics aligned to actual A.I. workflows (prompting standards, evaluation, data governance). Regulators can also tilt incentives by recognising augmentation in safety-critical domains (such as finance and health) and by auditing the “duty to train” alongside the “duty of care.”

The read for capital allocators

This is not a blanket “A.I. kills jobs” story. It is a composition story. The near-term winners will show operating leverage from A.I. while maintaining a credible bench strategy. Watch for disclosures that separate junior and senior hiring, report augmentation ratios by function, and quantify investments in apprenticeship. Firms that only show cost compression at the base without a plan to replenish skills are signalling future delivery risk.

The market is moving: two independent datasets, two methodologies, same direction. Leadership teams should assume the junior cliff is real—and design around it now.