The IVO Test: A Key to Assessing Generative AI for Business Solutions

Is Generative AI the Right Fit for Your Problem? The IVO Test Can Help You Decide

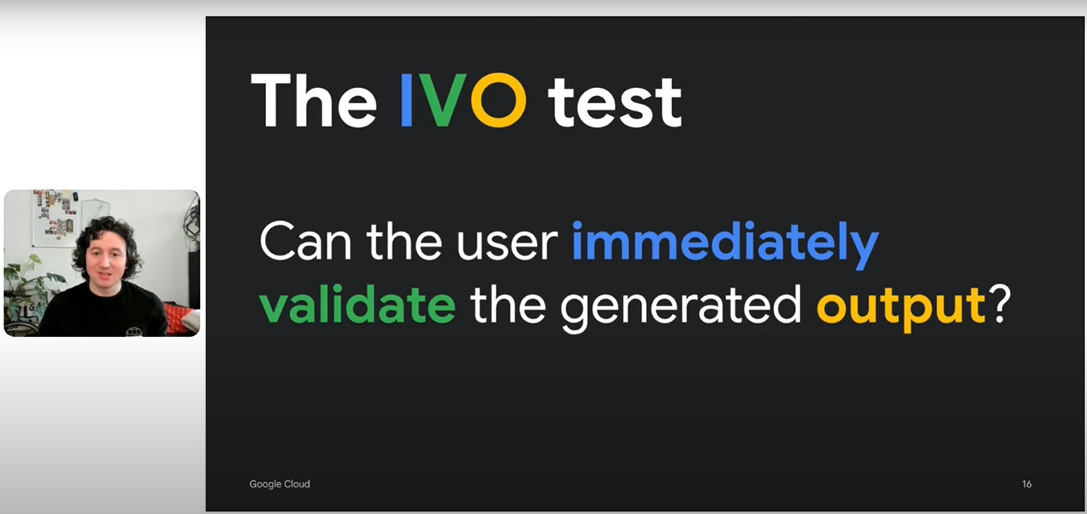

As generative AI spreads across industries, its potential to solve complex problems and streamline processes is widely touted. Yet rapid adoption has also exposed a critical need for clarity: how do we determine whether generative AI is the best fit for a specific problem? Enter the IVO Test (Immediate Validation Output Test), a straightforward method for gauging whether generative AI can produce the results needed for real-world tasks.

What is the IVO Test?

The IVO Test evaluates whether a human end user can immediately understand and verify an AI-generated output. It aims to ensure that outputs are relevant and actionable without extensive interpretation or additional rounds of data processing. Developed to fill a gap in AI assessment tools, the IVO Test helps decision-makers determine whether a generative model's results meet their business needs or whether another approach would better suit the problem at hand.

In simpler terms, the IVO Test ensures that when an AI system generates results, those results are instantly usable, building trust and minimizing friction in AI-human collaboration. Unlike more technical evaluations focusing on model accuracy alone, the IVO Test considers user experience paramount, aligning technology output with the immediate requirements of business decision-makers and their teams.

Source: Concept introduced by Zack Akil from Google Cloud

Why the IVO Test is Relevant for Generative AI

Generative AI models are powerful but often complex. Businesses can invest significant resources in these tools only to discover that the outputs, while technically correct, are too abstract or ambiguous for quick decision-making. The IVO Test addresses this usability gap by letting companies check whether generated content will fulfill their needs without further adjustment or clarification.

In many sectors, time is of the essence. Generative AI, therefore, must deliver not only high-quality but also highly relevant outputs that can be quickly validated. The IVO Test provides a streamlined evaluation framework, guiding businesses in:

Assessing the quality of AI outputs from a user-centric perspective.

Determining the interpretability and immediacy of AI-generated results.

Understanding the readiness of the tool for deployment within specific workflows.
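One way to make these three checks concrete is a small review record that a team fills in for each sampled output. The sketch below is illustrative only: the `IvoReview` fields, the `passes_ivo` helper, and the 30-second verification limit are assumptions made for this example, not part of the IVO Test as originally described.

```python
from dataclasses import dataclass


@dataclass
class IvoReview:
    """One reviewer's judgment of a single AI output (hypothetical rubric)."""
    quality_ok: bool          # output meets the reviewer's quality bar
    seconds_to_verify: float  # time the reviewer needed to confirm it
    fits_workflow: bool       # usable in the target workflow without rework


def passes_ivo(review: IvoReview, max_seconds: float = 30.0) -> bool:
    """True when the output was verified quickly and is usable as-is.

    The 30-second default is an arbitrary assumption; pick a limit
    that matches how fast your team needs to act on an output.
    """
    return (
        review.quality_ok
        and review.seconds_to_verify <= max_seconds
        and review.fits_workflow
    )


def pass_rate(reviews: list[IvoReview], max_seconds: float = 30.0) -> float:
    """Share of reviewed outputs that pass; 0.0 when there are no reviews."""
    if not reviews:
        return 0.0
    return sum(passes_ivo(r, max_seconds) for r in reviews) / len(reviews)
```

A low pass rate on a representative sample suggests the outputs need too much interpretation for the workflow in question, whatever the model's accuracy on paper.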

The Business Case for the IVO Test

Incorporating the IVO Test can significantly benefit organizations seeking to integrate AI into their operations. By checking AI outputs against business objectives early, leaders can make better-informed technology investments and avoid costly missteps. With generative AI applications in customer service, marketing, content generation, and more, the IVO Test helps ensure that teams receive outputs that make sense immediately, fostering a smoother integration of AI within daily operations.

Consider a scenario where a marketing team uses generative AI to create campaign assets. Without the IVO Test, they might face unclear or off-brand content that requires additional resources to adjust. With the IVO Test, however, the team could validate whether the AI-generated outputs align with their brand voice and can be used immediately, leading to faster campaign launches and improved efficiency.

Practical Tips for Implementing the IVO Test

Reframe Outputs for User Clarity: Encourage AI systems to present findings in terms that are directly relatable and easy to grasp, removing complex jargon that may confuse end users.

Pre-Output Grounding: The AI system should validate information against a well-defined knowledge base before presenting outputs, ensuring accuracy and relevance within a real-world context.

Transparent User Interfaces: Understanding how an AI reached its conclusion can build trust for business users. A straightforward, user-friendly interface that reveals this decision path can improve user confidence and foster more robust adoption.
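The grounding tip above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `KNOWLEDGE_BASE` contents, the `GroundingResult` type, and the `check_grounding` function are hypothetical names invented here, and a real system would extract claims from model output and match them far less literally than an exact dictionary lookup.

```python
from dataclasses import dataclass

# Hypothetical knowledge base: fact name -> approved value.
KNOWLEDGE_BASE = {
    "return_window_days": "30",
    "support_email": "help@example.com",
}


@dataclass
class GroundingResult:
    grounded: bool          # True only if every claim matched the knowledge base
    unsupported: list[str]  # names of claims that were missing or contradicted


def check_grounding(claims: dict[str, str], kb: dict[str, str]) -> GroundingResult:
    """Compare each drafted claim with the knowledge base before release.

    A claim is unsupported if its name is absent from the knowledge base
    or its value differs from the approved one.
    """
    unsupported = [name for name, value in claims.items() if kb.get(name) != value]
    return GroundingResult(grounded=not unsupported, unsupported=unsupported)
```

Returning the list of unsupported claims, rather than a bare pass or fail, also serves the transparency tip: the interface can show users exactly which statements could not be confirmed.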

Moving Forward: Building Trust and Efficiency with the IVO Test

As generative AI continues to reshape industries, it’s critical to prioritize user-first design in AI applications. The IVO Test is a practical measure of usability and a means to promote responsible AI adoption. It aligns technology solutions with user needs by helping businesses choose AI models that provide immediate, reliable outputs, driving efficiency and trust in AI-powered workflows.

Integrating the IVO Test into your AI strategy could mean the difference between an unused AI tool and one that actively enhances productivity and decision-making. By ensuring generative AI aligns with immediate operational needs, leaders can foster more robust, more productive relationships between human teams and AI systems, paving the way for impactful innovation across all sectors.