Stop Chasing AI Detectors and Humanizers like Quillbot

Search trends show rising anxiety about AI, authenticity, and who is really in control.

This week’s fastest-rising searches are not about new models or breakthroughs.

They are about detection, rewriting, and legitimacy.

Searches for tools and terms such as Quillbot, AI Humanizer, Humanizer, Zero ChatGPT, ZeroGPT, and ChatGPT Detector are climbing together, alongside queries for ChatGPT Plus, OpenAI, OpenAI ChatGPT, and even the ChatGPT website.

That combination is not accidental. It signals a behavioural shift.

People are no longer asking only, “How do I use AI?”

They are asking, “How do I prove I didn’t, or make it look like I didn’t?”

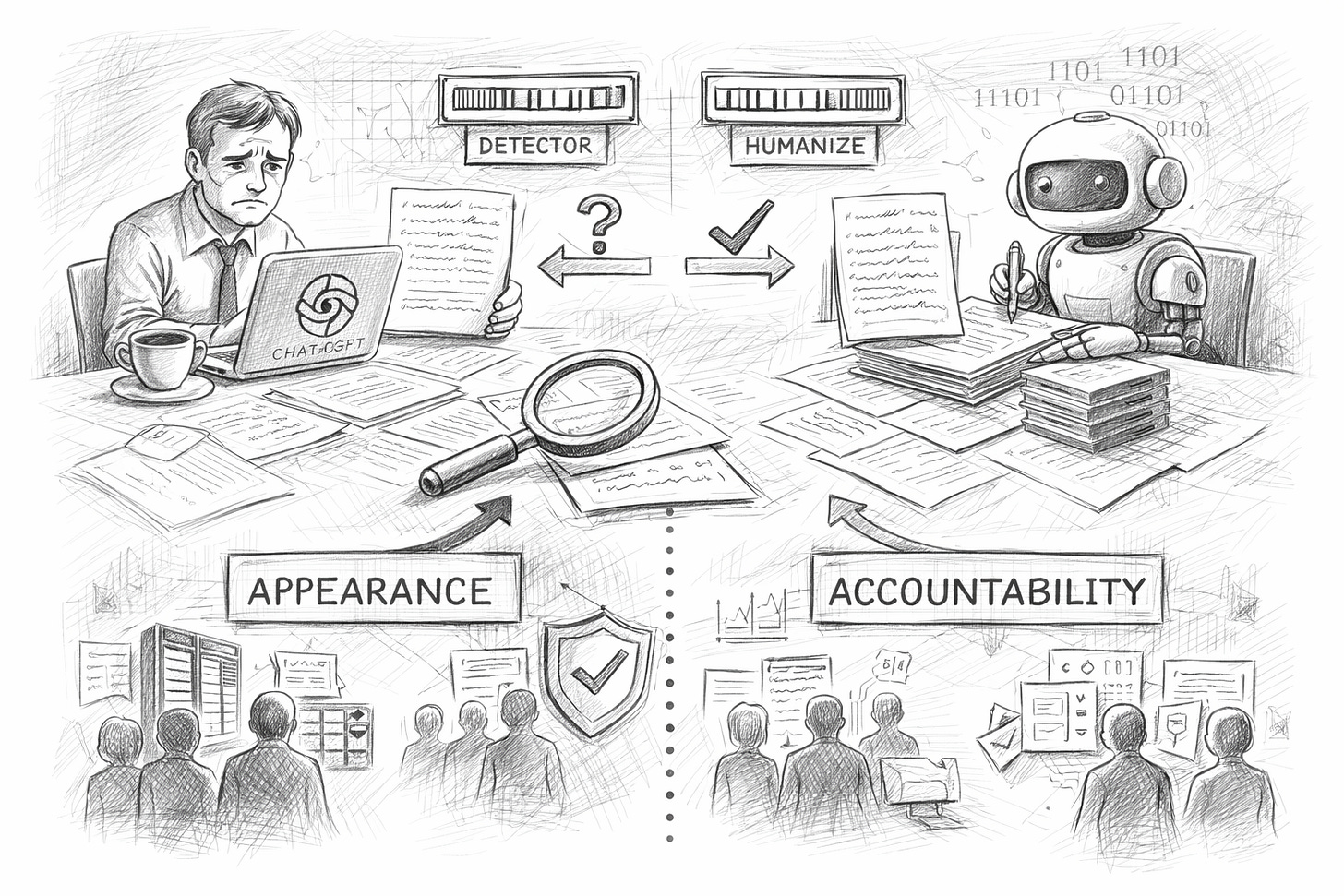

The rise of “humanizers” versus “detectors”

On the one hand, demand is growing for tools branded as AI humanizers or simply humanizers.

Their promise is straightforward: rewrite AI-generated text so it feels more natural, less patterned, less machine-like.

On the other hand, searches for ChatGPT detectors, Zero ChatGPT, and ZeroGPT reflect institutions and platforms trying to determine whether content originated from models such as OpenAI's ChatGPT.

This creates a predictable arms race:

Humanizers optimise for stylistic randomness.

Detectors optimise for spotting statistical patterns.

Content quality becomes secondary to passing the test.

From a business perspective, this is not a sustainable equilibrium. It rewards surface optimisation, not reliability or truth.

Why rewriting tools are booming

Quillbot's growth is especially telling. Rewriting and paraphrasing are becoming default workflow steps, not exceptions.

Sometimes this is practical:

second-language writers improving fluency

teams standardising tone

marketers iterating drafts quickly

But it also normalises a culture in which authorship blurs and accountability weakens.

When everything is “assisted”, tracing intent and responsibility becomes harder — precisely when trust matters most.

Detection is the wrong question

Organisations are increasingly focused on whether a text was written by a human or by AI.

But that is rarely the business-critical question.

The real questions are:

Is this information correct?

Can it be audited?

Was it reviewed?

Do we understand the system that produced it?

A perfect ChatGPT detector would not solve governance, compliance, or reputational risk.

It would only label the source, not validate the outcome.

In regulated or high-stakes environments, detection is a weak proxy for safety.

Why interest in OpenAI and ChatGPT Plus is rising in parallel

Alongside the interest in detection tools, searches for OpenAI, ChatGPT Plus, OpenAI ChatGPT, and the official ChatGPT website are also increasing.

That reflects another dynamic. When uncertainty rises, people look for:

official sources

paid tiers with perceived stability

clearer capability boundaries

In enterprise contexts, this often leads to standardisation around fewer tools, tighter policies, and clearer ownership of AI usage.

Not because the tools are perfect, but because uncontrolled experimentation scales risk faster than it scales value.

What this means for strategy, not just content

This trend is not limited to education or marketing. It is showing up in every operational decision that involves AI.

We are moving into a phase where AI is no longer an optional productivity layer.

It is becoming part of core workflows, customer interactions, and internal decision systems.

At that point, the challenge is not:

“Can we generate this?”

It is:

“Can we defend this, explain it, and correct it when it fails?”

Humanizers and detectors do not answer those questions.

System design, evaluation frameworks, and human oversight do.

The internet may be debating whether text was written by a human or a machine.

Organisations, however, are heading towards a more consequential divide:

teams optimising for appearance

versus teams building for accountability

The second group will move more slowly at first.

They will also make fewer catastrophic mistakes.

And in the long run, that is where trust — and competitive advantage — will concentrate.

AI adoption is no longer about writing faster.

It is about operating responsibly at scale.

This week’s trends are not a fad.

They are early warning signals of a governance problem the industry has not yet fully accepted.