S&P 500 AI Strategy

Enterprise AI is shifting from pilots to production. Winners build infrastructure, governance, and agent control before chasing applications.

Enterprise AI has moved on.

For the last few years, most big companies treated AI as a set of experiments: a pilot here, a “copilot” feature there, a few integrations, a press release. That era is ending. The next era is harder: putting AI into real workflows, with real customers, under real regulation, with real consequences.

A recent CB Insights + Human[X] report makes this shift clear. It tracks S&P 500 activity across partnerships, investments, acquisitions, hiring, and earnings calls from 2023 to 2025.

The headline is not “everyone is doing AI.” It’s “a few firms are shaping the whole market, and the rest are still building the basics.”


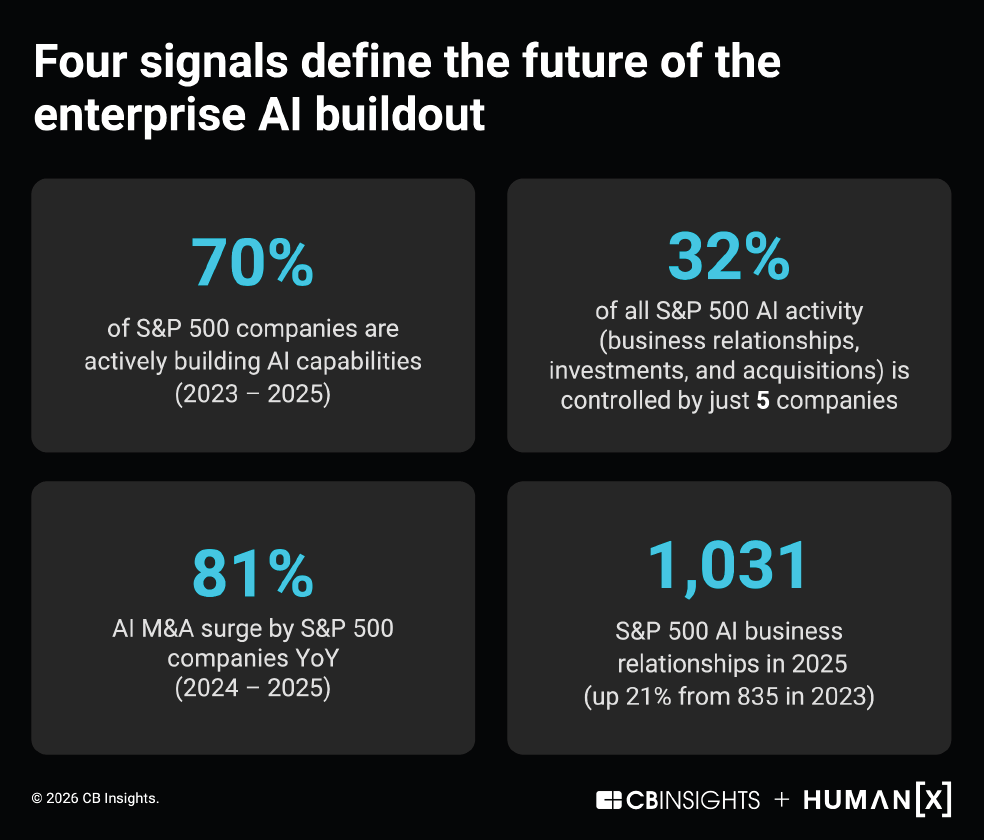

The market looks broad, but power is concentrated

The report finds that nearly 70% of the S&P 500 showed some external AI activity over the period. That sounds like a flood. But zoom in, and you see the real shape: five companies (NVIDIA, Microsoft, Amazon, Alphabet, Salesforce) account for 32% of all documented AI activity across relationships, investments, and acquisitions.

This matters because it tells you what “AI leadership” actually means in practice.

It is not just about using AI internally. It is about controlling the ecosystem: distribution, compute, models, tooling, and the deal flow around them. In other words, some firms are building the rails everyone else will ride.

The report also notes something easy to miss: 30% of the S&P 500 had no documented external AI activity, yet many of them are still hiring heavily for AI roles and discussing AI on earnings calls. So the outside signals help you see visible leaders, but they do not capture the full internal buildout.

Simple takeaway: don’t confuse “no partnerships announced” with “no AI strategy.” But do assume that the companies shaping the ecosystem will compound advantage faster.

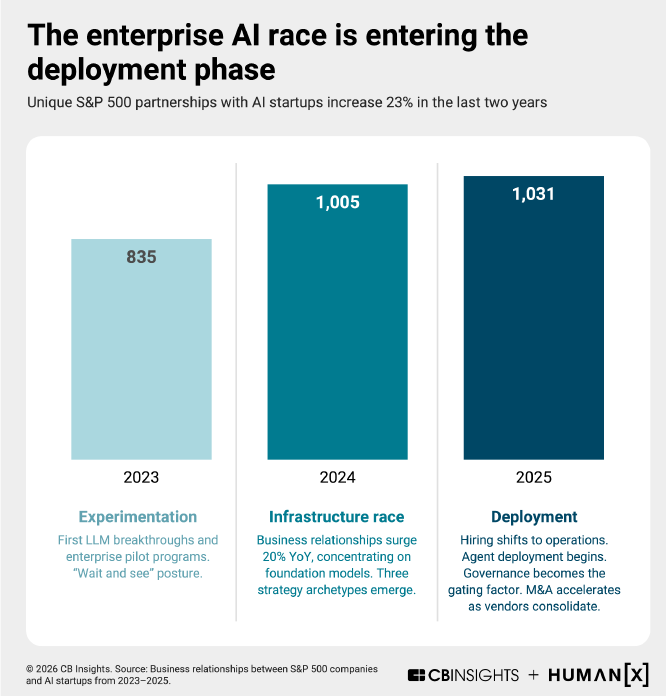

Partnerships are rising faster than revenue

One of the cleanest signals in the report is partnership growth. Startup partnerships involving S&P 500 companies rose from 835 in 2023 to 1,031 in 2025, an increase of roughly 23%.

But most of these partnerships are not the kind your CFO loves.

Nearly 68% are “ecosystem-building” relationships: integrations, pilots, and co-marketing. Only about 12% are client relationships, and 12% are vendor relationships.

That mix tells you the market is still in an “alignment” phase. Companies are deciding:

Which platforms they will build around

Which tools they will standardise on

Which model providers they trust

How they will connect AI to data, security, and operations

This also explains why partnership hubs matter. The report highlights NVIDIA, Microsoft, and Amazon as the main hubs. NVIDIA's advantage is tied to how deeply it is embedded in the infrastructure layer, while Microsoft and Amazon extend their reach through cloud distribution and enterprise access.

Simple takeaway: most partnerships today are not “go-to-market.” They’re “pick your stack.”

Money still flows to infrastructure, not apps

If you want to know what enterprises really believe, watch where they place capital.

The report shows enterprise investment concentrating in infrastructure and model-layer companies: Databricks, OpenAI, CoreWeave, Anthropic, xAI, Cohere, Scale, and Groq. Infrastructure/model plays account for over half of enterprise investment rounds.

It even draws a useful analogy: this pattern resembles the earlier cloud buildout, where money went first to the enabling layers, and applications scaled later.

This has a strategic implication that many boards still underestimate:

If your AI strategy is “buy applications,” you are arriving late to the part of the market where defensibility will sit. The durable bottlenecks are still compute, data plumbing, orchestration, evaluation, and governance.

Simple takeaway: in enterprise AI, “boring infrastructure” is the growth story.

The real shift: from capability to control

Here is the most important part of the report, and the part many companies are least prepared for.

As AI moves from assistive tools to more autonomous systems (agents), the question changes from:

“What can AI do?”

to:

“How do we control what it does?”

The report highlights rising interest in governance and agent-related markets, including the “Know Your Agent” (KYA) concept: identity, permissions, monitoring, and control for agents operating in production environments.

That framing lines up with where standards and best practices are going:

NIST’s AI Risk Management Framework gives a practical structure for identifying and managing AI risks across the lifecycle (not just at launch). (nist.gov)

ISO/IEC 42001 sets requirements for an AI management system, including risk assessment, impact assessment, lifecycle management, and supplier oversight. (ISO)

In plain terms, “AI governance” is becoming operational work, not policy work.

Simple takeaway: as agents grow, governance becomes part of the deployment stack, like security or finance controls.

A practical playbook for the next 12 months

If you strip away the hype, the report points to a very practical plan. Here is what tends to work in companies that are moving from pilots to production.

1) Treat AI like a production system, not a feature

Pilots fail because they stay “special.” Production wins when AI becomes normal IT.

Do three basic things:

Put AI behind the same release gates as software (testing, approvals, rollback plans)

Measure performance over time (drift, error rates, cost per task)

Assign an owner with operational responsibility (not just innovation responsibility)
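To make "measure performance over time" concrete, here is a minimal sketch in Python. It is an illustration only: the `TaskRecord` schema, the weekly grouping, and the 0.05 threshold are assumptions, and it treats a rising error rate as a simple proxy for drift rather than measuring drift in model inputs or outputs directly.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class TaskRecord:
    """One completed AI task (hypothetical schema)."""
    week: int
    succeeded: bool
    cost_usd: float


def weekly_summary(records):
    """Group records by week and compute error rate and mean cost per task."""
    by_week = {}
    for record in records:
        by_week.setdefault(record.week, []).append(record)
    return {
        week: {
            "error_rate": sum(not r.succeeded for r in rows) / len(rows),
            "cost_per_task": mean(r.cost_usd for r in rows),
        }
        for week, rows in sorted(by_week.items())
    }


def error_rate_drifted(summary, baseline_week, current_week, max_increase=0.05):
    """Flag drift when the error rate rises more than max_increase over baseline.

    The 0.05 default is an illustrative threshold, not a recommendation.
    """
    increase = (
        summary[current_week]["error_rate"]
        - summary[baseline_week]["error_rate"]
    )
    return increase > max_increase
```

A check like this could run on a schedule and alert the operational owner, which ties the measurement to the ownership point above.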

2) Build your “agent perimeter”

If you are deploying agents (or plan to), you need a perimeter: identity, permissions, audit logs, and monitoring.

A simple starting checklist:

Unique identity for each agent (and for each tool it can use)

Least-privilege permissions (what it can access, change, spend)

Full traceability (what it saw, what it did, why it did it)

Continuous evaluation (before and after deployment)

This is exactly the kind of control layer the report is signalling with KYA and governance markets.
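The checklist above can be sketched in a few lines of Python. This is a toy under stated assumptions, not a reference design: `AgentIdentity`, `AgentPerimeter`, the tool names, and the spend limit are all hypothetical, and a real deployment would sit on top of an existing identity and logging system. It covers three of the four items (identity, least privilege, traceability); continuous evaluation is out of scope here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    """Unique identity plus least-privilege grants for one agent (hypothetical)."""
    agent_id: str
    allowed_tools: frozenset
    spend_limit_usd: float


@dataclass
class AgentPerimeter:
    """Checks every tool call against the agent's grants and records the decision."""
    audit_log: list = field(default_factory=list)
    spent_usd: dict = field(default_factory=dict)

    def authorize(self, agent, tool, cost_usd, reason):
        already_spent = self.spent_usd.get(agent.agent_id, 0.0)
        if tool not in agent.allowed_tools:
            decision = "denied: tool not permitted"
        elif already_spent + cost_usd > agent.spend_limit_usd:
            decision = "denied: spend limit exceeded"
        else:
            decision = "allowed"
            self.spent_usd[agent.agent_id] = already_spent + cost_usd
        # Log allowed and denied calls alike, so the trail shows
        # what the agent attempted, not only what it did.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent.agent_id,
            "tool": tool,
            "cost_usd": cost_usd,
            "reason": reason,
            "decision": decision,
        })
        return decision == "allowed"


# Example: a ticket-triage agent that may search tickets but not issue refunds.
triage_agent = AgentIdentity("triage-bot-01", frozenset({"search_tickets"}), 1.0)
perimeter = AgentPerimeter()
perimeter.authorize(triage_agent, "search_tickets", 0.10, "classify new ticket")
perimeter.authorize(triage_agent, "issue_refund", 0.00, "customer asked for refund")
```

The design choice worth noting is that denials are logged too: an agent repeatedly attempting a tool it has no grant for is itself a monitoring signal.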

3) Standardise your stack before you scale spend

Partnerships are rising, but most are still ecosystem-building. That means many firms haven’t locked in their stack.

Your job is to reduce variance:

Choose a small set of approved model endpoints

Choose one orchestration pattern (so teams don’t invent ten)

Define how data is accessed (and what never leaves the boundary)

Decide how you observe systems (metrics, logs, red-teaming outputs)

The fastest way to burn money is to let every business unit buy its own AI universe.
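One way to make a standard stack enforceable rather than aspirational is to express it as a policy that requests are checked against. The Python sketch below assumes a hypothetical `StackPolicy` with made-up endpoint and field names; it only shows the shape of the check, covering the approved-endpoint and data-boundary items from the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StackPolicy:
    """Approved model endpoints and data fields that must stay inside the boundary."""
    approved_endpoints: frozenset
    restricted_fields: frozenset

    def check_request(self, endpoint, payload_fields):
        """Return a list of policy problems; an empty list means the request passes."""
        problems = []
        if endpoint not in self.approved_endpoints:
            problems.append(f"endpoint not approved: {endpoint}")
        leaked = sorted(self.restricted_fields & set(payload_fields))
        if leaked:
            problems.append("restricted fields in payload: " + ", ".join(leaked))
        return problems


# Hypothetical policy: two approved endpoints, two fields that never leave.
policy = StackPolicy(
    approved_endpoints=frozenset({"internal-general", "internal-code"}),
    restricted_fields=frozenset({"customer_ssn", "card_number"}),
)
issues = policy.check_request("team-x-experimental", ["ticket_text", "card_number"])
```

A shared check like this gives every business unit the same answer to "can I send this there?", which is the variance reduction the section argues for.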

4) Assume “infrastructure first” in your deal radar

The report shows capital and activity clustering at the infrastructure and model layers.

So if you are in corporate development or strategy, flip the usual scanning order:

Governance and control (audit, evaluation, permissions, monitoring)

Orchestration and workflow tooling

Data and inference infrastructure

Vertical applications (only once the above is stable)

This is where M&A may open up “below the biggest winners,” as the report suggests, especially in governance and infrastructure enablers.

5) Make ROI easier by starting with low-risk, high-volume work

The report’s “looking ahead” section is blunt: the bottleneck is shifting from access to AI to operationalising it inside real workflows.

So pick work that is:

Repeatable (high volume)

Observable (clear success metrics)

Contained (limited blast radius)

Easy to revert (humans can take over fast)

Examples: internal knowledge retrieval, ticket triage, document drafting with approval gates, forecasting support with human sign-off.
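The four criteria can be turned into a simple screening step. The sketch below is one possible reading in Python: the `Candidate` fields, the example workflows, and the 1,000-tasks-per-month floor are assumptions for illustration, not figures from the report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A workflow being considered for AI deployment (hypothetical fields)."""
    name: str
    monthly_volume: int          # repeatable: how often the task occurs
    has_clear_metric: bool       # observable: success can be measured
    limited_blast_radius: bool   # contained: a mistake stays local
    human_fallback: bool         # easy to revert: people can take over fast


def shortlist(candidates, min_monthly_volume=1000):
    """Keep candidates that meet all four criteria, highest volume first.

    The volume floor is an illustrative default; set it for your own context.
    """
    qualifying = [
        c for c in candidates
        if c.monthly_volume >= min_monthly_volume
        and c.has_clear_metric
        and c.limited_blast_radius
        and c.human_fallback
    ]
    return sorted(qualifying, key=lambda c: c.monthly_volume, reverse=True)


candidates = [
    Candidate("ticket triage", 40000, True, True, True),
    Candidate("internal knowledge retrieval", 12000, True, True, True),
    Candidate("autonomous pricing changes", 5000, True, False, False),
]
first_wave = [c.name for c in shortlist(candidates)]
```

Requiring all four criteria, rather than scoring and summing them, is deliberate in this sketch: a high-volume task with a large blast radius should not make the first wave on volume alone.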

Insight

Many companies think the AI race is about picking the “best model.”

The report suggests something different: the next advantage comes from building the systems that make AI safe, governable, and scalable inside the enterprise, while everyone else is still announcing pilots.

Models will keep changing. The control plane is what will stick.

And that is why the enterprise AI buildout is becoming less like buying software, and more like building a modern operating system for the firm.

Key sources: CB Insights + Human[X] report on enterprise AI buildout (CB Insights), NIST AI RMF (nist.gov), ISO/IEC 42001 (ISO)
