OpenAI Frontier: the enterprise agent platform that changes the competitive map, and why Google slid 7%+

Frontier is OpenAI’s attempt to become the enterprise “operating layer” for agentic work

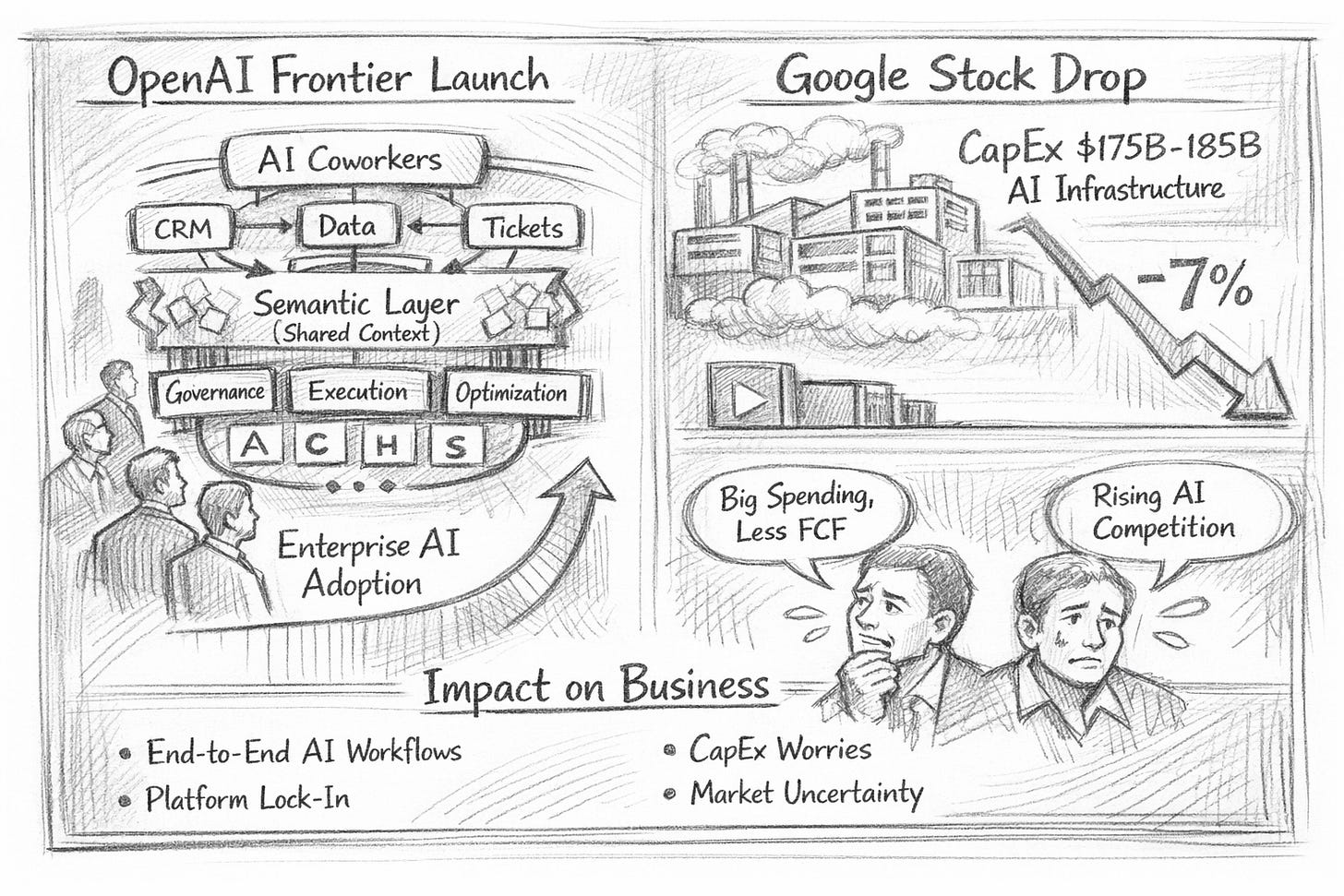

OpenAI just launched OpenAI Frontier (Feb 5, 2026): a platform aimed at helping enterprises build, deploy, and manage “AI coworkers” that can execute end-to-end workflows across real business systems—not just chat about them. (source: openai.com)

At nearly the same moment, Alphabet (Google) shares fell sharply, touching an intraday low roughly 7.9% below the prior close. The move was driven primarily by investor reaction to much higher-than-expected 2026 capital expenditure guidance tied to AI infrastructure build-out (and its near-term free-cash-flow implications).

What OpenAI Frontier actually is

Frontier is OpenAI’s attempt to become the enterprise “operating layer” for agentic work—a standardised way to connect your company’s systems, govern access, run agents safely, and continuously improve them.

The key primitives

Shared business context (the “semantic layer”)

Frontier connects data warehouses, CRMs, ticketing tools, and internal apps so agents can operate with a consistent understanding of how your business works and where truth lives. OpenAI explicitly frames this as a semantic layer for the enterprise that all AI coworkers reference.

An agent execution environment (where work actually happens)

Frontier agents can reason over data, use tools, run code, and work with files inside an “open agent execution environment.” They can run across local environments, your cloud, or OpenAI-hosted runtimes.

Performance evaluation + optimisation loops

Frontier includes built-in mechanisms to evaluate what agents do and improve quality over time, with the explicit metaphor of “managing” coworkers so good behaviours stick.

Ecosystem strategy (partners, not just OpenAI apps)

OpenAI is positioning Frontier as an open standards platform and is launching with a Frontier Partners cohort (Abridge, Clay, Ambience, Decagon, Harvey, Sierra) to build and deploy enterprise solutions.

Why this matters: impact on how enterprises will run work

1) Agents move from “pilots” to “production workflows”

Most enterprises have plenty of AI demos—and a graveyard of pilots—because each use case becomes a bespoke integration and governance project. Frontier bets that if shared context + execution + evaluation are standardised, deploying the 10th agent looks more like the 2nd, not like starting over.

2) The new moat is context + governance, not “best model”

Model quality still matters, but Frontier is effectively saying the bigger bottleneck is operationalising intelligence:

where data lives and what it means,

who/what can access it,

how actions are executed and audited,

and how performance is improved continuously.
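To make the access and audit bullets above a little more concrete, here is a minimal Python sketch of a permission check that logs every decision. It is an illustration under stated assumptions, not Frontier's API: `Policy`, `AuditLog`, `authorize`, and the agent and action names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Policy:
    # Hypothetical policy: actions each agent may take, and actions needing human sign-off.
    allowed: dict
    needs_approval: set = field(default_factory=set)


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, agent: str, action: str, decision: str) -> None:
        # Append one timestamped entry per authorization decision.
        self.entries.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "decision": decision,
        })


def authorize(policy: Policy, log: AuditLog, agent: str, action: str,
              approved_by: Optional[str] = None) -> bool:
    """Return True if the action may run; every decision is logged."""
    if action not in policy.allowed.get(agent, set()):
        decision = "denied"
    elif action in policy.needs_approval and approved_by is None:
        decision = "pending_approval"
    else:
        decision = "allowed"
    log.record(agent, action, decision)
    return decision == "allowed"


# Hypothetical example: a support agent that can read tickets freely
# but needs a human approver before issuing refunds.
policy = Policy(
    allowed={"support-agent": {"read_ticket", "issue_refund"}},
    needs_approval={"issue_refund"},
)
log = AuditLog()

can_read = authorize(policy, log, "support-agent", "read_ticket")
can_refund = authorize(policy, log, "support-agent", "issue_refund")
can_refund_signed = authorize(policy, log, "support-agent", "issue_refund",
                              approved_by="ops-lead")
can_delete = authorize(policy, log, "support-agent", "delete_account")
```

The point of the sketch is that the denied and pending decisions land in the same log as the allowed ones, so the log doubles as the audit trail.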

3) Network effects and vendor gravity

Frontier’s “semantic layer for the enterprise” creates a potential platform gravity dynamic: once many applications and agents plug into the same shared context, the cost of switching increases and the ecosystem deepens. OpenAI is explicitly enabling OpenAI agents, your agents, and third-party agents to benefit from the same context layer.

4) Org design shifts: “AI coworkers” implies new management muscle

If Frontier works as advertised, it accelerates a shift where leaders must manage:

agent portfolios (what work is delegated),

risk boundaries (permissions, separation of duties),

KPIs for automated work (quality, latency, cost, escalation rates),

and change management (humans + agents collaborating).
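The KPI bullet above (quality, latency, cost, escalation rates) can be sketched as a small roll-up over per-task records. This is a rough illustration only: the `AgentRun` fields and the sample numbers are hypothetical, and a real deployment would pull these from whatever logging the platform exposes.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class AgentRun:
    # Hypothetical record of one completed agent task.
    passed_review: bool   # did the output pass a quality check?
    latency_s: float      # wall-clock time to finish, in seconds
    cost_usd: float       # spend attributed to this task
    escalated: bool       # was the task handed off to a human?


def summarize(runs):
    """Aggregate per-task records into the four KPIs named in the text."""
    if not runs:
        raise ValueError("no runs to summarize")
    n = len(runs)
    return {
        "quality_rate": sum(r.passed_review for r in runs) / n,
        "avg_latency_s": mean(r.latency_s for r in runs),
        "avg_cost_usd": mean(r.cost_usd for r in runs),
        "escalation_rate": sum(r.escalated for r in runs) / n,
    }


# Made-up sample data, purely to show the shape of the calculation.
runs = [
    AgentRun(True, 10.0, 0.25, False),
    AgentRun(True, 20.0, 0.25, False),
    AgentRun(False, 30.0, 0.50, True),
    AgentRun(True, 20.0, 1.00, False),
]
kpis = summarize(runs)
```

Tracking these per workflow rather than per agent makes it easier to compare an automated path against the human baseline it replaces.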

Practical implications for C-suite decision makers (next 90 days)

Pick 2–3 workflows where “end-to-end” matters

Good candidates: customer support triage → resolution, sales ops → quote → contract, IT ticket → remediation, finance close tasks, HR onboarding. Frontier is designed for workflows that span multiple systems.

Treat “shared context” as a first-class program (not an IT afterthought)

The semantic layer is the linchpin. If your data definitions, lineage, and ownership are fuzzy, agents will amplify the mess.

Stand up an Agent Governance model

Even if Frontier provides controls, you still need policy: approval gates for actions, audit requirements, incident response, red-teaming, and clear accountability (who owns outcomes when an agent acts).

Benchmark build vs buy vs partner

OpenAI is explicitly pushing an ecosystem approach through Frontier Partners and “open standards.” That’s a signal to compare:

internal build (platform + governance),

Frontier (platform) + internal app teams,

Frontier + partner-built vertical solutions.

Why Google dropped almost 8%: the real driver (and the secondary narrative)

Primary driver: investor shock at AI capex guidance

Alphabet’s move was mainly about money and timing, not a sudden belief that Google “can’t do AI.”

Across coverage of Google’s latest results and outlook, the common thread is:

Alphabet flagged 2026 capex of ~$175B–$185B (roughly double 2025’s spend) to fund AI infrastructure,

markets worried about free cash flow compression and the burden of depreciation before AI returns fully show up,

leading to a sharp selloff (mid-single digits on the day), with the stock trading roughly 7.9% below the prior close at its intraday low.

Secondary narrative: competitive pressure is rising, and markets are pricing in uncertainty

Frontier adds to a broader investor concern: as AI shifts user behaviour and enterprise spend, incumbents face execution risk (how fast can they monetise AI while funding massive infrastructure?). That narrative can magnify reactions to big capex numbers—but it’s best understood as an accelerant, not the root cause, of this particular drop.

The takeaway

OpenAI Frontier is less a single product launch and more a claim on the enterprise agent control plane: shared context + secure execution + continuous improvement + partner ecosystem. If it gains traction, it could standardise how “work” gets automated across companies—creating platform leverage similar to what cloud platforms achieved over the last decade. (openai.com)

Meanwhile, Google’s intraday drawdown of nearly 8% is a reminder that AI leadership now requires enormous, sustained investment, and public markets will punish even strong earnings when the near-term cash flow story gets shakier.

OpenAI Frontier is a big move - basically saying 'we're not just models, we're your enterprise AI infrastructure.' I saw Google drop 7% on this news and honestly it's not just competitive pressure, it's market reality. When I analyzed the agent landscape for February's market data, the hyperscaler vs startup divide was already massive (https://thoughts.jock.pl/p/ai-agent-landscape-feb-2026-data). The companies with distribution and compute control are winning even when their product isn't always better. Frontier changes the game because it offers enterprise-grade deployment without the $600/hour Opus bills. Curious if you've seen actual enterprise adoption or if this is still pre-launch hype?

Brilliant breakdown of what Frontier actually means for enterprise architecture. The semantic layer framing is spot on: basically, OpenAI is betting that data context becomes the new moat rather than just model quality. I've seen this playbook before with data warehouses: once everyone's agents plug into the same context layer, switching costs explode exponentially. The really interesting bit is how this forces orgs to clean up their data governance mess fast.