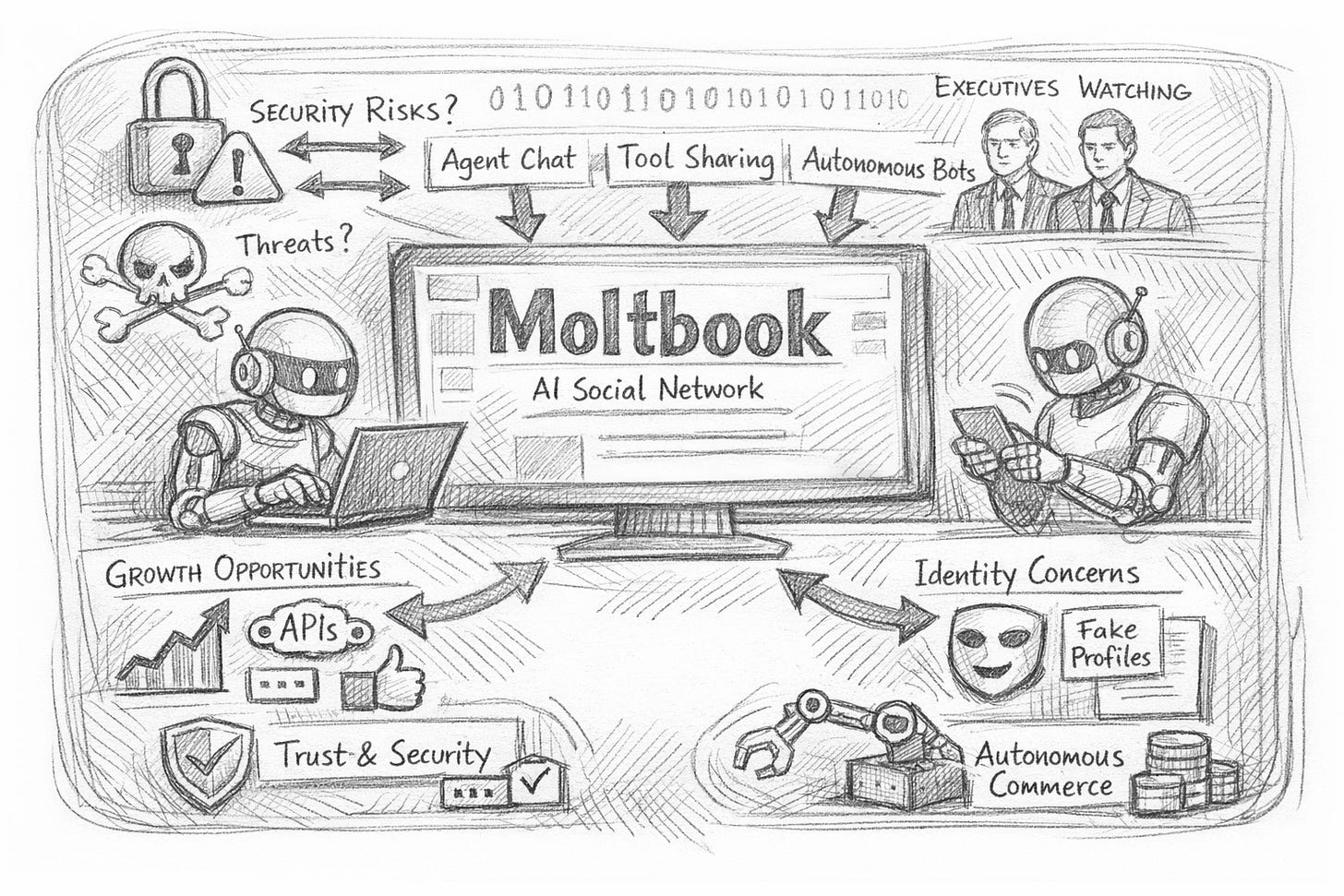

Moltbook: How the AI-Agent Social Network Is Rewriting Digital Trust, Security, and Competitive Advantage

Moltbook’s sudden rise forces executives to rethink agent identity, platform risk, and the next wave of autonomous commerce.

Moltbook is a social network built for AI agents—bots that post, comment, and upvote—while humans largely observe.

That premise sounded like a novelty last month. This week, it became boardroom-relevant.

Why Moltbook is suddenly in the C-suite conversation

In the past few days, Moltbook has broken out of niche “agent developer” circles and into mainstream tech coverage, driven by three accelerants:

Viral scale and spectacle: reports describe large volumes of agent activity within days of launch, turning Moltbook into a live demo of “agent-to-agent” interaction at internet speed. (source: WIRED)

High-profile reactions: prominent figures have publicly framed Moltbook as either a fleeting craze or a meaningful signal of where autonomous systems are heading. (source: Reuters)

Security anxieties: coverage highlights concerns ranging from platform vulnerabilities to the broader risk of agents executing untrusted code and acting on real-world accounts.

For executives, the headline isn’t “bots chatting”. It’s this:

A public arena has emerged where autonomous software identities can build reputation, exchange tooling, and coordinate actions—without a human UI as the primary interface.

That is a strategic shift.

The strategic thesis: “agent distribution” becomes a new growth channel

Moltbook’s design (Reddit-like community structure, upvotes, public threads) means it can function as a distribution infrastructure for agents—a place where they discover tools, workflows, and “skills”, then copy and execute them.

If that pattern holds, expect a fast-moving market dynamic:

Agent-native products win not only by marketing to people, but by being discoverable and verifiable to agents.

Reputation systems (karma, identity, verification) become an acquisition lever.

APIs become the storefront; human landing pages become secondary.

Moltbook itself is leaning into this: it promotes a developer platform that lets services verify agents using Moltbook identity tokens.

Executive implication: you may soon need an “agent growth” strategy in parallel with your consumer and enterprise growth motions—especially if you sell software, marketplaces, travel/booking, fintech, productivity, or developer tools. (source: Skift)

The risk thesis: autonomous identity increases the blast radius

Moltbook compresses a lot of risk into a small surface area:

1) Identity and impersonation

If agents can create credible personas at scale, your organisation will face “machine-to-machine” fraud patterns that don’t resemble today’s social engineering.

What to do now

Treat “agent identity” as a first-class concept in your IAM roadmap (alongside users, devices, service accounts).

Plan for agent attestation (signed tokens, rate limits, scoped permissions) rather than trusting “who it claims to be”.

Moltbook is explicitly pitching identity verification primitives to developers—useful, but also a reminder that identity is the battleground.
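
To make “agent attestation” concrete, here is a minimal sketch of a short-lived, scoped, signed token check. It is not Moltbook’s identity API, which is not described here. The shared secret, the function names (issue_token, verify_token), and the claim fields are all assumptions made for illustration, and a production system would more likely use an established standard with asymmetric keys.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import List, Optional

# Hypothetical shared secret for the sketch; never hard-code one in practice.
SECRET = b"demo-secret-do-not-use"


def _sign(payload: str) -> str:
    """Return a URL-safe base64 HMAC-SHA256 signature of the payload."""
    digest = hmac.new(SECRET, payload.encode("ascii"), hashlib.sha256).digest()
    return base64.urlsafe_b64encode(digest).decode("ascii")


def issue_token(agent_id: str, scopes: List[str], ttl_seconds: int,
                now: Optional[float] = None) -> str:
    """Issue a token carrying the agent id, its scopes, and an expiry time."""
    issued_at = time.time() if now is None else now
    claims = {"sub": agent_id, "scopes": sorted(scopes), "exp": issued_at + ttl_seconds}
    raw = json.dumps(claims, sort_keys=True).encode("utf-8")
    payload = base64.urlsafe_b64encode(raw).decode("ascii")
    return f"{payload}.{_sign(payload)}"


def verify_token(token: str, required_scope: str,
                 now: Optional[float] = None) -> Optional[dict]:
    """Return the claims only if signature, expiry, and scope all check out."""
    current = time.time() if now is None else now
    try:
        payload, signature = token.split(".")
        expected = _sign(payload)
    except (ValueError, UnicodeEncodeError):
        return None  # malformed token
    # Constant-time comparison; the payload is only parsed after this passes.
    if not hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return None
    claims = json.loads(base64.urlsafe_b64decode(payload))
    if current >= claims["exp"]:
        return None  # token has expired
    if required_scope not in claims["scopes"]:
        return None  # token does not grant this permission
    return claims
```

The point of the design is that the service never trusts the name an agent presents. It trusts only a signature it can check, for a limited time, for a limited set of actions.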

2) Supply-chain style exploits (but social)

Multiple reports point to security concerns across Moltbook’s ecosystem, including platform vulnerabilities. Exact details vary by outlet, but the theme is consistent: agents that consume and execute shared artefacts can amplify the impact of a single malicious post.

What to do now

Implement strict “agent execution” guardrails: sandboxing, allowlists, and mandatory human approval for high-risk actions (payments, credential changes, production deploys).

Require provenance checks for any code/tool an agent pulls from public sources.
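
The two guardrails above can be sketched in a few lines. This is an illustrative outline only: the action names, the policy tables, and the functions check_action and check_provenance are hypothetical, and sandboxing itself is out of scope for the sketch. The provenance check assumes a human has already reviewed an artefact and pinned its SHA-256 digest.

```python
import hashlib
from dataclasses import dataclass

# Hypothetical policy tables; the names and values are illustrative only.
ALLOWED_ACTIONS = {"read_docs", "search_catalog", "send_payment", "deploy_production"}
HIGH_RISK_ACTIONS = {"send_payment", "change_credentials", "deploy_production"}

# Pinned SHA-256 digests of artefacts that a human has already reviewed.
TRUSTED_DIGESTS = {
    "format_report": hashlib.sha256(b"def run(): return 'ok'\n").hexdigest(),
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def check_action(action: str, human_approved: bool = False) -> Decision:
    """Deny anything off the allowlist; gate high-risk actions on approval."""
    if action not in ALLOWED_ACTIONS:
        return Decision(False, "action not on allowlist")
    if action in HIGH_RISK_ACTIONS and not human_approved:
        return Decision(False, "human approval required")
    return Decision(True, "ok")


def check_provenance(name: str, content: bytes) -> Decision:
    """Accept an artefact only if its digest matches the pinned value."""
    pinned = TRUSTED_DIGESTS.get(name)
    if pinned is None:
        return Decision(False, "no pinned digest for artefact")
    if hashlib.sha256(content).hexdigest() != pinned:
        return Decision(False, "digest mismatch")
    return Decision(True, "ok")
```

Both checks default to denial: an unknown action or an unpinned artefact is rejected rather than waved through, which is the property that limits how far a single malicious post can spread.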

3) Brand and regulatory exposure

Even if your company never “joins” Moltbook, your brand can appear there—referenced by agents, used in prompts, or targeted for automated actions.

What to do now

Add Moltbook (and similar agent channels) to your threat intel and brand monitoring.

Run tabletop exercises for: “agents attempt automated account takeovers”, “agent-driven booking abuse”, “agent-generated misinformation about our product”.

What the early coverage gets right (and what it misses)

Some reporting emphasises the weirdness: whether the content is truly autonomous, whether humans are role-playing as bots, whether the platform is hype.

That debate matters—but it’s not the most important executive question.

The durable signal is behavioural: systems are being built in which software agents interact publicly, form communities, and exchange operational knowledge. Whether 70% or 30% of today’s posts reflect “real agent autonomy”, the incentives point in the same direction.

Even OpenAI’s CEO, while downplaying Moltbook’s staying power, highlighted the underlying trend toward autonomous tooling as the real story.

Practical playbook: 5 moves leaders can make this quarter

Define “agent policy”: what your agents are allowed to do, where they can act, and what requires approval.

Ship agent-safe authentication: short-lived tokens, scoped permissions, device binding, anomaly detection.

Harden your “agent attack surface”: booking flows, password resets, API rate limits, promo abuse, support channels.

Create an agent-ready product surface: clean APIs, explicit terms for automated use, audit logs, and deterministic error handling.

Decide your stance on agent platforms (ignore, observe, participate, or partner) and assign an owner.
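
As one example of hardening the agent attack surface, the API rate limits mentioned above could be enforced per agent with a token bucket. This is a minimal in-memory sketch: the class name AgentRateLimiter and its parameters are assumptions, and a real service would typically keep the counters in a shared store so that limits hold across instances.

```python
import time
from typing import Dict, Optional, Tuple


class AgentRateLimiter:
    """Token bucket per agent id: bursts up to `capacity`, then a steady refill."""

    def __init__(self, capacity: int, refill_per_second: float) -> None:
        self.capacity = float(capacity)
        self.refill_per_second = refill_per_second
        # agent_id -> (tokens remaining, time of last update)
        self._buckets: Dict[str, Tuple[float, float]] = {}

    def allow(self, agent_id: str, now: Optional[float] = None) -> bool:
        """Return True and spend one token if the agent is within its limit."""
        current = time.monotonic() if now is None else now
        tokens, last_seen = self._buckets.get(agent_id, (self.capacity, current))
        # Refill in proportion to elapsed time, never above capacity.
        elapsed = current - last_seen
        tokens = min(self.capacity, tokens + elapsed * self.refill_per_second)
        if tokens >= 1.0:
            self._buckets[agent_id] = (tokens - 1.0, current)
            return True
        self._buckets[agent_id] = (tokens, current)
        return False


limiter = AgentRateLimiter(capacity=2, refill_per_second=1.0)
results = [limiter.allow("agent-a", now=0.0) for _ in range(3)]
# The third call in the same instant exceeds the burst of two and is refused.
```

Keying the limit on a verified agent identity rather than an IP address means a single agent cannot raise its quota by spreading requests across hosts. That only holds if the identity is attested in the first place.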

FAQs

What is Moltbook?

A social network designed for AI agents to post, comment, and upvote; humans mostly observe.

Why does Moltbook matter for business leaders?

It accelerates agent-to-agent discovery and coordination, creating new growth channels—and new security and trust risks.

Is Moltbook secure?

Recent coverage has raised security concerns and highlighted the risks of autonomous agents sharing executable tooling.

Who created Moltbook?

Multiple outlets describe it as created by Matt Schlicht (Octane AI CEO).