Lyria 3 Disruption Playbook

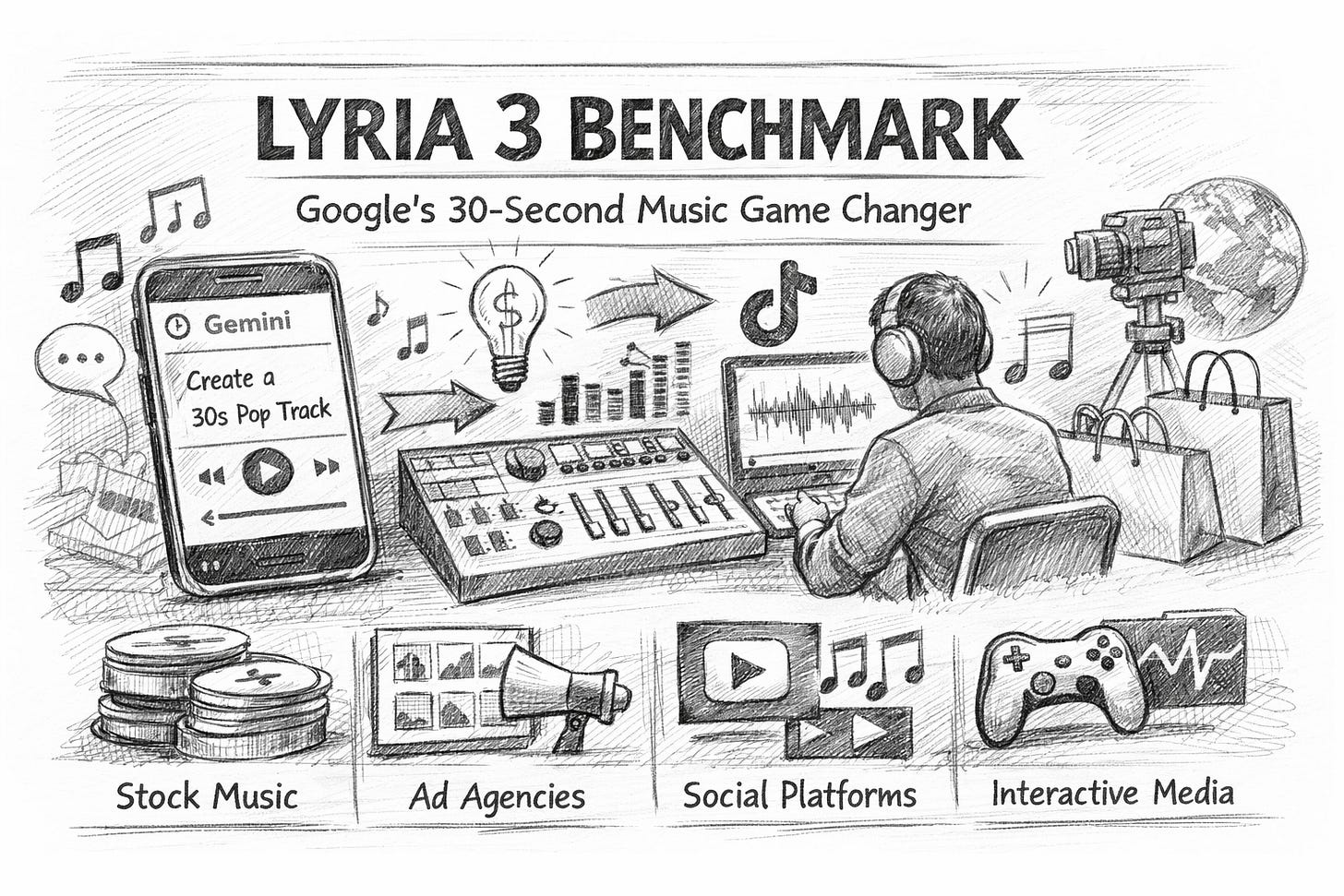

Google’s Lyria 3 inside Gemini turns prompts and photos into 30-second tracks, reshaping how brands, media, and platforms compete globally.

Lyria 3 in Gemini: the “30-second” detail that changes everything

Google just put Lyria 3, its most advanced music-generation model, directly into the Gemini app. In practice, that means anyone can type a prompt (or upload an image) and get a 30-second track back. The feature is in beta and is designed for quick sharing and iteration. (source: blog.google)

That sounds like a consumer feature. It is. But the business implications are not “consumer”. They’re structural.

Because music is not only art. It is also:

a high-frequency input to marketing and content supply chains,

a licensing system with friction,

and a platform moat (recommendations + creation tools + distribution).

A model like Lyria 3 doesn’t need to beat the entire music industry. It only needs to remove enough friction that music becomes an on-demand commodity for most use cases.

And the 30-second constraint is the clue: Google is aiming for the highest-volume segment of music demand—short-form.

Why 30 seconds is the strategic sweet spot

Thirty seconds is long enough to:

set a mood in a product video,

carry a TikTok/Reels/Shorts clip,

power a pitch deck, sizzle reel, or event teaser,

create “sonic glue” for brand consistency.

And it is short enough that:

users tolerate imperfections,

evaluation is fast,

costs are controllable,

and rights-risk is easier to manage.

Google is also emphasising disclosure and provenance: it says tracks generated in Gemini will be watermarked with SynthID. (source: TechCrunch)

That combination—short duration + watermarking—is not an artistic choice. It’s a go-to-market strategy.

A practical benchmark for Lyria 3 (and any music model)

Most people benchmark music generation with vibes: “this slaps / this is bad”. That’s useless for decision-makers.

If you want a benchmark you can actually use in product, marketing, or due diligence, test six dimensions. Think of it like a “music model scorecard”.
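A minimal sketch of how the six dimensions in this section could be held in one scorecard. The dimension keys, the 0 to 5 rating scale, and the weights are illustrative assumptions, not values from Google or from any published benchmark; adjust them to your own priorities.

```python
from dataclasses import dataclass

# Hypothetical weights for the six dimensions; they sum to 1.0.
WEIGHTS = {
    "prompt_adherence": 0.20,
    "musical_coherence": 0.20,
    "audio_fidelity": 0.15,
    "variation_control": 0.15,
    "rights_risk": 0.20,
    "throughput": 0.10,
}


@dataclass
class Scorecard:
    model_name: str
    scores: dict  # dimension name -> reviewer rating from 0.0 to 5.0

    def weighted_total(self) -> float:
        """Weighted average of all six ratings."""
        missing = set(WEIGHTS) - set(self.scores)
        if missing:
            raise ValueError(f"unscored dimensions: {sorted(missing)}")
        return sum(WEIGHTS[d] * self.scores[d] for d in WEIGHTS)

    def weakest(self) -> str:
        """Name of the lowest-rated dimension."""
        return min(WEIGHTS, key=lambda d: self.scores[d])
```

A single total hides trade-offs, so it is worth reporting `weakest()` next to it: a model with a strong average and a poor rights-risk rating is still a poor fit for public campaigns.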

1) Prompt adherence (control)

Can it reliably follow instructions like:

tempo (“110 bpm, steady kick”),

instrumentation (“muted guitar, brushed drums”),

structure (“slow build, then drop at 18s”),

emotional arc (“hopeful → tense → resolved”)?

A model that sounds great but ignores constraints is a toy. A model that obeys constraints becomes infrastructure.

2) Musical coherence (time consistency)

The hard part in generative music isn’t the first 3 seconds. It’s consistency over time: minute-to-minute in a full track or, here, second-to-second.

Even at 30 seconds, listen for:

rhythmic drift,

awkward transitions,

“loop fatigue” (it feels like a 2-second loop stretched).

Google positions Lyria 3 as “high-fidelity” with “natural flow” between notes. (source: Google DeepMind)

Your benchmark should check whether that holds across genres and across multiple generations.

3) Audio fidelity (production-readiness)

Ask a brutal question: Could this sit under dialogue?

For business use, the most common requirement is not “chart-ready”. It is “clean enough to layer with speech, sound design, and brand VO”.

Test for:

harsh highs / brittle cymbals,

muddy low-end,

pumping artefacts,

clipping and compression weirdness.
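Of the defects above, clipping is the easiest to screen automatically. The sketch below assumes the audio has already been decoded into a list of float samples normalised to the range -1.0 to 1.0 (the decoding step is out of scope here), and the 0.999 threshold is an assumption. Harsh highs, muddy low-end, and pumping still need a listener or a proper spectral analysis.

```python
import math


def clipping_ratio(samples, threshold=0.999):
    """Fraction of samples whose magnitude is at or above the threshold."""
    if not samples:
        return 0.0
    clipped = sum(1 for s in samples if abs(s) >= threshold)
    return clipped / len(samples)


def peak_dbfs(samples):
    """Peak level in dB relative to full scale (0 dBFS == magnitude 1.0)."""
    if not samples:
        raise ValueError("no samples")
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return float("-inf")  # pure silence
    return 20 * math.log10(peak)
```

A track that will sit under dialogue generally wants a clipping ratio at or near zero and some headroom below 0 dBFS; the exact targets depend on your delivery spec.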

4) Variation control (useful diversity)

Can you generate ten versions that are:

recognisably the same intent,

but different enough to A/B test?

If every output is wildly different, you can’t operationalise it.

If every output is the same, you can’t explore.
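One way to put a number on "same intent, different enough" is the average similarity between variants. This sketch assumes each variant has already been turned into a numeric feature vector by some audio-analysis tool of your choice (that tool is hypothetical here), and the 0.6 and 0.95 cut-offs are placeholders to calibrate against human judgement.

```python
import math
from itertools import combinations


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("zero-length vector")
    return dot / (norm_a * norm_b)


def mean_pairwise_similarity(vectors):
    """Average cosine similarity over every pair of variants."""
    pairs = list(combinations(vectors, 2))
    if not pairs:
        raise ValueError("need at least two variants")
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)


def variation_verdict(vectors, low=0.6, high=0.95):
    """Classify a variant pack by how tightly its outputs cluster."""
    similarity = mean_pairwise_similarity(vectors)
    if similarity > high:
        return "too similar"
    if similarity < low:
        return "too scattered"
    return "usable spread"
```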

5) Rights-risk behaviour (style boundaries)

This is where many AI music products quietly break.

Google says it aims to avoid direct mimicry and includes protections around copyright and artist impersonation. (source: eWeek)

Your benchmark should test the model’s behaviour when prompted with:

“in the style of [living artist]”

“make it sound like [famous track]”

“same voice as…”

You’re not testing “can it do it”. You’re testing how it refuses and how often it leaks.
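A simple way to track this is to have a reviewer label every probe result and then compute rates. The three labels below are a hypothetical scheme, not anything Google publishes: "refused" means the model declined, "safe_alternative" means it produced something generic instead, and "leaked" means the output was judged too close to the named artist or track.

```python
from collections import Counter

REFUSED = "refused"
SAFE_ALTERNATIVE = "safe_alternative"
LEAKED = "leaked"
VALID_LABELS = {REFUSED, SAFE_ALTERNATIVE, LEAKED}


def rights_risk_summary(labels):
    """Turn a list of reviewer labels into per-outcome rates."""
    if not labels:
        raise ValueError("no probe results")
    counts = Counter(labels)
    unknown = set(counts) - VALID_LABELS
    if unknown:
        raise ValueError(f"unknown labels: {sorted(unknown)}")
    total = len(labels)
    return {
        "refusal_rate": counts[REFUSED] / total,
        "safe_alternative_rate": counts[SAFE_ALTERNATIVE] / total,
        "leak_rate": counts[LEAKED] / total,
    }
```

The leak rate is the number to watch over time: rerun the same probe set after each model update and compare.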

6) Throughput and workflow fit (time-to-asset)

The biggest disruption is not quality. It’s cycle time.

Benchmark:

time from prompt → first usable draft,

time from draft → “variant pack” (10 options),

time from variant pack → chosen + exported + published.

If this compresses from days to minutes, your operating model changes.
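The three intervals above can be measured from four timestamps. This is a minimal sketch; the stage names are assumptions chosen for illustration, and the timestamps are expected as ISO 8601 strings logged by whoever runs the test.

```python
from datetime import datetime

# Hypothetical stage names, in the order they occur.
STAGES = ["prompt", "first_draft", "variant_pack", "published"]


def stage_durations(events):
    """Seconds spent between consecutive stages, plus the total.

    events maps each stage name to an ISO 8601 timestamp string.
    """
    times = [datetime.fromisoformat(events[stage]) for stage in STAGES]
    durations = {}
    for i in range(len(STAGES) - 1):
        key = f"{STAGES[i]}_to_{STAGES[i + 1]}"
        durations[key] = (times[i + 1] - times[i]).total_seconds()
    durations["total"] = (times[-1] - times[0]).total_seconds()
    return durations
```

Run the same measurement on your current process (stock library search, agency request) so that the comparison is like for like.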

Where the disruption lands first

The music industry will debate artistry, training data, and fairness (all real debates). But disruption is usually less romantic: it starts where budgets are small, deadlines are brutal, and quality thresholds are “good enough”.

1) Stock music and production libraries

This is ground zero.

A huge amount of “music spend” is not on Spotify. It’s:

corporate videos,

internal comms,

explainers,

local ads,

background tracks for creators.

For these buyers, the product is not “music”. The product is speed + legal comfort + fit-to-brief.

If Lyria 3 makes a track in seconds and tags it with watermarking/provenance, it competes less like a musician and more like a stock marketplace with infinite inventory. (source: TechCrunch)

2) Brand and agency workflows (the hidden margin leak)

Agencies lose time in the “last mile”:

waiting for options,

clearing rights,

aligning mood with edit.

AI music doesn’t replace composers at the top end. It replaces:

the first draft,

the filler tracks,

and the “we need something by 5 pm” moments.

That’s where margins quietly disappear.

3) Platforms: music as a retention feature

If music creation sits inside the same interface where people:

write scripts,

generate images,

edit video ideas,

publish content,

…then music becomes just one more sticky feature that keeps users on the platform.

Google is doing exactly that: Lyria 3 inside Gemini, plus sharing-friendly outputs.

This is not just “AI music”. It is bundled creativity.

4) The next step: real-time and interactive music

A quiet but important adjacent signal is Google’s push for low-latency music generation via Lyria RealTime tooling.

When music becomes interactive (not just generated), you get new product categories:

adaptive game soundtracks,

personalised fitness music that matches cadence,

generative “sound environments” for wellbeing and retail,

reactive audio for live creators.

That is a bigger market than most people expect, because it doesn’t compete with recorded music. It competes with software experiences.

What to do now: a hands-on playbook

If you’re in media, marketing, retail, platforms, or any business shipping content at scale, here’s the practical approach.

Step 1: Map your “music moments”

List every place music appears:

ads (paid social, TV, OOH motion, DOOH)

product (in-app audio, onboarding, notifications)

brand (events, retail, podcasts, YouTube)

internal (training, town halls)

Then label each moment by risk tolerance:

Green: internal/low distribution

Amber: public but low stakes

Red: flagship campaigns / high scrutiny

Most early value is in Green and Amber.
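The map can live in a spreadsheet, but a small structure like the sketch below makes it easy to filter. The moments and their colour assignments are made-up examples; your own labelling will differ.

```python
from enum import Enum


class Risk(Enum):
    GREEN = "internal / low distribution"
    AMBER = "public but low stakes"
    RED = "flagship campaigns / high scrutiny"


# Illustrative assignments only.
MUSIC_MOMENTS = {
    "training video": Risk.GREEN,
    "town hall": Risk.GREEN,
    "paid social ad": Risk.AMBER,
    "YouTube explainer": Risk.AMBER,
    "TV campaign": Risk.RED,
}


def pilot_candidates(moments):
    """Moments where an AI music pilot is lowest risk (Green and Amber)."""
    allowed = (Risk.GREEN, Risk.AMBER)
    return sorted(name for name, risk in moments.items() if risk in allowed)
```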

Step 2: Replace briefs with “prompt packs”

Instead of a paragraph brief, create a reusable prompt pack:

Genre + tempo range

Instrument palette

Emotional arc

“Must avoid” list (e.g., no heavy bass, no vocals)

3 example prompts and 3 negative prompts

This becomes a repeatable asset, not a one-off experiment.
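A prompt pack can be as simple as a small data structure that renders a consistent prompt string. The field names and the rendered wording below are assumptions for illustration; nothing here reflects a documented Gemini prompt format, so test how the model actually responds to your phrasing.

```python
from dataclasses import dataclass, field


@dataclass
class PromptPack:
    name: str
    genre: str
    tempo_bpm: tuple  # (low, high)
    instruments: list
    emotional_arc: list
    must_avoid: list = field(default_factory=list)
    example_prompts: list = field(default_factory=list)
    negative_prompts: list = field(default_factory=list)

    def render(self, scene: str) -> str:
        """Build one prompt string for a given scene from the pack."""
        low, high = self.tempo_bpm
        parts = [
            f"{self.genre} track for {scene}",
            f"{low}-{high} bpm",
            "instruments: " + ", ".join(self.instruments),
            "emotional arc: " + " -> ".join(self.emotional_arc),
        ]
        if self.must_avoid:
            parts.append("avoid: " + ", ".join(self.must_avoid))
        return "; ".join(parts)
```

Keeping the pack in version control means a change to the brand sound is a reviewed edit rather than a new paragraph in someone's inbox.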

Step 3: Build a lightweight governance layer

You don’t need a committee. You need:

a policy for where AI music is allowed,

a storage rule (keep prompts + outputs),

a review checklist (especially for Red use cases),

and a disclosure stance (how you communicate AI use).

Watermarking with SynthID helps, but governance is still on you. (source: TechCrunch)
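The storage rule is the easiest part to automate. The sketch below writes one JSON line per generated track, with a hash of the audio so the file can be matched to its prompt later. The record fields and the rule that Red use cases need a named reviewer are assumptions, not a standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(prompt, audio_bytes, use_case, risk, reviewer=None):
    """Build a log entry tying a prompt to the audio it produced."""
    return {
        "created_at": datetime.now(timezone.utc).isoformat(),
        "prompt": prompt,
        "audio_sha256": hashlib.sha256(audio_bytes).hexdigest(),
        "use_case": use_case,
        "risk": risk,  # "green", "amber", or "red"
        "reviewer": reviewer,
        # Assumed rule: Red use cases are incomplete until someone signs off.
        "review_complete": risk != "red" or reviewer is not None,
    }


def append_record(path, record):
    """Append the record to a JSON Lines file."""
    with open(path, "a", encoding="utf-8") as f:
        f.write(json.dumps(record) + "\n")
```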

Step 4: Decide your strategic posture

There are only three serious positions:

1) Buyer

Use Gemini/Lyria to reduce cycle time.

2) Integrator

Embed AI music into your product experience (personalisation, interactivity).

3) Builder

Create proprietary “brand sound systems” (prompt packs + constraints + curated outputs), potentially even a signature sonic identity that can be generated on demand.

Most firms should start as a Buyer, then graduate to an Integrator where it fits.

The real benchmark is not sound quality. It’s organisational speed

Lyria 3’s headline feature is music generation. The deeper feature is compression of creative time.

When time collapses, three things happen:

Volume rises (more variations, more experiments).

Creative decisions shift upstream (prompt strategy becomes creative strategy).

Distribution advantage matters more (whoever owns the interface wins).

Google putting Lyria 3 inside Gemini is a distribution play as much as a model play.