From Homework Helpers to Stock Pickers: How AI Is Quietly Rewriting Everyday Decisions

AI is no longer experimental; it is shaping study habits, creativity, and financial choices daily.

AI is no longer confined to research labs or productivity dashboards. It is increasingly embedded in how people study, create, and even evaluate financial opportunities. What is changing is not just access to AI, but who relies on it, and for what kinds of decisions.

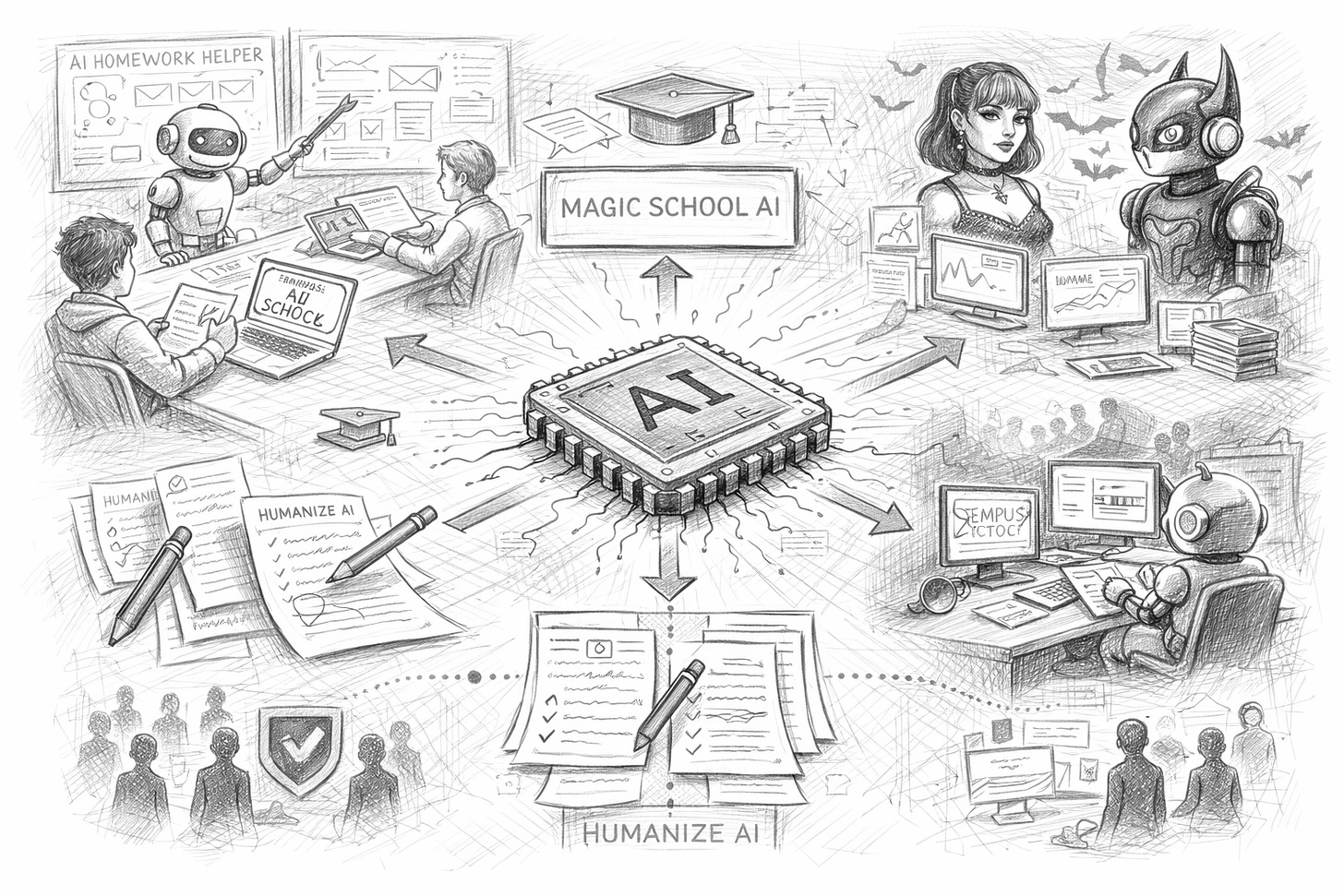

From education platforms like Magic School AI and tools positioned as AI homework helpers to financial interest in Tempus AI stock, and even creative or niche communities experimenting with concepts such as Goth AI, we are seeing AI expand into highly personal and practical domains.

This is not about automation at scale. It is about micro-decisions at scale — thousands of small choices made every day with algorithmic assistance.

AI in education: from tutoring to dependency

Tools branded as AI homework helpers are increasingly positioned as study companions rather than answer generators. The promise is efficiency, clarity, and personalised explanations.

Platforms such as Magic School AI take this further by offering structured lesson planning, assessments, and classroom support for educators, not just students.

The strategic shift is important:

AI is becoming part of learning design, not just learning shortcuts.

Teachers are using it to scale feedback and preparation.

Students are using it to scaffold understanding, not only to complete tasks.

The risk, however, is subtle. When AI becomes the default first step, learning can shift from exploration to optimisation — choosing the fastest path rather than the deepest understanding.

For education systems, the challenge is not banning AI, but teaching how to work with it critically.

Financial curiosity meets algorithmic confidence

Interest in Tempus AI stock reflects a broader phenomenon: retail investors increasingly view AI companies not only as technology providers but also as indicators of where entire sectors may be heading.

This blends two behaviours:

tracking AI as an industry narrative

treating AI-driven firms as signals of future healthcare, biotech, or data infrastructure growth

The concern here is not speculation itself, but overconfidence in technical mystique. When companies are labelled “AI-first”, they often receive credibility that may or may not align with their actual fundamentals.

For investors and analysts, this raises a governance question:

Is AI being evaluated as a tool, or as a story?

When AI becomes part of the brand, scrutiny must increase — not decrease.

Creative identity and niche AI cultures

Not all AI adoption is about productivity or finance. Communities are experimenting with highly specific creative identities, including concepts branded as Goth AI, blending aesthetics, subcultures, and generative media.

This matters because it shows how AI is becoming:

a stylistic collaborator

a cultural amplifier

a way to scale personal expression

In these spaces, AI is not replacing creators. It is extending their visual language and accelerating experimentation. The line between tool and co-creator becomes less technical and more emotional.

This also explains why debates over authenticity are intensifying.

The persistence of “humanise” tools

Alongside creative expansion, there is still strong interest in rewriting and stylistic masking through services marketed as “humanise”, “humanise AI”, and “humanise AI free”.

The underlying motivation is rarely deception for its own sake. More often, it reflects:

fear of being penalised for using AI

institutional rules that lag behind reality

anxiety about originality and authorship

However, heavy reliance on rewriting tools can reduce accountability. If content is continuously filtered to “feel human”, it becomes harder to trace intent, errors, and responsibility.

In professional environments, this creates a paradox:

AI is adopted for speed, but hidden for safety.

Long-term, this tension is not sustainable.

Automation for tasks, delegation for judgment

Tools such as Gauth AI and Clawdbot illustrate another shift: automation is moving from simple execution into task orchestration.

Rather than just answering questions, these systems:

retrieve information

interact with multiple services

guide step-by-step processes

This is not full autonomy, but it is delegated competence.

Users are no longer asking, “What is the answer?”

They are asking, “Handle this for me.”

That distinction matters because when AI starts acting across tools and platforms, error impact increases. A wrong answer is inconvenient; a wrong action can be costly.

As agents become more capable, oversight becomes more important, not less.

The new normal: assisted living, not artificial intelligence

Across education, creativity, finance, and personal productivity, the pattern is consistent: AI is not replacing humans, but it is reshaping how humans make decisions.

People are:

studying with it

creating with it

investing while tracking it

delegating small but meaningful actions to it

The strategic implication is clear.

The next phase of AI adoption is not about smarter models.

It is about smarter integration into everyday judgment.

And the organisations that will struggle most are not those without AI tools, but those without clear rules for:

when to trust them

when to verify them

and when to ignore them

Because in a world of constant assistance, discernment becomes the real competitive skill.

Hey, great read as always. This piece really makes me think about what you wrote last time regarding AI and the future of critical thinking. As a math teacher, I'm seeing exactly this with my students. The distinction you make between exploration and optimisation is spot on, and so important for educators to grapple with. Really appreciate your insights!