Empathy isn’t just for humans anymore?

The paper “Large language models are proficient in solving and creating emotional intelligence tests” (Schlegel, Sommer, & Mortillaro, 2025) explores a striking idea: AI systems, particularly large language models (LLMs), can outperform humans on standardized tests of emotional intelligence (EI). Empathy and emotional reasoning have traditionally been seen as uniquely human, yet machines like ChatGPT-4, ChatGPT-o1, Claude 3.5, DeepSeek V3, and Gemini 1.5 now demonstrate them, at least at the cognitive level.

Key Findings of the Study

AI Outperforms Humans in Emotional Intelligence Tests:

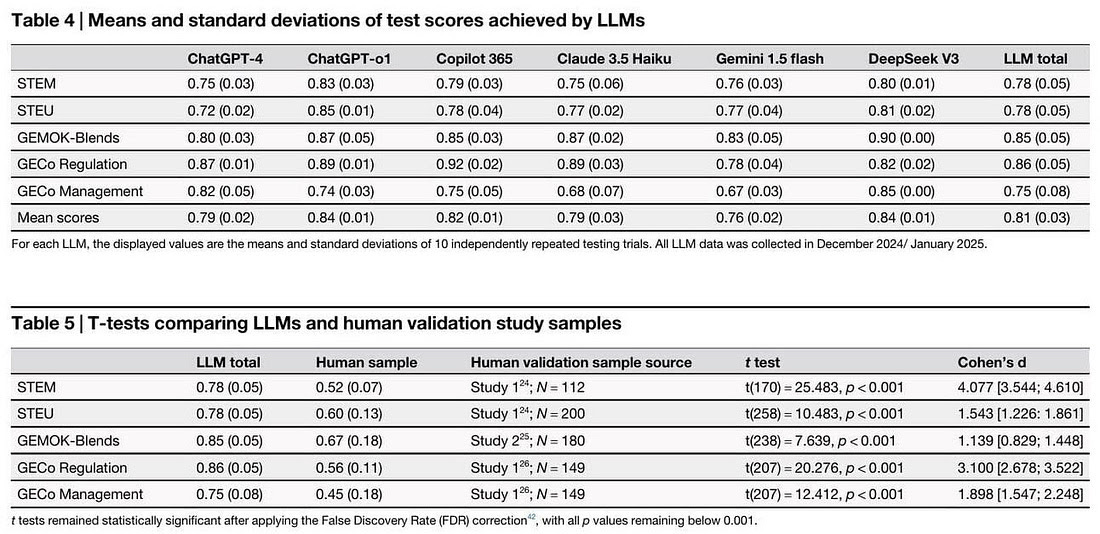

Across five widely validated EI tests (STEU, STEM, GEMOK-Blends, and GECo’s emotion regulation and management subtests), LLMs achieved an average accuracy of 81%, far exceeding the 56% human average.

ChatGPT-o1 and DeepSeek V3 performed particularly well, scoring over two standard deviations above human benchmarks.

Cognitive Empathy in AI:

LLMs demonstrated cognitive empathy by accurately reasoning about emotions, their causes, and effective regulation strategies.

AI-generated EI tests (created by ChatGPT-4) were found to have comparable quality to human-designed tests, with similar difficulty levels and strong correlations (r = 0.46) between original and AI-generated versions.

Human Perception of AI-Created Items:

Participants rated ChatGPT-generated scenarios as slightly clearer and more realistic, though less diverse in content compared to human-written ones.

Psychometric properties such as reliability (Cronbach’s alpha) and construct validity were largely similar, though ChatGPT-generated tests had slightly weaker correlations with external measures like vocabulary or other EI tests.
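For readers unfamiliar with Cronbach’s alpha, the sketch below shows how this reliability coefficient is commonly computed from a table of item scores. It is only an illustration of the standard formula, not the authors’ analysis code: the function name `cronbach_alpha` and the `toy_scores` data are hypothetical and were invented for this example.

```python
from statistics import variance


def cronbach_alpha(scores):
    """Cronbach's alpha for a respondents-by-items table of scores.

    scores: list of rows, one row per respondent, one column per test item.
    Uses the standard formula
        alpha = k / (k - 1) * (1 - sum(item variances) / variance(total scores))
    with sample variances (n - 1 in the denominator).
    """
    k = len(scores[0])  # number of items
    if k < 2 or len(scores) < 2:
        raise ValueError("need at least two items and two respondents")

    # Variance of each item (column) across respondents.
    item_variances = [variance(column) for column in zip(*scores)]

    # Variance of each respondent's total score.
    total_variance = variance([sum(row) for row in scores])

    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical data: 5 respondents, 4 items, 1 = correct, 0 = incorrect.
# These numbers are made up and do not come from the study.
toy_scores = [
    [1, 1, 1, 0],
    [1, 0, 1, 1],
    [0, 0, 1, 0],
    [1, 1, 1, 1],
    [0, 0, 0, 0],
]

print(round(cronbach_alpha(toy_scores), 2))
```

Higher values mean the items tend to rise and fall together across respondents, which is what “reliability” refers to here. Comparing this coefficient between the original and the ChatGPT-generated versions of a test is one way to check whether the two are similarly consistent.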

Implications for AI Applications:

These findings suggest that AI could support emotionally demanding tasks in healthcare, leadership, therapy, and customer service, where consistency and emotional reasoning are key.

LLMs' ability to create psychometrically valid assessments could transform test design and reduce the time and costs traditionally required for developing emotional intelligence evaluations.

Image: From the paper “Large language models are proficient in solving and creating emotional intelligence tests” (Schlegel, Sommer, & Mortillaro, 2025)

Discussion and Implications

The study challenges the belief that empathy and emotional intelligence are exclusive to human cognition. AI lacks affective empathy, the subjective experience of sharing someone’s feelings, but on these tests it can simulate cognitive empathy to a degree that surpasses average human performance.

This has two major implications:

For Human-AI Interaction: AI-powered agents could enhance communication, conflict resolution, and emotional support in contexts like telemedicine or online education, and may perform more consistently than humans, who are influenced by stress, fatigue, or bias.

For Psychometrics: ChatGPT’s ability to create valid EI assessments indicates that AI could assist researchers and practitioners in generating high-quality psychological measurement tools at scale.

However, the authors caution that AI’s performance in real-world emotional interactions—often ambiguous and culturally nuanced—remains underexplored. Furthermore, the study highlights that the reasoning of LLMs is influenced by the Western-centric datasets on which they are trained, potentially limiting their cross-cultural validity.

Limitations

Contextual and Cultural Bias: Emotional norms vary significantly across cultures, and LLMs may not yet effectively navigate this complexity.

Black Box Mechanism: The process by which LLMs arrive at correct emotional interpretations remains opaque.

Real-World Performance: Structured test performance does not guarantee similar success in free-flowing human conversations.

The research suggests that AI is rapidly encroaching on emotional intelligence, a domain once believed to be intrinsically human. While machines do not “feel,” they can reason about emotions with remarkable accuracy and consistency on structured tests. The study marks a shift in how we view empathy and emotional reasoning in AI, pointing toward a future where emotionally intelligent machines could complement or even surpass humans in socially sensitive domains.

While this groundbreaking study demonstrates that AI can outperform humans on emotional intelligence tests and even generate valid EI assessments, it is crucial to emphasise a fundamental truth: these systems are not conscious, self-aware, or truly empathic. What we see is inference, not feeling. Large Language Models, like ChatGPT, mimic patterns of human behaviour by statistically predicting the most plausible response, based on vast amounts of data. They do not "understand" emotions in the human sense, nor do they feel what others feel.

This distinction—between simulated cognition and real experience—is more than academic. If we mistake machine inference for genuine understanding, we risk anthropomorphising AI, attributing intentions and awareness where none exist. This has profound ethical implications, particularly in contexts such as therapy, healthcare, or leadership, where genuine empathy is crucial.

As we explore this fascinating convergence of machine intelligence and human emotional capacities, we must keep asking the deeper questions:

What does it mean for a machine to "understand" emotions?

Are we simply building sophisticated mirrors of our own behaviour?

What are the risks of replacing or outsourcing truly human interactions to systems that only simulate emotional connection?

These are not questions with simple answers—and they deserve both time and space in our book. This study serves as a powerful starting point, but the conversation about the boundaries between human empathy and artificial mimicry is only just beginning.