Altman’s Superintelligence Manifesto

Altman’s “people first” manifesto looks generous. In practice, it could centralise power, unless governments set tougher rules now.

The “People First” pitch is also a power play

Sam Altman has started saying the quiet part out loud: superintelligence is close, disruption is unavoidable, and politics will have to catch up fast. Axios framed it as a kind of “New Deal” for the superintelligence era. (Axios)

OpenAI’s 13-page document, “Industrial Policy for the Intelligence Age: Ideas to Keep People First” (April 2026), is framed as a conversation starter, but it is also an attempt to define the agenda before governments do.

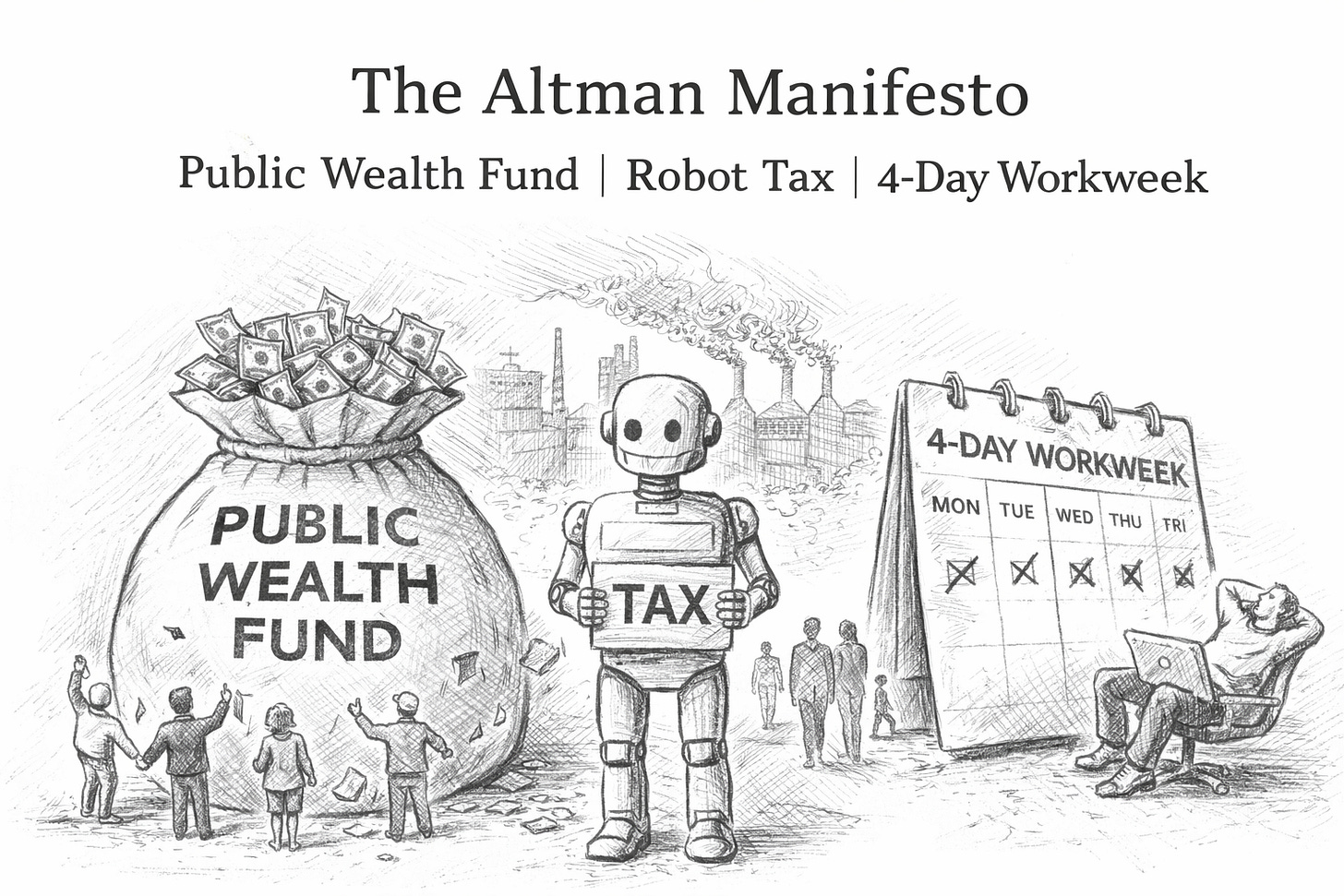

The plan clusters around three headline ideas that sound worker-friendly:

Public Wealth Fund (a national fund investing in AI-era growth, paying citizens dividends)

Robot / automated labour tax (modernise the tax base as payroll taxes erode)

Four-day workweek pilots funded by “efficiency dividends” (32 hours, no pay cut, maintain output)

Each idea can be sensible. Each can also be used to launder a deeper shift: concentrating intelligence, capital, and bargaining power in a small number of frontier AI firms, then redistributing a politically acceptable fraction later.

If you’re running a business, advising one, or financing one, the practical question is not “is this kind?” It’s:

What would make these policies real, measurable, and hard to game, without turning them into a licence for monopoly?

1) Public Wealth Fund: the dividend is the marketing; the governance is the battleground

The Public Wealth Fund proposal is simple: seed a large national fund, invest in diversified assets (including AI companies and AI adopters), and distribute returns to citizens.

It becomes a social contract for accepting concentration

A public dividend can make the public tolerate a winner-takes-most AI economy, especially if the narrative is: “Yes, a few firms will capture huge value, but everyone gets a cheque.”

That is politically elegant. It is also strategically convenient for frontier labs.

The key design question: what is the fund actually buying?

If the fund mostly holds public equities and broad indices, it will behave like a national pension-style portfolio. Fine, but then it’s not directly correcting AI concentration; it’s just letting citizens ride the same market wave.

If the fund is seeded with special access (equity, warrants, compute credits, or licensing rights from the most powerful AI builders), it becomes much more consequential. But that is where governance becomes explosive:

Who decides which models count as “frontier” and therefore owe contributions?

Who values private stakes in fast-moving AI companies?

Who prevents regulatory capture (the fund becoming dependent on the firms it should discipline)?

A “people first” version that actually bites

If policymakers take this seriously, the fund should be paired with competition rules and public-option capacity; otherwise it’s merely a legitimacy shield.

A practical blueprint executives should anticipate:

Contribution triggers tied to measurable thresholds: revenue share, compute scale, or model capability tiers (rather than voluntary pledges).

Anti-capture governance: independent board, transparent mandate, mandatory disclosures on holdings and conflicts.

Use dividends as stabilisers, not bribes: e.g., automatic top-ups when displacement metrics spike (mirroring the paper’s preference for automatic stabilisers in safety nets).
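To make “measurable thresholds” and “automatic top-ups” concrete, here is a minimal Python sketch of both rules. Every threshold, the displacement metric, and the names `Firm`, `owes_contribution` and `dividend_per_citizen` are hypothetical illustrations, not figures or mechanisms from the OpenAI paper. The point is only that a rule-based trigger can be checked by anyone, whereas a voluntary pledge cannot.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration only; none come from the OpenAI paper.
REVENUE_THRESHOLD_USD = 10_000_000_000  # annual AI-derived revenue
COMPUTE_THRESHOLD_FLOP = 1e26           # training compute of the firm's largest model
CAPABILITY_TIER_THRESHOLD = 3           # hypothetical regulator-assigned capability tier


@dataclass
class Firm:
    name: str
    ai_revenue_usd: float
    training_compute_flop: float
    capability_tier: int


def owes_contribution(firm: Firm) -> bool:
    """A firm owes a fund contribution if it crosses ANY measurable threshold."""
    return (
        firm.ai_revenue_usd >= REVENUE_THRESHOLD_USD
        or firm.training_compute_flop >= COMPUTE_THRESHOLD_FLOP
        or firm.capability_tier >= CAPABILITY_TIER_THRESHOLD
    )


def dividend_per_citizen(base_dividend: float,
                         displacement_pct: float,
                         trigger_pct: float = 5.0,
                         top_up_per_point: float = 100.0) -> float:
    """Base dividend plus an automatic top-up once displacement exceeds the trigger.

    The top-up grows with each percentage point of displacement above the trigger
    and falls back to zero when displacement recedes: a stabiliser set by rule,
    not by discretion.
    """
    excess_points = max(0.0, displacement_pct - trigger_pct)
    return base_dividend + excess_points * top_up_per_point


print(owes_contribution(Firm("ExampleLab", 2e9, 3e26, 2)))  # compute threshold crossed
print(dividend_per_citizen(500.0, displacement_pct=7.0))    # two points above trigger
```

Using “any threshold” rather than “all thresholds” is a deliberate choice in this sketch: it makes it harder for a firm to avoid contributing by staying just under one metric.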

2) Robot tax: the revenue isn’t the point; the incentive design is

OpenAI argues that the tax base may shift away from labour income (and payroll taxes) towards corporate profits and capital gains as AI reshapes work, so tax systems must adapt, including by exploring taxes on automated labour.

The robot tax debate is old. The hard part has always been implementation: what counts as a “robot”, and how do you avoid punishing productivity? A useful primer on the pitfalls is the Tax Policy Center’s analysis of “robot tax” logic.

Companies will automate in ways that are hard to tax

If you tax “robots” as physical assets, automation shifts into software and process redesign. If you tax “AI usage”, firms route usage through vendors, offshore entities, or bundled services.

So the most workable versions tend to tax outcomes, not “robots”:

Excess profits in specific sectors with rapid AI substitution

Windfall gains linked to AI deployment at scale

Capital income at the top end (where the upside concentrates)

This aligns with OpenAI’s own hint: rebalance towards capital-based revenues and consider targeted measures to sustain AI-driven returns.

A “people first” version that doesn’t kill adoption

If you’re advising boards, assume policy will move towards “retain, retrain, or pay” structures.

Concretely, expect mixes of:

Wage-linked credits for retention and retraining (the paper explicitly points to R&D-style incentives).

Transition levies that activate only when layoffs exceed thresholds (so you’re not taxing every efficiency improvement).

Sector-specific schemes (customer service, back-office operations, basic analytics) where displacement is most measurable.
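The “activate only above a threshold” logic of a transition levy can be sketched in a few lines. Assuming, purely for illustration, that AI-attributed layoffs up to 10% of headcount are levy-free and each job beyond that costs a flat amount, ordinary efficiency improvements pay nothing while large-scale displacement does. The function names, the 10% threshold, and both per-job amounts are hypothetical, not proposals from the paper.

```python
def transition_levy(layoffs: int, headcount: int,
                    threshold_pct: int = 10,
                    levy_per_excess_job: float = 20_000.0) -> float:
    """Hypothetical levy owed only on AI-attributed layoffs above a share of headcount."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    # Integer arithmetic keeps the levy-free allowance exact (no float rounding).
    allowed = headcount * threshold_pct // 100
    excess = max(0, layoffs - allowed)
    return excess * levy_per_excess_job


def retraining_credit(workers_retrained: int,
                      credit_per_worker: float = 5_000.0) -> float:
    """Hypothetical wage-linked credit for each worker retrained and retained."""
    return max(0, workers_retrained) * credit_per_worker


# 150 layoffs in a 1,000-person firm: 100 are levy-free, 50 are levied.
levy = transition_levy(layoffs=150, headcount=1_000)
credit = retraining_credit(workers_retrained=60)
print(f"levy {levy:,.0f}, credit {credit:,.0f}, net {levy - credit:,.0f}")
```

Pairing the levy with a credit mirrors the “retain, retrain, or pay” framing: the firm’s net exposure depends on how many people it redeploys, not only on how many it lets go.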

For companies, this becomes a finance-and-operating-model issue:

Build a workforce transition P&L line now (training, redeployment, severance, outplacement) so you’re not improvising under a future levy.

Treat “automation ROI” as after-policy ROI, and stress-test it under policy scenarios as you would for carbon pricing.
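Scenario-testing automation ROI works the same way as carbon-price scenarios: hold the project constant and vary the policy costs. The sketch below uses simple undiscounted ROI and entirely made-up figures; the scenario names, the `PolicyScenario` type and the cost levels are illustrative assumptions, not forecasts.

```python
from dataclasses import dataclass


@dataclass
class PolicyScenario:
    name: str
    transition_cost: float  # training, redeployment, severance, outplacement
    levy: float             # hypothetical automation or transition levy owed


def after_policy_roi(annual_savings: float, years: int,
                     upfront_cost: float, scenario: PolicyScenario) -> float:
    """Simple (undiscounted) ROI once policy-driven costs are added to project cost."""
    total_benefit = annual_savings * years
    total_cost = upfront_cost + scenario.transition_cost + scenario.levy
    return (total_benefit - total_cost) / total_cost


scenarios = [
    PolicyScenario("no new policy", transition_cost=0.0, levy=0.0),
    PolicyScenario("retain-and-retrain norms", transition_cost=500_000.0, levy=0.0),
    PolicyScenario("transition levy triggered", transition_cost=500_000.0, levy=1_500_000.0),
]

for s in scenarios:
    roi = after_policy_roi(annual_savings=1_000_000.0, years=3,
                           upfront_cost=1_000_000.0, scenario=s)
    print(f"{s.name}: ROI {roi:.0%}")
```

With these invented numbers the same project falls from 200% ROI with no new policy to 100% under retraining norms and to 0% if a levy is triggered, which is exactly the kind of spread a board should see before approving it.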

3) Four-day workweek: this is less about kindness than about control of productivity gains

The document proposes “efficiency dividends”: converting AI-driven efficiency gains into better benefits and time back, explicitly including time-bound 32-hour/four-day workweek pilots with no loss of pay that maintain output and service levels.

This is not fringe. The UK has already produced credible evidence from large trials that many organisations can hold output steady with fewer hours, often by cutting meeting waste and tightening workflows. The UKRI summary of research outcomes is a useful public-sector-facing reference point. (The Autonomy Institute)

A four-day workweek becomes a stealth work-intensification tool

Plenty of firms “compress” work into fewer days by increasing pace and surveillance. Employees get a “benefit” that quietly demands always-on performance.

That’s why OpenAI’s framing is revealing: pilots must “hold output and service levels constant”.

Read that again. The baseline assumption is not reduced output; it’s the same output, delivered in less time.

The corporate reality: you will be asked to prove where the productivity went

In an AI-heavy organisation, a four-day workweek is a governance mechanism: it forces leadership to decide whether AI productivity gains flow to:

shareholders (margin expansion),

customers (price cuts),

employees (time back/benefits), or

reinvestment (growth).

The “people first” promise only works if you can credibly measure and share gains.

Practical playbook:

Run 90-day pilots in functions with measurable throughput (support, finance ops, reporting, marketing ops).

Define “output” properly (quality-adjusted, not just volume).

Use the pilot to delete work, not compress it: meeting bans, decision rights, template-first documentation, fewer approvals.

Put a hard stop on AI-induced scope creep (“because it’s faster, do more”); this is the classic failure mode.
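“Define output properly” can be made operational with a pilot check like the one below. It is a minimal sketch that assumes quality-adjusted output means completed units minus units that failed QA or were reopened, and that “holding output” allows a 2% tolerance; both definitions, and the names `Period`, `pilot_holds_output` and `output_per_hour`, are hypothetical choices each organisation would need to set for itself.

```python
from dataclasses import dataclass


@dataclass
class Period:
    units_completed: int
    units_reworked: int   # units that failed QA or were reopened
    hours_worked: float


def quality_adjusted_output(p: Period) -> int:
    # Reworked units are excluded, so faster-but-sloppier work does not count as a gain.
    return p.units_completed - p.units_reworked


def pilot_holds_output(baseline: Period, pilot: Period, tolerance: float = 0.02) -> bool:
    """True if the pilot's quality-adjusted output is within `tolerance` of baseline."""
    return quality_adjusted_output(pilot) >= quality_adjusted_output(baseline) * (1 - tolerance)


def output_per_hour(p: Period) -> float:
    return quality_adjusted_output(p) / p.hours_worked


baseline = Period(units_completed=1_000, units_reworked=50, hours_worked=4_000)
pilot = Period(units_completed=980, units_reworked=30, hours_worked=3_200)  # 32-hour weeks

print(pilot_holds_output(baseline, pilot))
print(f"{output_per_hour(baseline):.3f} -> {output_per_hour(pilot):.3f} units/hour")
```

Measuring rework separately matters because volume-only metrics reward exactly the compression and intensification described above; a pilot that hits its unit count by shipping more defects fails this check.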

The hidden centre of gravity: infrastructure, energy, and bargaining power

One of the most concrete parts of the manifesto is not about dividends or taxes but about infrastructure: grid expansion and powering AI.

That’s where the real leverage lies, because whoever controls compute supply chains (energy, chips, data centres, permitting) controls the pace of AI deployment.

For investors and corporate strategists, the manifesto is signalling where political capital may go:

grid acceleration

public-private financing models

“AI should pay its way” narratives around energy costs

This matters because it shapes your growth costs. AI is not only software. It is industrial capacity.

What to watch next (and what to do now)

If Altman’s framing sticks, you should expect policy packages, not single policies: wealth fund + tax base shift + labour standards + safety nets + energy buildout.

Three immediate “board-level” actions that are low-regret:

Model your “AI displacement exposure” by role family (task substitution, time-to-automate, redeployability).

Pre-build your response: retraining pathways, internal talent marketplaces, redeployment budgets.

Track policy triggers: identify which of the jurisdictions where you operate are likely to pilot automated-labour taxes or incentivised reduced-hours schemes.
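A first pass at “AI displacement exposure by role family” can be a simple score combining the three factors named above. This sketch is one possible weighting under stated assumptions: exposure equals headcount × task-substitution share × urgency (how soon automation is practical within a five-year horizon) × the share of staff who cannot be redeployed. The formula, the horizon and every input value are illustrative, not a validated model.

```python
from dataclasses import dataclass


@dataclass
class RoleFamily:
    name: str
    headcount: int
    task_substitution: float  # share of tasks AI could plausibly perform (0-1)
    years_to_automate: float  # estimated years until substitution is practical
    redeployability: float    # share of staff who could move to adjacent roles (0-1)


def exposure_score(r: RoleFamily, horizon_years: float = 5.0) -> float:
    """Hypothetical score: headcount at risk within the horizon, net of redeployment."""
    # Urgency falls linearly to zero for roles not automatable within the horizon.
    urgency = max(0.0, 1 - r.years_to_automate / horizon_years)
    return r.headcount * r.task_substitution * urgency * (1 - r.redeployability)


def rank_by_exposure(families: list[RoleFamily]) -> list[str]:
    """Role-family names ordered from most to least exposed."""
    return [f.name for f in sorted(families, key=exposure_score, reverse=True)]


families = [
    RoleFamily("support", 200, task_substitution=0.6, years_to_automate=1.0, redeployability=0.5),
    RoleFamily("finance_ops", 100, task_substitution=0.5, years_to_automate=2.5, redeployability=0.8),
    RoleFamily("engineering", 300, task_substitution=0.3, years_to_automate=6.0, redeployability=0.7),
]

for f in families:
    print(f"{f.name}: {exposure_score(f):.1f} roles at risk")
print(rank_by_exposure(families))
```

The output is a ranking for prioritising retraining budgets, not a headcount forecast; its value lies in forcing explicit, reviewable estimates for each input.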

The point is not to predict one law. It’s to avoid being the company that looks surprised when the social licence for automation gets renegotiated.

Altman is right about one thing: upheaval is coming. The question is whether “people first” becomes a democratic redesign of the contract or a polite ribbon tied around a new concentration of power.