AI Tokenomics: Which model is best?

Pick the wrong model and you burn cash, time, and trust; pick well and you ship reliable AI products faster.

Most “AI strategy” conversations I have with my clients still start in the wrong place: Which model is best? That question changes weekly. Worse, it often produces the most expensive architecture you can buy.

A better question is: What does the model need to see, what must it produce, and what does failure cost?

We should treat models like production components: tokens in, tokens out, tools and documents in the middle, and lots of trade-offs you can actually measure.

Below is a hands-on way to think about models that will hold up even as “best model” leaderboards churn.

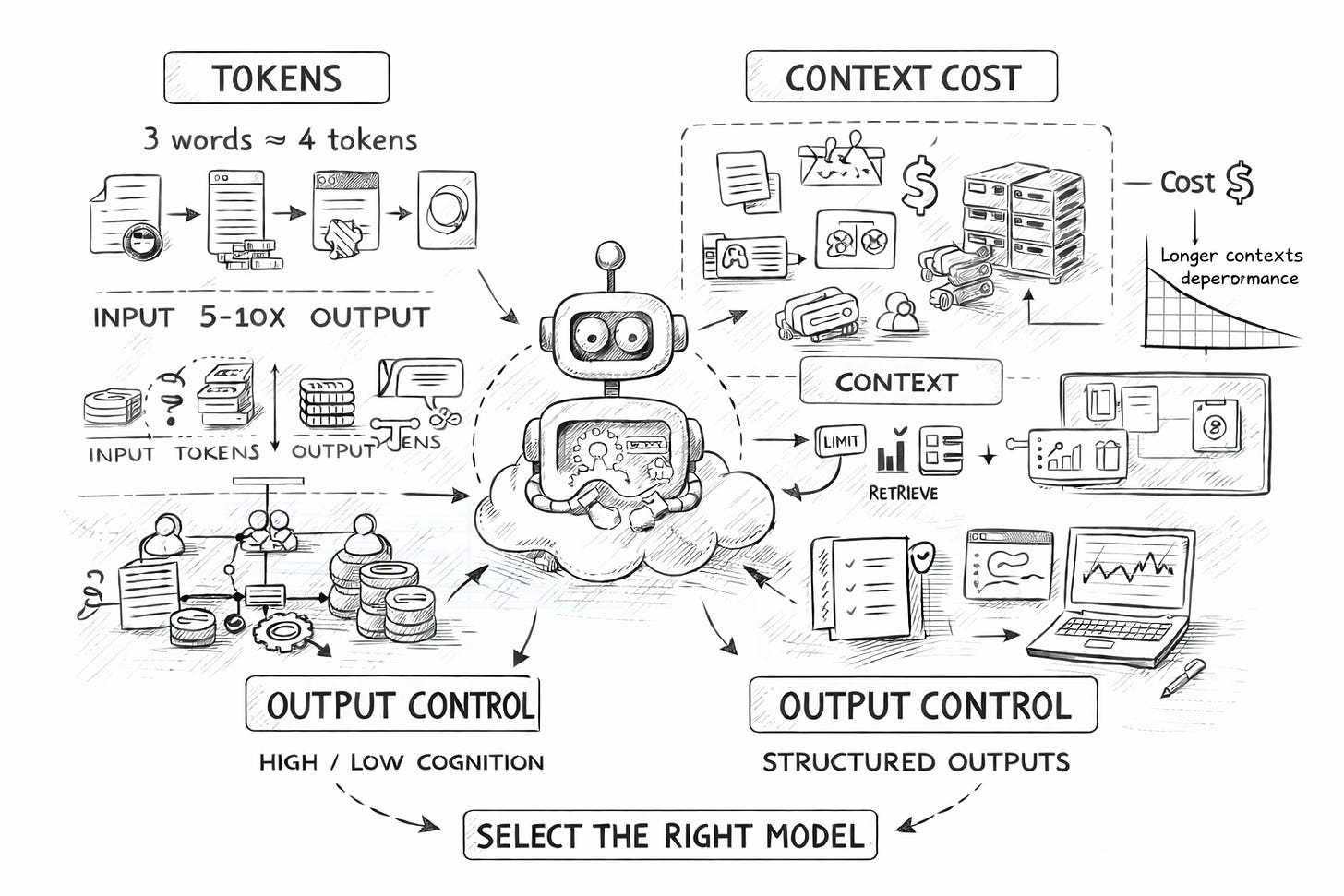

1) Start with the real unit of work: tokens

Every model decision is a pricing decision, a latency decision, and a quality decision disguised as “AI”.

Models operate on numbers, not words, so every input and output is first split into tokens (tokenisation).

Rule of thumb: 3 words ≈ 4 tokens.

Why this matters:

Your margin gets eaten by output. Output tokens are usually priced higher than input tokens, even though most workloads skew heavily toward input volume, often with input:output ratios of 5–10x.

Your UX gets eaten by “thinking”. Reasoning-heavy modes can be brilliant, but they also add latency because the model effectively does more work before speaking.

Your P&L gets eaten by context bloat. It’s easy to shove everything into a prompt; it’s hard to justify that invoice later.

A simple discipline that pays off immediately: treat tokens like cloud spend. Put them on a dashboard with:

average input tokens per request

average output tokens per request

p95 latency

cost per successful task completion (not cost per call)

If you can’t track those, “model choice” is theatre.
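
As a rough illustration of those four dashboard numbers, here is a minimal Python sketch. The `RequestLog` fields, the function names, and the two prices are assumptions made up for this example, not any provider's real rates; swap in your own logging schema and price list.

```python
import math
from dataclasses import dataclass

# Hypothetical prices in USD per million tokens; replace with your provider's rates.
INPUT_PRICE_PER_M = 3.00
OUTPUT_PRICE_PER_M = 15.00


@dataclass
class RequestLog:
    input_tokens: int
    output_tokens: int
    latency_s: float
    succeeded: bool  # did the task complete, not merely "did the call return"


def request_cost(log):
    """Cost of a single call in USD under the assumed prices."""
    return (log.input_tokens * INPUT_PRICE_PER_M
            + log.output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


def p95(values):
    """Nearest-rank 95th percentile."""
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def dashboard(logs):
    """Summarise a non-empty list of request logs into the four metrics."""
    if not logs:
        raise ValueError("no requests logged")
    n = len(logs)
    successes = sum(1 for log in logs if log.succeeded)
    total_cost = sum(request_cost(log) for log in logs)
    return {
        "avg_input_tokens": sum(log.input_tokens for log in logs) / n,
        "avg_output_tokens": sum(log.output_tokens for log in logs) / n,
        "p95_latency_s": p95([log.latency_s for log in logs]),
        # Failed calls still cost money, so divide total spend by successes only.
        "cost_per_success": total_cost / successes if successes else float("inf"),
    }
```

The design choice worth noting is the last line: failed calls stay in the numerator, which is what separates cost per successful task from cost per call.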

2) Understand what the model actually sees (and charge it rent)

A model doesn’t “read your app”. It sees a bundle:

system + user prompts

tool definitions/tool responses

images or audio (if used)

retrieved documents (RAG)

This list is your leverage, because most quality failures come from one of two things:

The model didn’t have the right data.

The model had too much data, and the right bit got diluted.

Unsexy truth: context windows are large, but performance still degrades as sequence length increases.

So don’t treat a 200k/1M context window as permission to be sloppy. Treat it as a buffer you protect.

Practical “context rent” rules:

If a piece of context isn’t used in the next 1–3 turns, it doesn’t belong in the prompt.

Prefer retrieval over “mega prompts”.

Summarise aggressively, but only after you have evals (more on that later).
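
One way to make "context rent" concrete is a small eviction pass before each call. The sketch below is an assumption-laden illustration: it uses "turns since last used" as a proxy for "will be used in the next 1–3 turns", and the `ContextItem` fields, `charge_rent` name, and default budget are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    name: str
    tokens: int
    last_used_turn: int  # last turn in which the model actually relied on this item


def charge_rent(items, current_turn, max_idle_turns=3, token_budget=8000):
    """Keep recently used items, most recent first, until the token budget is spent."""
    # Evict anything that has sat idle longer than the allowed window.
    fresh = [i for i in items if current_turn - i.last_used_turn <= max_idle_turns]
    fresh.sort(key=lambda i: i.last_used_turn, reverse=True)

    kept, used = [], 0
    for item in fresh:
        # Skip items that would overflow the budget; smaller ones may still fit.
        if used + item.tokens <= token_budget:
            kept.append(item)
            used += item.tokens
    return kept
```

Anything evicted here is a candidate for retrieval on demand rather than a permanent resident of the prompt.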

3) Reasoning models are not “smarter”, they are more expensive cognition on demand

Reasoning models “think before they answer” by generating hidden reasoning tokens. More reasoning means more tokens, and more tokens mean more latency and cost.

Not all reasoning models behave the same, and some allow you to control reasoning intensity.

Here’s the non-obvious insight: Reasoning is a feature flag, not a personality trait.

Use high reasoning when:

the task has many dependent steps (planning, multi-hop logic, agent workflows)

the cost of a wrong answer is high (regulatory summaries, financial calculations, critical operations)

Use low reasoning when:

you’re doing high-volume, low-stakes tasks (classification, routing, templated responses)

speed matters more than novelty

A common mistake: deploying a reasoning-heavy model everywhere “for safety”. That usually creates the opposite: slower experiences, more user impatience, and pressure to cut corners elsewhere.
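
Treating reasoning as a feature flag can be as simple as a routing function in front of the model call. This is a minimal sketch under stated assumptions: the `Task` fields, the `Effort` levels, and the threshold of three dependent steps are illustrative choices, and how an effort level maps onto a given provider's reasoning controls is left out.

```python
from dataclasses import dataclass
from enum import Enum


class Effort(Enum):
    LOW = "low"
    HIGH = "high"


@dataclass
class Task:
    kind: str             # e.g. "classification", "agent_plan"
    dependent_steps: int  # how many steps build on earlier steps
    high_stakes: bool     # is a wrong answer expensive?


def pick_effort(task, step_threshold=3):
    """Pay for extra reasoning only when the task's shape or stakes justify it."""
    if task.high_stakes or task.dependent_steps >= step_threshold:
        return Effort.HIGH
    return Effort.LOW
```

For example, `pick_effort(Task("routing", 1, False))` returns `Effort.LOW`, while a regulatory summary flagged as high stakes gets `Effort.HIGH` regardless of step count.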

4) Output control is where “AI demos” become “AI products”

If you want AI in workflows (calendar events, CRM updates, deal screening), your core problem is not “intelligence”. It’s the reliability of outputs.

This is not just an engineering convenience. It’s a commercial unlock:

Procurement cares about determinism.

Compliance cares about traceability.

Operations cares about error rates at scale.

If you want a concrete reference, Anthropic documents its structured outputs feature and how it combines with strict tool use to validate parameters. (platform.claude.com)

In practice: design your AI feature as if it were an API integration.

define a schema

validate hard

retry with constraints

log failures as product signals (not “model weirdness”)
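
The four steps above can be sketched with nothing but the standard library. Everything named here is an assumption for illustration: the calendar-event schema, the `call_model` callable (any function that takes a prompt string and returns text), and the wording of the retry instruction.

```python
import json

# Hypothetical schema for a calendar event: field name -> required Python type.
REQUIRED_FIELDS = {"title": str, "start": str, "duration_minutes": int}


def validate(payload):
    """Return (data, errors); data is None whenever errors is non-empty."""
    try:
        data = json.loads(payload)
    except json.JSONDecodeError as exc:
        return None, ["invalid JSON: " + exc.msg]
    if not isinstance(data, dict):
        return None, ["top-level value must be an object"]
    errors = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            errors.append("missing field: " + field)
        elif type(data[field]) is not expected:  # exact type, so True is not an int
            errors.append(field + " must be " + expected.__name__)
    return (None, errors) if errors else (data, [])


def generate_event(call_model, prompt, max_attempts=3, failure_log=None):
    """Call the model, validate hard, and retry with the errors fed back in."""
    errors = []
    for attempt in range(1, max_attempts + 1):
        constrained = prompt
        if errors:
            constrained += "\nFix these problems and return only JSON: " + "; ".join(errors)
        data, errors = validate(call_model(constrained))
        if data is not None:
            return data
        if failure_log is not None:
            # Failures are product signals: record them instead of discarding them.
            failure_log.append({"attempt": attempt, "errors": errors})
    raise ValueError("no valid output after %d attempts" % max_attempts)
```

In production you would more likely lean on a provider's structured-output mode plus a schema library, but the loop has the same shape: schema, validation, constrained retry, logged failure.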

5) Multimodal is powerful, but treat images/audio like premium bandwidth

A 512×512 image can cost ~1,000 tokens, and the count scales with the number of pixels.

That number is strategically important: multimodal can be a cost trap.

If the user’s intent is text, convert everything to text as early as possible (OCR/ASR) and keep images out of the loop.

If the image is the product (insurance claims, defect detection, radiology triage), keep it multimodal but tightly scoped: crop, compress, and ask the model specific questions.

Google’s Gemini API pricing and model line-up is a useful place to sanity-check multimodal cost assumptions. (Google AI for Developers)
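
To get a feel for why cropping and compressing matter, here is a back-of-the-envelope estimator. It assumes token cost scales linearly with pixel count from the ~1,000 tokens per 512×512 image figure quoted above; real providers use their own tiling and resizing rules, so treat the output as an order-of-magnitude guess and check the vendor's documentation. The function names are hypothetical.

```python
# Assumption taken from the rule of thumb above: 512x512 pixels is roughly 1,000 tokens.
BASELINE_PIXELS = 512 * 512
BASELINE_TOKENS = 1000


def estimate_image_tokens(width, height):
    """Linear-in-pixels estimate; actual provider accounting will differ."""
    return round(BASELINE_TOKENS * (width * height) / BASELINE_PIXELS)


def downscale(width, height, max_side):
    """Shrink so the longest side is at most max_side, keeping the aspect ratio."""
    scale = min(1.0, max_side / max(width, height))
    return max(1, round(width * scale)), max(1, round(height * scale))
```

Under this assumption a 2048×1024 photo costs about 8,000 tokens as-is and about 500 once downscaled to a 512-pixel longest side, which is why "crop, compress, ask a specific question" is a cost control as much as a quality one.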

6) Self-hosting: do the breakeven maths before you do the architecture

Compare renting GPUs (8× H100 at $2/hr/GPU) with paying per token for an API model: you typically need to process billions of tokens before self-hosting breaks even.

Then add the hidden costs: more DevOps, unreliable GPUs, questionable privacy gains compared to managed enterprise platforms, and open-source models that consistently lag the frontier.
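
The breakeven arithmetic is short enough to write down. This sketch uses the 8× H100 at $2/hr/GPU figure from above; the 730 hours per month and the $5 per million tokens blended API price are assumptions chosen only to show the calculation. It deliberately ignores staff time, utilisation, and whether the cluster can actually serve that volume, all of which push the real breakeven higher.

```python
def monthly_gpu_cost(gpus=8, usd_per_gpu_hour=2.0, hours_per_month=730):
    """Rental cost of keeping the cluster up all month, in USD."""
    return gpus * usd_per_gpu_hour * hours_per_month


def breakeven_tokens_per_month(api_usd_per_million_tokens, **cluster):
    """Monthly token volume at which the API bill equals the GPU rental bill."""
    return monthly_gpu_cost(**cluster) / api_usd_per_million_tokens * 1_000_000


# 8 GPUs x $2/hr x 730 hr = $11,680 per month.
# At an assumed $5 per million tokens that is about 2.3 billion tokens per month.
tokens_needed = breakeven_tokens_per_month(5.0)
```

Run it with your own prices before the architecture discussion starts; if your monthly volume is well below the result, the cost argument for self-hosting is already over.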

The non-obvious executive takeaway:

Self-hosting is rarely a cost strategy. It’s usually a control strategy.

Choose it only if you can name:

data residency constraints you can’t satisfy otherwise

extreme latency constraints at the edge

offline / air-gapped requirements

bespoke model behaviour you can’t get via prompting + tools

If your reason is “it’ll be cheaper”, you’re likely about to build an expensive GPU hobby.

7) Finetuning: assume “no” until you’ve exhausted cheaper levers

Teams often say “we have proprietary data, we should finetune”. Don’t do this first. It takes far more effort than expected, especially in data engineering, and frontier models are already strong at in-context learning.

Before finetuning:

prompt optimisation (manual or tools like DSPy)

RAG / search

tool use (including MCP-style servers)

The order is not academic. It is the cheapest path to “better answers”:

Get the right data in (RAG)

Force the right structure out

Only then consider changing the model weights (finetune)

8) The strategy that survives model churn: modularity + evals + context management

Models change constantly. Six-month-old choices can become irrelevant, and the “best model” depends on your need.

So what should you focus on instead?

Modularity

Design your stack so you can swap models without rewriting your product. A routing layer such as OpenRouter can make switching easier.

OpenRouter itself describes the unified API approach and fallbacks in its documentation. (OpenRouter)
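
Whatever gateway you use, the product code should depend on a narrow interface rather than on a vendor SDK. The sketch below shows one possible shape; `TextModel`, `ModelUnavailable`, `StubModel`, and `complete_with_fallback` are hypothetical names for this example and are not part of OpenRouter or any other library.

```python
from typing import Protocol


class ModelUnavailable(Exception):
    """Raised by an adapter when its provider cannot serve the request."""


class TextModel(Protocol):
    """The only surface the business logic is allowed to see."""
    name: str

    def complete(self, prompt: str) -> str: ...


class StubModel:
    """Stand-in for a real provider adapter, for illustration only."""

    def __init__(self, name, reply=None):
        self.name = name
        self._reply = reply

    def complete(self, prompt):
        if self._reply is None:
            raise ModelUnavailable(self.name + " is down")
        return self._reply


def complete_with_fallback(models, prompt):
    """Try each model in order; return (model_name, text) from the first that answers."""
    failures = []
    for model in models:
        try:
            return model.name, model.complete(prompt)
        except ModelUnavailable as exc:
            failures.append(str(exc))
    raise ModelUnavailable("all models failed: " + "; ".join(failures))
```

Swapping a model then means writing one new adapter and changing the order of a list, and returning the model name alongside the text keeps your logs honest about which model actually answered.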

Evals

A pragmatic starting set of evals:

correctness on 50–200 real historical cases

schema validity rate

tool-call success rate

“time to resolution” on agent tasks

human review disagreement rate
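
A starter eval report does not need a framework. The sketch below aggregates per-case results into the rates listed above; the `EvalResult` fields and the `summarise` function are assumptions for illustration, and how each case is judged correct (exact match, rubric, human label) is left to you. Time to resolution is omitted because it depends on how your agent runs are timed.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    correct: bool              # matched the known-good outcome for a historical case
    schema_valid: bool         # output parsed and passed schema validation
    tool_calls: int            # tool calls attempted in this case
    tool_calls_ok: int         # tool calls that succeeded
    reviewers_disagreed: bool  # human reviewers disagreed with the model's output


def summarise(results):
    """Aggregate a non-empty list of per-case results into headline rates."""
    if not results:
        raise ValueError("no eval results")
    n = len(results)
    total_calls = sum(r.tool_calls for r in results)
    ok_calls = sum(r.tool_calls_ok for r in results)
    return {
        "correctness": sum(r.correct for r in results) / n,
        "schema_validity_rate": sum(r.schema_valid for r in results) / n,
        # None when no tools were called, so a missing signal is not read as 100%.
        "tool_call_success_rate": ok_calls / total_calls if total_calls else None,
        "review_disagreement_rate": sum(r.reviewers_disagreed for r in results) / n,
    }
```

Run the same cases against every candidate model and every prompt change, and the "which model is best?" argument turns into a table.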

That is the play: reduce guessing.

constrain inputs

retrieve precisely

structure outputs

measure continuously

Two decisions

Pick one workflow and isolate the model behind an interface, so that when the model changes, your business logic stays stable.

Instrument tokens and failures. If you can’t see token spend and error modes, you can’t manage them, only argue about them.

That’s how you stop “AI models” from being a vendor conversation and turn them into an operating advantage.