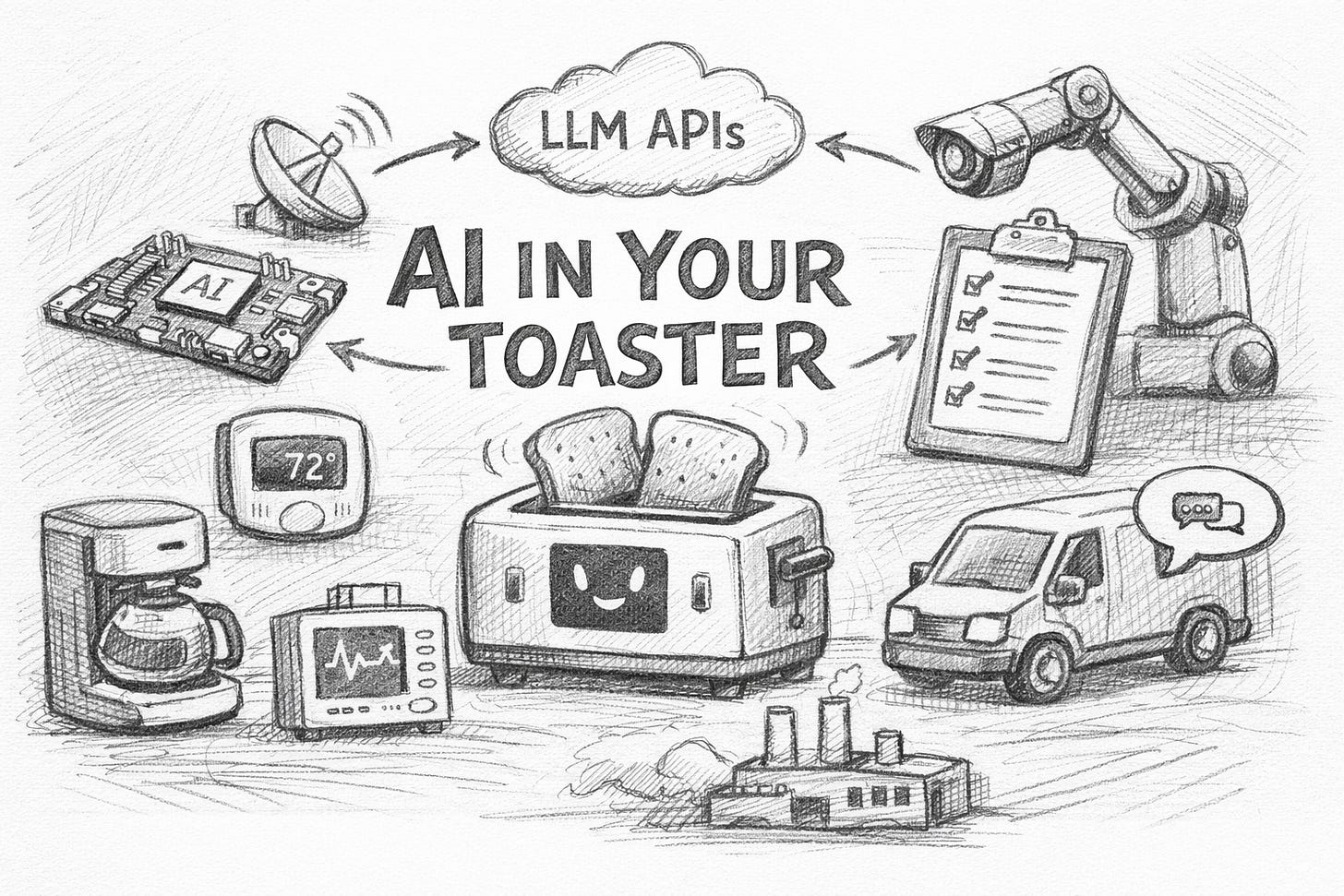

AI in Your Toaster: PicoClaw

PicoClaw shows that generative AI is leaving the data center: assistants will soon run inside cheap devices throughout the enterprise.

Most “AI assistants” still assume a server, a GPU, or at least a mini-PC. PicoClaw is different. It’s an ultra-lightweight assistant written in Go that uses under 10 MB of RAM and starts in under one second. It ships as a single binary and targets RISC-V, ARM, and x86, from $10 embedded boards to ordinary servers. (PicoClaw)

That sounds like a hobby project, until you see the strategic signal: the “AI layer” is becoming a tiny, portable component that can sit almost anywhere.

What PicoClaw actually is

PicoClaw is not a small language model running on a microcontroller. It’s a local orchestration layer: a lightweight program that can live on cheap hardware and connect to external LLM APIs (e.g., GPT/Claude/GLM) for heavy reasoning, while keeping the “agent wrapper” local.
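To make the "local wrapper, remote model" split concrete, here is a minimal sketch in Go. It is not PicoClaw's actual code: the `Model` interface, the `Agent` type, and the `ping` command are hypothetical names chosen for illustration, and a stub replaces the real provider client so the example runs offline.

```go
package main

import (
	"fmt"
	"strings"
)

// Model is a hypothetical abstraction over a remote LLM API.
type Model interface {
	Complete(prompt string) (string, error)
}

// stubModel stands in for a real provider client so the sketch runs offline.
type stubModel struct{}

func (stubModel) Complete(prompt string) (string, error) {
	return "remote answer to: " + prompt, nil
}

// Agent is the local wrapper: it decides what to handle on the device
// and what to delegate to the remote model.
type Agent struct {
	model Model
}

// Handle answers trivial commands locally and forwards everything else.
func (a Agent) Handle(input string) (string, error) {
	switch strings.ToLower(strings.TrimSpace(input)) {
	case "ping":
		return "pong", nil // handled locally, no network call
	case "":
		return "", fmt.Errorf("empty input")
	}
	return a.model.Complete(input)
}

func main() {
	agent := Agent{model: stubModel{}}
	for _, in := range []string{"ping", "Summarise today's error log"} {
		out, err := agent.Handle(in)
		if err != nil {
			fmt.Println("error:", err)
			continue
		}
		fmt.Println(out)
	}
}
```

Because the wrapper only depends on the `Model` interface, swapping one provider for another would not change the code that runs on the device.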

It also plugs into chat surfaces like Telegram/Discord, which is a practical hint: distribution matters as much as model quality.

Why executives should care

The first wave of genAI was “chat in the browser.”

The second wave was “copilots inside apps.”

The next wave is genAI as an embedded capability, with small agents living close to where the work happens:

In factories: edge boxes near machines, summarising logs, guiding technicians, triggering tickets.

In retail and branches: on-site “store ops” assistants that keep running even with limited connectivity.

In IT/security: local watchers that triage alerts, enrich events, and escalate with context.

In products: appliances, vehicles, medical devices, kiosks—anything with Linux-class compute.

PicoClaw is an example of the enabler: a “thin client” for AI that’s cheap, fast to boot, and portable across architectures.

The real implication: AI becomes infrastructure at the edge

Once the runtime is tiny, compute stops being the blocker. The blockers become:

1) Governance of LLM calls

If thousands of little agents can call LLM APIs, you need:

approved providers/models

logging and retention rules

prompt/data policies

cost controls and rate limits
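As a rough illustration of how some of these controls could sit in front of every outgoing call, the sketch below checks an allow-list of models, enforces a simple call budget, and logs each decision. All names (`Policy`, `Gate`, `Authorize`, the model identifiers) are assumptions made for this example, and prompt/data policies and retention rules are deliberately left out.

```go
package main

import (
	"errors"
	"fmt"
	"log"
	"os"
)

// Policy holds hypothetical governance settings for one device.
type Policy struct {
	AllowedModels map[string]bool // approved provider/model identifiers
	MaxCalls      int             // call budget for the current period
}

// Gate enforces the policy and logs every decision.
type Gate struct {
	policy Policy
	calls  int
	logger *log.Logger
}

var (
	ErrModelNotApproved = errors.New("model not approved")
	ErrBudgetExceeded   = errors.New("call budget exceeded")
)

// NewGate builds a gate that logs to standard output.
func NewGate(p Policy) *Gate {
	return &Gate{policy: p, logger: log.New(os.Stdout, "llm-gate ", 0)}
}

// Authorize checks an outgoing call before it leaves the device.
func (g *Gate) Authorize(model string) error {
	if !g.policy.AllowedModels[model] {
		g.logger.Printf("deny model=%s reason=not-approved", model)
		return ErrModelNotApproved
	}
	if g.calls >= g.policy.MaxCalls {
		g.logger.Printf("deny model=%s reason=budget", model)
		return ErrBudgetExceeded
	}
	g.calls++
	g.logger.Printf("allow model=%s calls=%d/%d", model, g.calls, g.policy.MaxCalls)
	return nil
}

func main() {
	gate := NewGate(Policy{
		AllowedModels: map[string]bool{"approved-model": true},
		MaxCalls:      2,
	})
	for _, m := range []string{"approved-model", "unknown-model", "approved-model", "approved-model"} {
		if err := gate.Authorize(m); err != nil {
			fmt.Println("blocked:", err)
		}
	}
}
```

In a real fleet the policy would more likely be fetched from a central service than hard-coded, so that approvals and budgets can change without redeploying the binary.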

2) Security and supply chain

A “small binary everywhere” is still software everywhere. That calls for:

signing and update strategy

secrets management (API keys on edge devices are a real risk)

device identity and access controls

Even PicoClaw’s repo emphasises “official channels” and security caution, because wide distribution quickly attracts impersonation.

3) Reliability

When the model is remote, your business process depends on:

network quality

provider uptime

latency

fallback behaviour (what happens when the LLM can’t be reached?)
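One way to answer the fallback question is to give every remote call a deadline and a predefined local response. The sketch below shows that pattern under stated assumptions: `CallFunc`, `WithFallback`, the simulated `healthy` and `unreachable` providers, and the 100 ms timeout are all hypothetical and only illustrate the idea.

```go
package main

import (
	"context"
	"fmt"
	"time"
)

// CallFunc is a hypothetical signature for a remote LLM call.
type CallFunc func(ctx context.Context, prompt string) (string, error)

// demoTimeout is an illustrative deadline, not a recommended value.
const demoTimeout = 100 * time.Millisecond

// WithFallback wraps call with a deadline. The returned function reports
// the text to use and whether it actually came from the model.
func WithFallback(call CallFunc, timeout time.Duration, fallback string) func(string) (string, bool) {
	return func(prompt string) (string, bool) {
		ctx, cancel := context.WithTimeout(context.Background(), timeout)
		defer cancel()

		type result struct {
			text string
			err  error
		}
		ch := make(chan result, 1) // buffered so the goroutine never blocks after a timeout
		go func() {
			text, err := call(ctx, prompt)
			ch <- result{text, err}
		}()

		select {
		case r := <-ch:
			if r.err != nil {
				return fallback, false
			}
			return r.text, true
		case <-ctx.Done():
			return fallback, false
		}
	}
}

// healthy simulates a provider that answers immediately.
func healthy(_ context.Context, prompt string) (string, error) {
	return "answer to: " + prompt, nil
}

// unreachable simulates a provider that does not answer before the deadline.
func unreachable(ctx context.Context, _ string) (string, error) {
	select {
	case <-time.After(5 * time.Second):
		return "late answer", nil
	case <-ctx.Done():
		return "", ctx.Err()
	}
}

func main() {
	fallback := "Model unavailable; request queued for later."
	for _, call := range []CallFunc{healthy, unreachable} {
		text, fromModel := WithFallback(call, demoTimeout, fallback)("Summarise alert 123")
		fmt.Println(fromModel, text)
	}
}
```

Returning the `fromModel` flag alongside the text lets the surrounding business process tell a real answer from a degraded one, for example to queue the request for a retry.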

A practical way to think about it

Treat this emerging pattern like you treated mobile:

Start with 2–3 high-frequency workflows (triage, reporting, scheduling, customer follow-ups).

Standardise the “agent runtime” (one supported lightweight client + one policy layer).

Centralise observability (what it did, what it asked the model, what data left the device).

Build for mixed intelligence (some logic local, some delegated to LLMs).
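For the observability point, it can help to agree early on what a single audit record looks like. The following sketch defines one possible shape and serialises it as a JSON line; the `AuditRecord` schema, its field names, and the sample values are assumptions for illustration, not an established format.

```go
package main

import (
	"encoding/json"
	"fmt"
	"time"
)

// AuditRecord is a hypothetical schema for one agent action.
type AuditRecord struct {
	DeviceID  string    `json:"device_id"`
	Time      time.Time `json:"time"`
	Action    string    `json:"action"`           // what the agent did
	Model     string    `json:"model,omitempty"`  // empty when handled locally
	Prompt    string    `json:"prompt,omitempty"` // what it asked the model
	BytesSent int       `json:"bytes_sent"`       // how much data left the device
}

// sampleTime is a fixed timestamp used only to keep the demo deterministic.
var sampleTime = time.Date(2025, time.January, 2, 3, 4, 5, 0, time.UTC)

// NewRemoteCall builds the record for a delegated call.
// BytesSent is approximated by the prompt length in bytes.
func NewRemoteCall(deviceID, model, prompt string, now time.Time) AuditRecord {
	return AuditRecord{
		DeviceID:  deviceID,
		Time:      now,
		Action:    "remote_call",
		Model:     model,
		Prompt:    prompt,
		BytesSent: len(prompt),
	}
}

// Encode serialises a record as one JSON line for a central collector.
func Encode(r AuditRecord) (string, error) {
	b, err := json.Marshal(r)
	if err != nil {
		return "", err
	}
	return string(b), nil
}

func main() {
	rec := NewRemoteCall("edge-042", "approved-model", "Summarise the last 50 log lines", sampleTime)
	line, err := Encode(rec)
	if err != nil {
		fmt.Println("encode error:", err)
		return
	}
	fmt.Println(line)
}
```

Whether the full prompt belongs in the record is itself a data-policy decision; storing a hash or a redacted version may be more appropriate for sensitive workflows.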

What to do this quarter

Ask your CTO/CISO: “How many endpoints could call an LLM next year, and how do we govern that?”

Pilot one edge use case where fast boot time and a low footprint matter (industrial PC, branch server, on-prem box).

Create an internal rule: no LLM calls without logging, policy, and key management.

PicoClaw won’t be the final platform. But it’s a clear sign that “LLMs everywhere” won’t mean GPUs everywhere. It will mean small agent runtimes everywhere, connected to models, running quietly inside the systems your company already owns.