AI-First Workflows

AI is not a faster assistant; it is a new operating model that reallocates decisions, risk, and accountability every day.

AI-First Workflows: The Practical Playbook for Redesigning Decisions

Most organisations talk about AI as if it were a tool upgrade.

A better mental model is this: AI changes how decisions should be made, not just how fast tasks are completed.

That shift matters because most “AI projects” fail for a boring reason: teams automate the wrong things, keep the same handoffs, and add new complexity on top. They end up with more drafts, more reviews, more Slack messages, and the same bottlenecks, just faster.

The winners do something different. They redesign workflows so the right “agent” handles each decision:

Autonomous AI for low-risk, fully specified work

AI + human in the loop for quality control at scale

Human + AI assist for judgement-heavy, high-stakes decisions

This is not a tooling conversation. It is a system design problem.

Below is a simple, structured way to move from “workflow” to “AI-first workflow”, using six steps (numbered 0 to 5) and a few hard questions that make allocation obvious. The approach is based on an insightful webinar I attended from the Board of Innovation, along with several personal notes.

Key takeaways

AI doesn’t optimise workflows—it redesigns them. The biggest gains come from changing decision paths, not speeding up tasks.

The problem is allocation, not automation. Performance improves when each decision is routed to the cheapest agent that still meets the quality requirements.

Advantage comes from system design, not tools. Your edge lies at the interface between AI and humans—especially in escalation rules.

The AI-first workflow in six steps

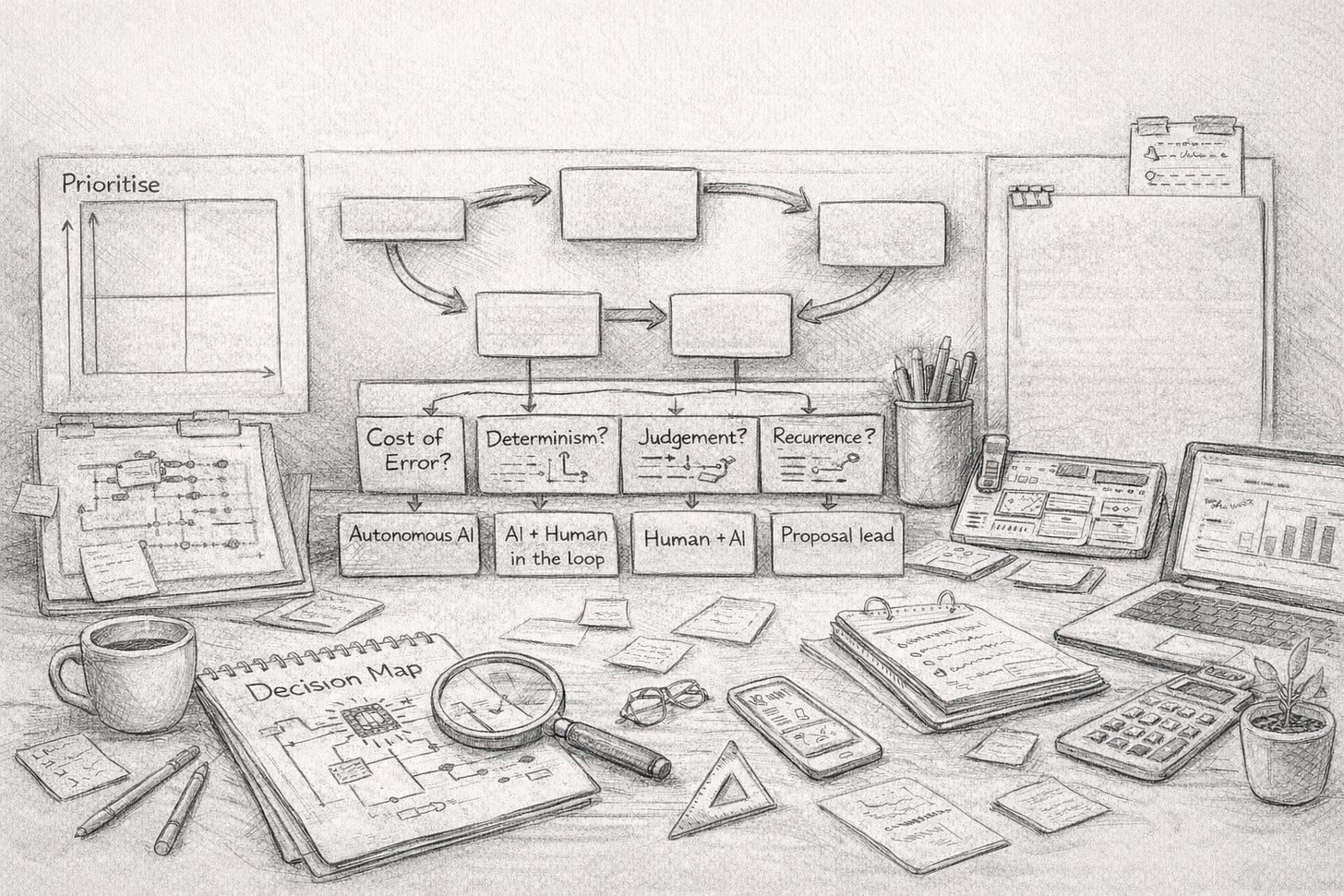

Think of this as a loop, not a one-off transformation. You map, allocate, operate, measure, and then reallocate as models and confidence improve.

Step 0 — Prioritise: choose what to redesign first

Not every workflow is worth touching. Pick one where:

There is volume (enough repetition to matter)

There are clear inputs and outputs

The workflow has visible wait states (handoffs, approvals, queueing)

The outcome affects cost, revenue, risk, or customer experience

Use a simple 2x2 to decide:

Readiness (data quality, standardisation, stable process)

Strategic leverage (impact if improved)

Start here: high readiness + high leverage.

Quick wins: high readiness + low leverage.

Invest to unlock: low readiness + high leverage (often data/process clean-up first).

Deprioritise: low readiness + low leverage.

This avoids the classic trap: spending months trying to “AI” the messiest process in the business.
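To make the 2x2 concrete, here is a minimal sketch in Python. The 0 to 1 scores, the 0.5 threshold, and the example workflows are all assumptions for illustration; in practice you would score readiness and leverage however your team agrees to.

```python
from dataclasses import dataclass


@dataclass
class Workflow:
    name: str
    readiness: float  # hypothetical 0..1 score: data quality, standardisation, stable process
    leverage: float   # hypothetical 0..1 score: impact if improved


def quadrant(w: Workflow, threshold: float = 0.5) -> str:
    """Place a workflow in one of the four quadrants of the 2x2."""
    ready = w.readiness >= threshold
    high_leverage = w.leverage >= threshold
    if ready and high_leverage:
        return "start here"
    if ready:
        return "quick win"
    if high_leverage:
        return "invest to unlock"
    return "deprioritise"


# Illustrative candidates only; names and scores are made up.
candidates = [
    Workflow("invoice matching", readiness=0.8, leverage=0.7),
    Workflow("meeting notes", readiness=0.9, leverage=0.2),
    Workflow("pricing exceptions", readiness=0.3, leverage=0.9),
    Workflow("ad hoc reports", readiness=0.2, leverage=0.1),
]

for w in candidates:
    print(f"{w.name}: {quadrant(w)}")
```

The point of writing it down is not precision. It forces you to score every candidate on the same two axes before anyone argues for a favourite.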

Step 1 — Map the decision flow (not the process chart)

Process maps often lie because they show the “happy path”.

You want a decision map:

Every decision point (approve, reject, classify, escalate, price, route, interpret)

Every handoff (who receives it next, and why)

Every wait state (queues, missing info, ambiguity, policy questions)

A practical method: take 20 real cases from the last month and trace them end-to-end. Highlight:

Where people ask for clarification

Where work bounces back

Where quality is checked

Where exceptions appear

You are not mapping tasks. You are mapping uncertainty.
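If you record the traced cases in a simple structure, the uncertainty hotspots fall out of a tally. The sketch below assumes each case is a list of (decision point, event) pairs; the event labels and the three sample cases are hypothetical.

```python
from collections import Counter

# Events that signal uncertainty at a decision point (hypothetical labels).
FRICTION = {"clarification", "bounce", "exception"}

# Each traced case is a list of (decision_point, event) pairs.
# Three made-up cases stand in for the 20 real ones you would trace.
cases = [
    [("classify", "ok"), ("price", "clarification"), ("approve", "ok")],
    [("classify", "exception"), ("price", "ok"), ("approve", "bounce")],
    [("classify", "ok"), ("price", "clarification"), ("approve", "ok")],
]


def friction_by_decision(traced_cases):
    """Count friction events per decision point across all traced cases."""
    counts = Counter()
    for case in traced_cases:
        for decision_point, event in case:
            if event in FRICTION:
                counts[decision_point] += 1
    return counts


hotspots = friction_by_decision(cases)
for decision_point, count in hotspots.most_common():
    print(f"{decision_point}: {count} friction events")
```

The decision points at the top of that list are where allocation and escalation design will matter most.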

Step 2 — Define constraints and allocate work

Now allocate each decision to one of three archetypes.

The three archetypes

Autonomous AI

AI runs end-to-end. No human in the loop. Best for low-risk, fully specifiable tasks with clear rules.

AI + human in the loop

AI produces an output; a human reviews and approves it before it is final. Best when you need both speed and control.

Human + AI assist

Humans lead; AI supports with research, options, drafting, and comparisons. Best for high-stakes, judgement-heavy work.

The four questions that make allocation obvious

For each decision, answer these in plain language:

Cost of error: what happens if it’s wrong? Does it need to be right the first time?

Determinism: can you fully specify the task with clear inputs/outputs and rules?

Judgement: is tacit knowledge required (context, politics, risk appetite, relationship nuance)?

Recurrence: does this repeat with patterns, or is it mostly one-off?

A simple rule:

Low cost of error + deterministic + recurring → Autonomous AI

Medium cost of error or partial determinism → AI + human in the loop

High judgement or high cost of error → Human + AI assist

This is where most organisations unlock value: not by buying a better model, but by properly routing decisions.
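The simple rule can be sketched as a small routing function. This is one possible reading of it, not a definitive implementation: the three-level cost scale, the boolean flags, and the choice to send anything the rule does not explicitly cover to “AI + human in the loop” are assumptions.

```python
from enum import Enum


class Level(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Archetype(Enum):
    AUTONOMOUS_AI = "Autonomous AI"
    AI_HUMAN_IN_LOOP = "AI + human in the loop"
    HUMAN_AI_ASSIST = "Human + AI assist"


def allocate(cost_of_error: Level, deterministic: bool,
             high_judgement: bool, recurring: bool) -> Archetype:
    """Route one decision to an archetype using the four questions.

    Pass deterministic=False for partially specified tasks.
    """
    # High judgement or high cost of error: a human leads.
    if high_judgement or cost_of_error is Level.HIGH:
        return Archetype.HUMAN_AI_ASSIST
    # Low cost of error, deterministic, and recurring: AI runs end-to-end.
    if cost_of_error is Level.LOW and deterministic and recurring:
        return Archetype.AUTONOMOUS_AI
    # Everything else (medium cost, partial determinism, one-offs)
    # gets a human review step. This default is an assumption.
    return Archetype.AI_HUMAN_IN_LOOP


print(allocate(Level.LOW, deterministic=True, high_judgement=False, recurring=True).value)
print(allocate(Level.MEDIUM, deterministic=True, high_judgement=False, recurring=True).value)
print(allocate(Level.LOW, deterministic=True, high_judgement=True, recurring=True).value)
```

Checking the human-led conditions first is deliberate: when the questions disagree, the more cautious archetype wins.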

Step 3 — Define escalation logic: design the AI–human interface

This is the centrepiece.

If Step 2 decides who should own a decision, Step 3 decides when ownership switches.

Borrow a proven principle from operational excellence: Jidoka, which means building quality into the process by detecting abnormal conditions and stopping or escalating immediately. In classic operations, it means the line can stop when something is wrong; the system prevents defects from flowing downstream. (Toyota Motor Corporation official corporate website)

In AI-first workflows, “stop the line” becomes: escalate to a human when a trigger fires.

The escalation design template

For each trigger, define three things:

Trigger condition (what the AI detects)

Escalate to (role, not person)

Information package (what the human needs to decide fast)

Example: compliance verification workflow

Trigger: “Unknown requirement” (policy gap)

Escalate to: compliance lead

Package: relevant clause + closest matches + confidence score + source references

Trigger: “Borderline match” (high consequence if wrong)

Escalate to: bid/transaction owner

Package: exact gap + risk exposure + options + recommended wording

Trigger: “Contradictory clauses” (logic conflict)

Escalate to: proposal/legal owner

Package: conflicting sections + page refs + suggested resolution paths

The key insight: the trigger is designed, not accidental. If you do not design escalation, you get random escalations—people lose trust and revert to manual work.

This is also where governance becomes real. A framework like NIST’s AI Risk Management Framework (AI RMF) treats AI risk as a socio-technical issue: not only the model, but also how people deploy and oversee it across its lifecycle. (NIST Technical Publications)
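The escalation template maps naturally onto a small lookup table. Here is a minimal sketch that encodes the three compliance triggers from the example; the identifiers, field names, and sample findings are hypothetical, and a real system would plug this into whatever detects the trigger in the first place.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EscalationRule:
    escalate_to: str       # a role, not a person
    package_fields: tuple  # what the human needs to decide fast


# The three triggers from the compliance example, as a lookup table.
RULES = {
    "unknown_requirement": EscalationRule(
        "compliance lead",
        ("relevant_clause", "closest_matches", "confidence_score", "source_references"),
    ),
    "borderline_match": EscalationRule(
        "bid/transaction owner",
        ("exact_gap", "risk_exposure", "options", "recommended_wording"),
    ),
    "contradictory_clauses": EscalationRule(
        "proposal/legal owner",
        ("conflicting_sections", "page_refs", "suggested_resolution_paths"),
    ),
}


def escalate(trigger: Optional[str], findings: dict) -> Optional[dict]:
    """Return an escalation ticket, or None when no trigger fired."""
    if trigger is None:
        return None  # nothing abnormal detected; the AI keeps going
    rule = RULES[trigger]  # an undesigned trigger raises KeyError on purpose
    missing = [f for f in rule.package_fields if f not in findings]
    if missing:
        raise ValueError(f"incomplete information package, missing: {missing}")
    return {
        "trigger": trigger,
        "escalate_to": rule.escalate_to,
        "package": {f: findings[f] for f in rule.package_fields},
    }


ticket = escalate("unknown_requirement", {
    "relevant_clause": "Clause 7.2",
    "closest_matches": ["Policy A", "Policy C"],
    "confidence_score": 0.41,
    "source_references": ["tender.pdf, p. 12"],
})
print(ticket["escalate_to"])
```

Two choices are worth noting. An unknown trigger fails loudly rather than guessing a recipient, and an incomplete package is rejected, because a human who has to go hunting for context is no faster than one doing the work manually.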

Step 4 — Design the human role (it changes)

In AI-first workflows, humans should not be “executors”.

They become:

Architects (define constraints, policies, routing)

Judges (handle exceptions and edge cases)

Trainers (label outcomes, correct patterns, improve prompts/rules)

Owners (accountable for outcomes, not outputs)

This is a shift in capability. You need fewer people doing routine production work and more people doing quality control, exception handling, and system tuning.

A practical move: rewrite role expectations in one sentence:

Old: “produce and process”

New: “supervise, decide, and improve the machine”

Step 5 — Measure outcomes and reallocate continuously

AI-first workflows create a new advantage: every AI node is measurable by default.

But you must measure the right things. The goal is not “more AI usage”. The goal is outcomes at lower cost and controlled risk.

Metrics that matter (simple and operational)

Quality: error rate, rework rate, audit findings

Speed: cycle time, time-to-first-draft, time-to-decision

Cost: cost per decision, review hours per 100 cases

Risk control: escalation rate, high-severity incident count

Trust: human override rate (and why), user satisfaction
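Most of these metrics can be computed from a plain log of completed cases. The sketch below assumes a hypothetical per-case record with a handful of fields; the field names and sample values are illustrative, not a prescribed schema.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class CaseRecord:
    cycle_time_hours: float
    had_error: bool
    reworked: bool
    escalated: bool
    human_override: bool
    review_minutes: float


def summarise(records):
    """Compute a few of the operational metrics for one workflow node."""
    n = len(records)
    if n == 0:
        raise ValueError("no cases to summarise")
    return {
        "error_rate": sum(r.had_error for r in records) / n,
        "rework_rate": sum(r.reworked for r in records) / n,
        "escalation_rate": sum(r.escalated for r in records) / n,
        "override_rate": sum(r.human_override for r in records) / n,
        "avg_cycle_time_hours": mean(r.cycle_time_hours for r in records),
        "review_hours_per_100_cases": sum(r.review_minutes for r in records) / 60 / n * 100,
    }


# Four made-up cases for illustration.
log = [
    CaseRecord(2.0, False, False, False, False, 30),
    CaseRecord(4.0, True, True, True, True, 60),
    CaseRecord(1.0, False, False, False, False, 0),
    CaseRecord(1.0, False, False, True, False, 30),
]

for metric, value in summarise(log).items():
    print(f"{metric}: {value}")
```

Logging the reason alongside each override is left out here, but it is the field that tells you whether to fix the model, the rules, or the escalation trigger.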

Then do the most important part: reallocation.

As AI capability improves and your escalation design becomes reliable, tasks should migrate:

human-led → hybrid → autonomous

This is how the feedback loop compresses. It is also why process redesign beats “tool rollout”: it keeps paying you as models improve.
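Reallocation can be written down as an explicit promotion rule, so that moving a task along the ladder is a decision rather than a drift. The thresholds below are placeholders, and stepping a task back after a high-severity incident is my own assumption rather than part of the original framework.

```python
LADDER = ["human-led", "hybrid", "autonomous"]


def reallocate(current: str, error_rate: float, override_rate: float,
               cases_observed: int, high_severity_incidents: int = 0,
               max_error_rate: float = 0.02, max_override_rate: float = 0.05,
               min_cases: int = 200) -> str:
    """Move a task one step along the ladder based on measured outcomes.

    All thresholds are hypothetical defaults; set them per workflow.
    """
    i = LADDER.index(current)
    # Assumption: any high-severity incident moves the task one step back.
    if high_severity_incidents > 0:
        return LADDER[max(i - 1, 0)]
    reliable = (
        cases_observed >= min_cases
        and error_rate <= max_error_rate
        and override_rate <= max_override_rate
    )
    # Promote one step at a time, and only with enough evidence.
    if reliable:
        return LADDER[min(i + 1, len(LADDER) - 1)]
    return current


print(reallocate("hybrid", error_rate=0.01, override_rate=0.03, cases_observed=500))
print(reallocate("hybrid", error_rate=0.01, override_rate=0.03, cases_observed=50))
```

Requiring a minimum number of observed cases keeps a lucky week from promoting a task to autonomous.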

Recent thinking on scaling AI increasingly points to this same idea: redesign end-to-end processes and define human–AI roles, rather than chasing incremental automation. (BCG Global)

Your moat is the routing layer

Most competitors can access similar models.

Your durable advantage becomes:

your decision map,

your allocation logic,

your escalation triggers,

your measurement loop.

In other words, the workflow is the product.

If you treat AI as a chatbot bolted onto yesterday’s process, you get demos.

If you treat AI as a decision-routing system with designed escalation and continuous reallocation, you get compounding performance.

That is the difference between “using AI” and becoming AI-first.