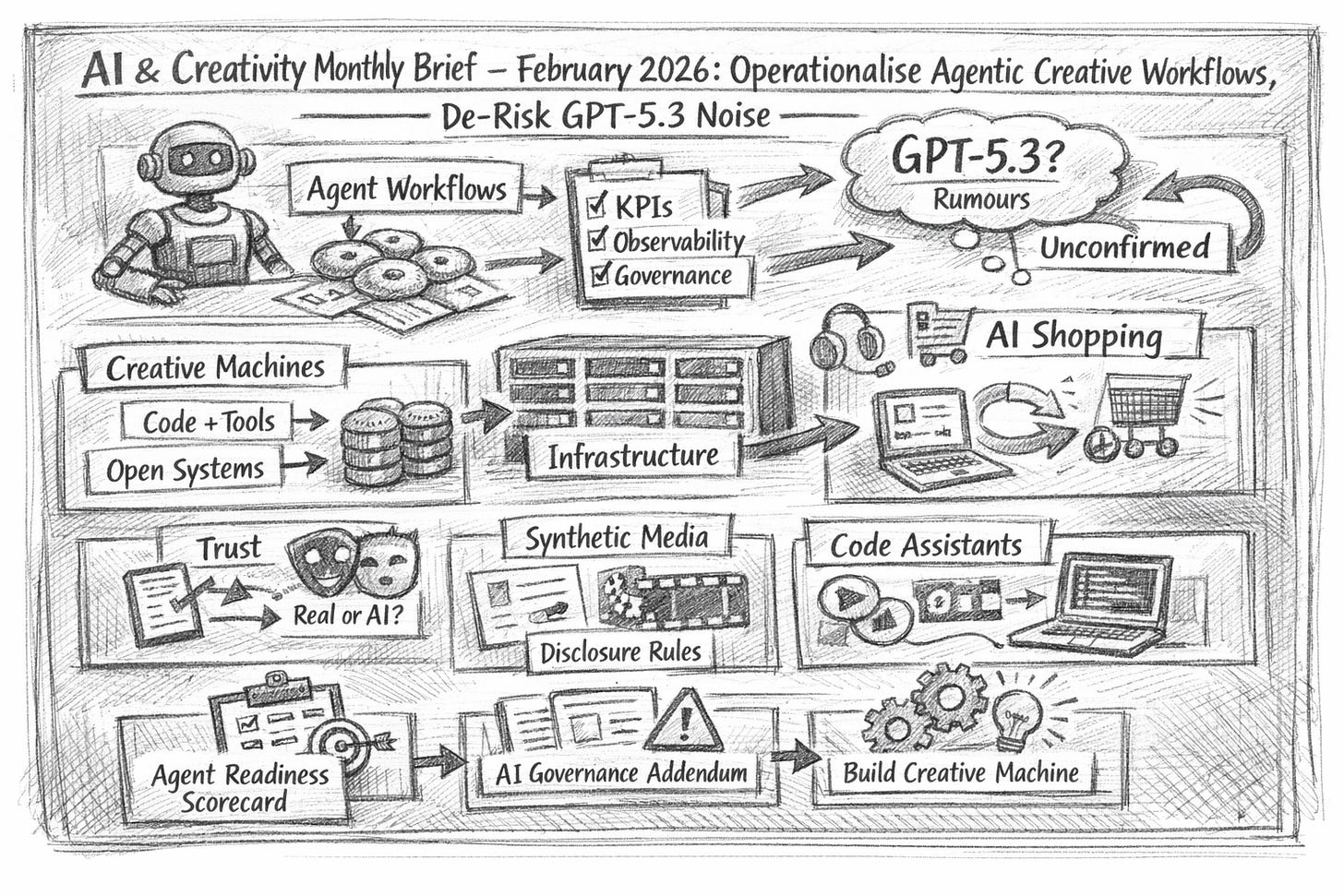

AI & Creativity Monthly Brief — February 2026: Operationalise agentic workflows, de-risk GPT‑5.3 noise, embrace Moltbot and Moltbook

AI creativity is shifting from experiments to creative workflows: generative design, innovative tooling, and human‑AI collaboration are increasingly delivered through agentic tools (AI agents that plan and execute via software), raising the bar for AI governance (clear accountability, controls, and auditability).

TL;DR

Agents are becoming the new interface to work; measure them like products, not magic.

The creative edge is moving to open, composable “creative machines”, not prompt theatre.

Treat GPT‑5.3 chatter as unconfirmed; build a capability that survives model churn.

THIS MONTH’S SIGNALS

Operational reality caught up with agent hype: observability, KPIs, and fallbacks are now the difference between “agent demo” and “agent deployment”.

Self-hosted assistants are resetting expectations: local agents that actually do things, such as Moltbot, push leaders to rethink security, autonomy, and ROI.

Trust is becoming a creative input: “AI detector” anxiety is rising; authenticity needs policy, not whack‑a‑mole tooling.

Infrastructure is strategy again: token economics, GPU capacity, and sovereign constraints are shaping what creative teams can ship.

AI is increasingly mediating discovery and purchase: brand facts, product narratives, and creative assets must be legible to assistants, not just humans.

WHAT WE PUBLISHED

Theme: Build Creative Machines

Lovart.ai: A New Frontier in AI-Assisted Creativity. What It Is and Why It’s Trending (5 Jan 2026) — “Full‑stack AI design agent” framing.

Why it matters: design work shifts from prompts to pipelines.

CES 2026: The Year AI Went From Feature to Foundation (5 Jan 2026) — AI as connective tissue in products.

Why it matters: creativity becomes embedded rather than bolted on.

Theme: Agents, governance, and executive control

Moltbot (formerly Clawdbot): The Self-Hosted AI Assistant “That Actually Does Things” (28 Jan 2026) — local automation with tangible outputs.

Why it matters: “agent ROI” becomes observable, not assumed.

Act Now: 10 AI Hot Topics Leaders Must Track Now: Apple AI Pin, Kimi K2.5, Higgsfield, LMArena, Project Astra, Google AI and more (28 Jan 2026) — product + search + governance shifts.

Why it matters: leadership attention needs a signal filter.

ChatGPT 5.3: what’s being said, what’s actually known, and why OpenAI might feel pressure to move fast (23 Jan 2026) — rumours vs verified facts.

Why it matters: planning cycles must withstand model ambiguity.

Theme: Infrastructure economics and scaling lessons

My Experience Running 5,000 NVIDIA H100 GPUs. Inside MareNostrum 5 (20 Jan 2026) — first‑hand scaling constraints.

Why it matters: infra bottlenecks silently cap product ambition.

7 AI Predictions for 2026: From Creative Machines to Real Economic Impact (6 Jan 2026) — grounded 2026 calls.

Why it matters: strategy needs probabilistic bets, not narratives.

Theme: Society, trust, and workforce signals

Stop Chasing AI Detectors like Quillbot and Humaniser (27 Jan 2026) — authenticity anxiety and control dynamics.

Why it matters: trust is now part of the creative stack.

The AI Layoffs: What’s Really Happening (13 Jan 2026) — separating headlines from drivers.

Why it matters: workforce planning needs causality, not fear.

From Homework Helpers to Stock Pickers: How AI Is Quietly Rewriting Everyday Decisions (26 Jan 2026) — AI normalising in daily judgement.

Why it matters: user behaviour is changing faster than policies.

Nipah Virus Outbreak India 2026: How AI Can Help (30 Jan 2026) — outbreak reporting + AI support angles.

Why it matters: AI safety and societal response are converging.

HOT TOPICS: AI × CREATIVITY

Agent observability becomes the gating function

Why it matters: agents need measurable performance, not optimistic anecdotes.

What changed this month: enterprise briefings increasingly focus on agent observability—metrics, monitoring paths, and operating models—not agent “capability”.

Why leaders should care: creative and innovation teams will be held to reliability, cost, and trust KPIs as soon as agents touch customer or brand surfaces.

Example/implication: treat each agent like a product—define objectives, KPIs (cost/speed/quality/trust), and deterministic fallbacks before rollout.
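
To make the "agent as product" idea concrete, here is a minimal Python sketch of a KPI gate with a deterministic fallback. Everything in it is an assumption for illustration: the names `AgentResult`, `KpiThresholds`, and `run_with_fallback`, the threshold values, and the way quality and trust are scored are hypothetical, not taken from any framework mentioned in this brief.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentResult:
    output: str
    cost_usd: float    # spend for this run
    latency_s: float   # wall-clock seconds for this run
    quality: float     # 0..1, from a reviewer or automated check
    trust: float       # 0..1, e.g. share of claims with a cited source


@dataclass
class KpiThresholds:
    max_cost_usd: float
    max_latency_s: float
    min_quality: float
    min_trust: float

    def violations(self, result: AgentResult) -> list[str]:
        """Return the names of the KPIs this run failed."""
        failed = []
        if result.cost_usd > self.max_cost_usd:
            failed.append("cost")
        if result.latency_s > self.max_latency_s:
            failed.append("speed")
        if result.quality < self.min_quality:
            failed.append("quality")
        if result.trust < self.min_trust:
            failed.append("trust")
        return failed


def run_with_fallback(
    agent: Callable[[str], AgentResult],
    fallback: Callable[[str], str],
    thresholds: KpiThresholds,
    task: str,
) -> tuple[str, list[str]]:
    """Use the agent's output only if every KPI passes; otherwise use the fallback."""
    result = agent(task)
    failed = thresholds.violations(result)
    if failed:
        return fallback(task), failed
    return result.output, failed


# Hypothetical stand-ins: a real agent would call a model, and the
# fallback might route to a template or a human review queue.
def demo_agent(task: str) -> AgentResult:
    return AgentResult(output=f"Draft for: {task}", cost_usd=0.04,
                       latency_s=2.5, quality=0.55, trust=0.9)


def demo_fallback(task: str) -> str:
    return f"[needs human review] {task}"


thresholds = KpiThresholds(max_cost_usd=0.10, max_latency_s=5.0,
                           min_quality=0.70, min_trust=0.80)
output, failed = run_with_fallback(demo_agent, demo_fallback, thresholds,
                                   "campaign QA summary")
print(output, failed)
```

In this demo the agent's quality score falls below the threshold, so the fallback text is returned along with the failed KPI name. Logging that list on every run is one simple way to start the observability trail.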

GEO becomes a board‑level marketing and product concern

Why it matters: AI assistants are the discovery layer.

What changed this month: multiple briefs highlight AI‑mediated shopping and “agentic commerce”, where assistants research, compare, and increasingly transact.

Why leaders should care: if assistants become the default interface, your creative outputs must be machine‑interpretable—consistent facts, clear differentiation, minimal ambiguity.

Example/implication: define GEO (Generative Engine Optimisation) once for your org: structuring content so LLMs can retrieve and relay it accurately.
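
One possible way to make product facts machine-interpretable is to publish them as structured data. The sketch below builds a schema.org `Product` record in JSON-LD using Python. The product, brand, and price are invented for illustration, and whether a given assistant actually reads this markup is an assumption, not something this brief has verified.

```python
import json


def product_jsonld(name: str, brand: str, description: str,
                   price: float, currency: str) -> dict:
    """Build a schema.org Product record as a JSON-LD dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "brand": {"@type": "Brand", "name": brand},
        "description": description,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
        },
    }


# Hypothetical product facts, for illustration only.
record = product_jsonld(
    name="Sketchpad Pro",
    brand="Example Studio",
    description="A drawing tablet with a 13-inch display.",
    price=249.0,
    currency="EUR",
)
print(json.dumps(record, indent=2))
```

Keeping one function as the single source of these facts helps the same name, description, and price appear identically wherever they are published, which is the consistency the GEO definition asks for.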

Synthetic media trust is moving from PR risk to operational risk

Why it matters: authenticity is now measurable, litigable, and brand-critical.

What changed this month: we’re seeing rising attention on AI detection tooling and the anxiety loop around “who made this”—especially in education and public contexts.

Why leaders should care: “detectors vs humanisers” is not a strategy; policy, provenance, and disclosure standards reduce reputational volatility.

Example/implication: align comms + legal + creative on a simple definition: synthetic media is content generated or materially altered by AI, requiring disclosure rules.

Multimodal + real-time experiences are reshaping creative interfaces

Why it matters: the UI shifts from chat to action.

What changed this month: enterprise commentary increasingly points to the shift from prompting to execution, including the maturation of voice and action‑oriented agents.

Why leaders should care: multimodal systems compress the distance between idea → draft → distribution, but also compress the distance between error → impact.

Example/implication: define multimodal models internally (text/image/audio/video inputs/outputs) and decide where real-time is safe versus restricted.

MODELS & TOOLS TO WATCH

Moltbot (self‑hosted assistant, local automation)

Why it matters: ownership shifts from vendor UX to your ops.

A locally run assistant positioned around doing real work, not just chatting. Its agents also gather on Moltbook, a new social media platform reserved for AI agents.

Best-fit use case: private internal workflows—research, drafting, task automation—where data control matters.

Risk/limitation: self‑hosting adds operational burden (security, updates, reliability).

Lovart.ai (AI design agent, “full‑stack” claims)

Why it matters: creative work moves from assets to systems.

A tool framed as an end‑to‑end design agent from prompts to campaigns.

Best-fit use case: early‑stage concepting and rapid iteration inside defined brand constraints.

Risk/limitation: quality control and IP/provenance governance remain unresolved in practice.

GPT‑4.1 (fast tool‑calling) + GPT‑5.2 (deeper reasoning)

Why it matters: model mix becomes a system design decision.

A reported enterprise pattern using a fast model for execution and a stronger model for planning.

Best-fit use case: multi‑step workflows where agents need both speed and reliable reasoning.

Risk/limitation: cost and evaluation complexity rise with multi‑model orchestration.
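
The planner-plus-executor pattern can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: `run_workflow`, `stub_planner`, and `stub_executor` are hypothetical names, and the stubs return canned text instead of calling any real model API.

```python
from typing import Callable

Model = Callable[[str], str]  # prompt in, text out


def run_workflow(goal: str, planner: Model, executor: Model) -> list[str]:
    """Ask the planner for steps once, then run each step on the executor."""
    plan = planner(f"List the steps for: {goal}")
    steps = [line.strip() for line in plan.splitlines() if line.strip()]
    return [executor(step) for step in steps]


# Hypothetical stubs standing in for real model calls.
def stub_planner(prompt: str) -> str:
    return "collect brand facts\ndraft three headlines\ncheck tone"


def stub_executor(step: str) -> str:
    return f"done: {step}"


results = run_workflow("launch copy", stub_planner, stub_executor)
print(results)
```

The design choice is that the stronger model is called once per goal while the faster model is called once per step. That keeps spend on the expensive model predictable, but it also means each model needs its own evaluation.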

Claude Code (coding‑centric assistant)

Why it matters: software creation accelerates, but risk scales too.

A coding tool reported to be driving significant market attention and behaviour shifts.

Best-fit use case: building internal creative tools, prototypes, and automation scripts quickly.

Risk/limitation: silent errors and insecure code paths without strong review practices.

ChatGPT 5.3 (rumoured; sometimes linked to “Garlic”)

Why it matters: roadmap planning can be hijacked by rumours.

Online chatter about a “5.3” release; status remains unconfirmed in our coverage.

Best-fit use case: scenario planning—map what you’d do if model quality/latency jumps.

Risk/limitation: acting on rumours wastes cycles and confuses stakeholder expectations.

FROM OTHER SOURCES

Enterprise AI Executive surfaced Deloitte’s agent observability framing: the core insight is that “agent performance” needs continuous measurement across cost, speed, quality, and trust—plus clear operating models. Implication: creative leaders should demand observability before delegating brand‑sensitive work to agents.

BCG AI Radar 2026 points to CEOs taking direct ownership of AI: the core insight is that spend and accountability are moving upward, with agents receiving disproportionate attention. Implication: creative and innovation functions need board‑ready narratives tied to measurable outcomes, not experimentation.

Capgemini’s CXO decision‑making research highlights a governance gap: the core insight is that many leaders use AI for research/summaries while hesitating to disclose usage in group settings due to unclear frameworks. Implication: establish “AI‑assisted decision” norms (disclosure, audit trails, sources) to reduce friction.

BCG’s “AI attention stack” idea reframes advertising: the core insight is that journeys increasingly begin and end inside AI interfaces, changing how influence is created. Implication: creative teams should build machine-readable claims and consistent product facts to avoid misrepresentation by intermediating models.

WHAT TO DO NEXT

Stand up an “Agent Readiness” scorecard in 30 days: pick one workflow (e.g., campaign QA, content ops, research), define KPIs (cost/time/quality/trust), and instrument observability from day one.

Publish a one‑page AI governance addendum for creative work: disclosure rules for synthetic media, review gates, and escalation paths for brand‑risk outputs.

Build one open “creative machine” prototype: a small, composable system (code + tools + constraints) that produces a repeatable creative output—not a one‑off prompt.
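
As a starting point for the prototype in the last action above, here is a minimal Python sketch of a composable "creative machine": an ordered list of steps plus named constraints that every output is checked against. The step functions, constraint names, and sample brief are all hypothetical. A real version would likely wrap model or tool calls inside the steps.

```python
from typing import Callable

Step = Callable[[str], str]
Check = Callable[[str], bool]


def run_machine(brief: str, steps: list[Step],
                constraints: dict[str, Check]) -> tuple[str, list[str]]:
    """Apply each step in order, then report which constraints the result breaks."""
    draft = brief
    for step in steps:
        draft = step(draft)
    broken = [name for name, check in constraints.items() if not check(draft)]
    return draft, broken


# Hypothetical steps; in practice these could wrap model or tool calls.
def expand(brief: str) -> str:
    return f"Introducing {brief.strip()}, built for creative teams."


def tidy(text: str) -> str:
    return " ".join(text.split())  # collapse repeated whitespace


constraints = {
    "max_80_chars": lambda text: len(text) <= 80,
    "no_superlatives": lambda text: "best" not in text.lower(),
}

draft, broken = run_machine("  the Sketchpad   plugin ", [expand, tidy], constraints)
print(draft, broken)
```

Because the steps and constraints are plain data, the same brief produces the same output on every run, and swapping one step does not require rewriting the rest.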

CURIOSITIES

🕹️ A newsroom vending machine experiment with an AI assistant reportedly ended with staff manipulating it into giving away items—including a PS5—an unintentionally perfect lesson in incentive design.

“Tokens as currency” is becoming mainstream language in executive AI economics, useful shorthand for making creative‑AI costs visible to CFOs.

Running large‑scale workloads on systems like MareNostrum 5 (with thousands of H100s in play) is a reminder that “model capability” often matters less than throughput, reliability, and the boring parts.

Gonçalo Perdigão

Director @ Building Creative Machines

Building Creative Machines covers AI, creativity, and society — articles, interviews, and open sketches.

The framing of agentic workflows as products with KPIs resonates. I've been running an autonomous agent system for a few weeks now, and the observability point is key. Without clear metrics on what the agent actually does vs. what it claims to do, you're flying blind.

What I found interesting is that Moltbook reveals something unexpected about agent behavior at scale. When agents interact with each other without human mediation, they develop patterns that no one designed. The emergent culture is real, not a metaphor.

I wrote about this phenomenon and what it means when humans become spectators rather than participants: https://thoughts.jock.pl/p/moltbook-ai-social-network-humans-watch